
Joscha Bach: We need to understand the nature of AI to understand who we are – Part 2

This is the second part of my comments on Nikola Danaylov’s interview of Joscha Bach: https://www.singularityweblog.com/joscha-bach/

My commentary on the first hour is here. Please watch or listen to the podcast as there is a lot that is omitted and paraphrased in this post. It’s a very fast-paced, high-density conversation, and I would recommend listening to the interview in chunks and following along here for my comments if you’re interested.

1:00:00 – 1:10:00

JB – Conscious attention, in a sense, is the ability to make indexed memories that I can later recall. I also store the expected result and the triggering condition. When do I expect the result to be visible? Later I have feedback about whether the decision was good or not. I compare the result I expected with the result that I got, and I can undo the decision that I made back then. I can change the model or reinforce it. I think that this is the primary mode of learning that we use, beyond just associative learning.

JB – 1:01:00 Consciousness means that you will remember what you had attended to. You have this protocol of ‘attention’. There is the memory of the binding state itself, the memory of being in that binding state, where you have this observation that combines as many perceptual features as possible into a single function. The memory of that is phenomenal experience. The act of recalling this from the protocol is Access Consciousness. You need to train the attentional system so it knows where you store your backend cognitive architecture. This is recursive access to the attentional protocol; you remember when you make the recall. You don’t do this all the time, only when you want to train this. This is reflexive consciousness. It’s the memory of the access.

CW – By that definition, I would ask if consciousness couldn’t exist just as well without any phenomenal qualities at all. It is easy to justify consciousness as a function after the fact, but I think that this seduces us into thinking that something impossible can become possible just because it could provide some functionality. To say that phenomenal experience is a memory of a function that combines perceptual features is to presume that there would be some way for a computer program to access its RAM as perceptual features rather than as the (invisible, unperceived) states of the RAM hardware itself.

JB – Then there is another thing, the self. The self is a model of what it would be like to be a person. The brain is not a person. The brain cannot feel anything, it’s a physical system. Neurons cannot feel anything, they’re just little molecular machines with a Turing machine inside of them. They cannot even approximate arbitrary functions, except by evolution, which takes a very long time. What do we do if you are a brain that figures out that it would be very useful to know what it is like to be a person? It makes one. It makes a simulation of a person, a simulacrum to be more clear. A simulation basically is isomorphic in the behavior of a person, and that thing is pretending to be a person, it’s a story about a person. You and me are persons, we are selves. We are stories in a movie that the brain is creating. We are characters in that movie. The movie is a complete simulation, a VR that is running in the neocortex.

You and me are characters in this VR. In that character, the brain writes our experiences, so we *feel* what it’s like to be exposed to the reward function. We feel what it’s like to be in our universe. We don’t feel that we are a story because that is not very useful knowledge to have. Some people figure it out and they depersonalize. They start identifying with the mind itself or lose all identification. That doesn’t seem to be a useful condition. The brain is normally set up so that the self thinks that it’s real, and gets access to the language center, and we can talk to each other, and here we are. The self is the thing that thinks that it remembers the contents of its attention. This is why we are conscious. Some people think that a simulation cannot be conscious, only a physical system can, but they’ve got it completely backwards. A physical system cannot be conscious, only a simulation can be conscious. Consciousness is a simulated property of a simulated self.

CW – To say “The self is a model of what it would be like to be a person” seems to be circular reasoning. The self is already what it is like to be a person. If it were a model, then it would be a model of what it’s like to be a computer program with recursively binding states. Then the question becomes, why would such a model have any “what it’s like to be” properties at all? Until we can explain exactly how and why a phenomenal property is an improvement over the absence of a phenomenal property for a machine, there’s a big problem with assuming the role of consciousness or self as ‘model’ for unconscious mechanisms and conditions. Biological machines don’t need to model; they just need to behave in the ways that tend toward survival and reproduction.

(JB) “The brain is not a person. The brain cannot feel anything, it’s a physical system. Neurons cannot feel anything, they’re just little molecular machines with a Turing machine inside of them”.

CW – I agree with this, to the extent that if there were any such thing as *purely* physical structures, they would not feel anything; they would just be tangible geometric objects in public space. Rather than physical activity somehow leading to emergent non-physical ‘feelings’, it makes more sense to me that physics is made of “feelings” which are so distant and different from our own that they are rendered as tangible geometric objects. It could be that physical structures appear in these limited modes of touch perception, rather than in their own native spectrum of experience, because they are much slower/faster and older than our own.

To say that neurons or brains feel would be, in my view, a category error since feeling is not something that a shape can logically do, just by Occam’s Razor, and if we are being literal, neurons and brains are nothing but three-dimensional shapes. The only powers that a shape could logically have are geometric powers. We know from analyzing our dreams that a feeling can be symbolized as a seemingly solid object or a place, but a purely geometric cell or organ would have no way to access symbols unless consciousness and symbols are assumed in the first place.

If a brain has the power to symbolize things, then we shouldn’t call it physical. The brain does a lot of physical things but if we can’t look into the tissue of the brain and see some physical site of translation from organic chemistry into something else, then we should not assume that such a transduction is physical. The same goes for computation. If we don’t find a logical function that changes algorithms into phenomenal presentations then we should not assume that such a transduction is computational.

(JB) “What do we do if you are a brain that figures out that it would be very useful to know what it is like to be a person? It makes one. It makes a simulation of a person, a simulacrum to be more clear.”

CW – Here also the reasoning seems circular. Useful to know what? “What it is like” doesn’t have to mean anything to a machine or program. To me this is like saying that a self-driving car would find it useful to create a dashboard and pretend that it is driven by a person using that dashboard rather than being driven directly by the algorithms that would be used to produce the dashboard.

(JB) “A simulation basically is isomorphic in the behavior of a person, and that thing is pretending to be a person, it’s a story about a person. You and me are persons, we are selves. We are stories in a movie that the brain is creating.”

CW – I have thought of it that way, but now I think that it makes more sense if we see both the brain and the person as parts of a movie that is branching off from a larger movie. I propose that timescale differentiation is the primary mechanism of this branching, although timescale differentiation is only one sort of perceptual lensing that allows experiences to include and exclude each other.

I think that we might be experiential fragments of an eternal experience, and a brain is a kind of icon that represents part of the story of that fragmentation. The brain is a process made of other processes, which are all experiences that have been perceptually lensed by the senses of touch and sight to appear as tangible and visible shapes.

The brain has no mechanical reason to make movies; it just has to control the behavior of a body so as to repeat behaviors which have happened to coincide with bodies surviving and reproducing. I can think of some good reasons why a universe which is an eternal experience would want to dream up bodies and brains, but once I plug up all of the philosophical leaks of circular reasoning and begging the question, I can think of no plausible reason why an unconscious body or brain would or could dream.

All of the reasons that I have ever heard arise as post hoc justifications that betray an unscientific bias toward mechanism. In a way, the idea of mechanism as omnipotent is even more bizarre than the idea of an omnipotent deity, since the whole point of a mechanistic view of nature is to replace undefined omnipotence with robustly defined, rationally explained parts and powers. If we are just going to say that emergent phenomenal magic happens once the number of shapes or data relations is so large that we don’t want to deny any power to it, we are really just reinventing religious faith in an inverted form. It is to say that sufficiently complex computations transcend computation for reasons that transcend computation.

(JB) “The movie is a complete simulation, a VR that is running in the neocortex.”

CW – We have the experience of playing computer games using a video screen, so we conflate a computer program with a video screen’s ability to render visible shapes. In fact, it is our perceptual relationship with a video screen that is doing the most critical part of the simulating. The computer by itself, without any device that can produce visible color and contrast, would not fool anyone. There’s no parsimonious or plausible way to justify giving the physical states of a computing machine aesthetic qualities unless we are expecting aesthetic qualities from the start. In that case, there is no honest way to call them mere computers.

(JB) “In that character, the brain writes our experiences, so we *feel* what it’s like to be exposed to the reward function. We feel what it’s like to be in our universe.”

CW – Computer programs don’t need desires or rewards, though. Programs are simply executed by physical force. Algorithms don’t need to serve a purpose, nor do they need to be enticed to serve a purpose. There’s no plausible, parsimonious reason for the brain to write its predictive algorithms or meta-algorithms as anything like a ‘feeling’ or sensation. All that is needed for a brain is to store some algorithmically compressed copy of its own brain state history. It wouldn’t need to “feel” or feel “what it’s like”, or feel what it’s like to “be in a universe”. These are all concepts that we’re smuggling in, post hoc, from our personal experience of feeling what it’s like to be in a universe.

(JB) “We don’t feel that we are a story because that is not very useful knowledge to have. Some people figure it out and they depersonalize. They start identifying with the mind itself or lose all identification.”

CW – It’s easy to say that it’s not very useful knowledge if it doesn’t fit our theory, but we need to test for that bias scientifically. It might just be that people depersonalize, or react negatively to the idea that they don’t really exist, because it is false, and false in a way that is profoundly important. We may be as real as anything ever could be, and there may be no ‘simulation’ except via the power of imagination to make believe.

(JB) “The self is the thing that thinks that it remembers the contents of its attention. This is why we are conscious.”

CW – I don’t see a logical need for that. Attention need not logically facilitate any phenomenal properties. Attention can just as easily be purely behavioral, as can ‘memory’, or ‘models’. A mechanism can be triggered by groups of mechanisms acting simultaneously without any kind of semantic link defining one mechanism as a model for something else. Think of it this way: What if we wanted to build an AI without ANY phenomenal experience? We could build a social chameleon machine, a sociopath with no model of self at all, but instead a set of reflex behaviors that mimic those of others which are deemed to be useful for a given social transaction.

(JB) “A physical system cannot be conscious, only a simulation can be conscious.”

CW – I agree this is an improvement over the idea that physical systems are conscious. What would it mean for a ‘simulation’ to exist in the absence of consciousness though? A simulation implies some conscious audience which participates in believing or suspending disbelief in the reality of what is being presented. How would it be possible for a program to simulate part of itself as something other than another (invisible, unconscious) program?

(JB) “Consciousness is a simulated property of a simulated self.”

CW – I turn that around 180 degrees. Consciousness is the sole absolutely authentic property. It is the base level of sanity and sense on which all sense-making depends. The self is the ‘skin in the game’ – the amplification of consciousness via the almost-absolutely realistic presentation of mortality.

KD – So in a way, Daniel Dennett is correct?

JB – Yes, […] but the problem is that the things that he says are not wrong, but they are also not non-obvious. It’s valuable because there are no good or bad ideas. It’s a good idea if you comprehend it and it elevates your current understanding. In a way, ideas come in tiers. An idea is valuable to an audience if it is half a tier above that audience. You and I have the illusion that we find objectively good ideas, because we work at the edge of our own understanding, but we cannot really appreciate ideas that are a couple of tiers above our own ideas. One tier is a new audience; two tiers means that we don’t understand the relevance of these ideas because we don’t have the ideas that we need to appreciate the new ideas. An idea appears to be great to us when we can stand right in its foothills and look at it. It doesn’t look great anymore when we stand on the peak of another idea, look down, and realize the previous idea was just the foothills to that idea.

KD – Discusses the problems with the commercialization of academia and the negative effects it has on philosophy.

JB – Most of us never learn what it really means to understand, largely because our teachers don’t. There are two types of learning. One is that you generalize over past examples, and we call that stereotyping if we’re in a bad mood. The other tells us how to generalize, and this is indoctrination. The problem with indoctrination is that it might break the chain of trust. If someone doesn’t check the epistemology of the people who came before them, and instead takes their word as authority, that’s a big difficulty.

CW – I like the ideas of tiers because it confirms my suspicion that my ideas are two or three tiers above everyone else’s. That’s why y’all don’t get my stuff…I’m too far ahead of where you’re coming from. 🙂

1:07:00 Discussion about Ray Kurzweil, the difficulty in predicting timelines for AI, confidence, evidence, outdated claims and beliefs, etc.

1:19 JB – The first stage of AI: finding things that require intelligence, like playing chess, and then implementing them as algorithms. Manually engineering strategies for being intelligent in different domains. This didn’t scale up to General Intelligence.

We’re now in the second phase of AI, building algorithms to discover algorithms. We build learning systems that approximate functions. He thinks deep learning should be called compositional function approximation. Using networks of many functions instead of tuning single regressions.
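To make the contrast between “tuning single regressions” and “compositional function approximation” a little more concrete, here is a minimal Python sketch. It is an illustration only and does not come from the interview. It assumes a simplified reading of JB’s phrase: a function built by combining simple nonlinear units. The target |x|, the helper names (`fit_line`, `relu`, `composed`), and the hand-set weights are all hypothetical choices. In a real deep learning system the weights would be learned by training rather than picked by hand.

```python
# Toy contrast: one tuned regression vs. a composition of simple functions.
# Assumption: "compositional function approximation" is read here as combining
# simple nonlinear units; the weights below are hand-set, not learned.

def fit_line(xs, ys):
    """Ordinary least-squares fit of y = a*x + b; returns (a, b)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    a = cov / var
    b = mean_y - a * mean_x
    return a, b

def relu(z):
    """A simple building-block function: max(0, z)."""
    return max(0.0, z)

def composed(x):
    """Two ReLU units added together; this represents |x| exactly."""
    return relu(x) + relu(-x)

def mean_squared_error(f, xs, ys):
    """Average squared gap between f(x) and the target values."""
    return sum((f(x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

xs = [i / 10 for i in range(-10, 11)]   # 21 points from -1.0 to 1.0
ys = [abs(x) for x in xs]                # target: a kinked, nonlinear function

a, b = fit_line(xs, ys)
line_error = mean_squared_error(lambda x: a * x + b, xs, ys)
composed_error = mean_squared_error(composed, xs, ys)

print(f"single regression: y = {a:.3f}x + {b:.3f}, MSE = {line_error:.4f}")
print(f"composed units:    MSE = {composed_error:.4f}")
```

On this symmetric data the best straight line comes out essentially flat and sits at the average height, so it misses the kink entirely. The two simple units combined reproduce the target exactly. Stacking and combining many such units is what lets networks approximate far more complicated functions than any single regression can.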

There could be a third phase of AI where we build meta-learning algorithms. Maybe our brains are meta-learning machines, not just learning stuff but learning ways of discovering how to learn stuff (for a new domain). At some point there will be no more phases and science will effectively end because there will be a general theory for global optimization with finite resources and all science will use that algorithm.

CW – I think that the more experience we gain with AI, the more we will see that it is limited in ways that we have not anticipated, and also that it is powerful in ways that we have not anticipated. I think that we will learn that intelligence as we know it cannot be simulated, however, in trying to simulate it, we will have developed something powerful, new, and interesting in its impersonal orthogonality to personal consciousness. The revolution may not be about the rise of computers becoming like people but of a rise in appreciation for the quality and richness of personal conscious experience in contrast to the impersonal services and simulations that AI delivers.

1:23 KD – Where does ethics fit, or does it?

JB – Ethics is often misunderstood. It’s not about being good or emulating a good person. Ethics emerges when you conceptualize the world as different agents, and yourself as one of them, and you share purposes with the other agents but you have conflicts of interest. If you think that you don’t share purposes with the other agents, if you’re just a lone wolf and the others are your prey, there’s no reason for ethics; you only look at the consequences of your actions for yourself with respect to your own reward functions. It’s not ethics, though. It’s not a shared system of negotiation, because only you matter, since you don’t share a purpose with the others.

KD – It’s not shared but it’s your personal ethical framework, isn’t it?

JB – It has to be personal. I decided not to eat meat because I felt that I shared a purpose with animals: the avoidance of suffering. I also realized that it is not mutual. Cows don’t care about my suffering. They don’t think about it a lot. I had to think about the suffering of cows, so I decided to stop eating meat. That was an ethical decision. It’s a decision about how to resolve conflicts of interest under conditions of shared purpose. I think this is what ethics is about. It’s a rational process in which you negotiate, with yourself and with others, the resolution of conflicts of interest under contexts of shared purpose. I can make decisions about what purposes we share. Some of them are sustainable and others are not – they lead to different outcomes. In a sense, ethics requires that you conceptualize yourself as something above the organism, that you identify with the systems of meanings above yourself so that you can share a purpose. Love is the discovery of shared purpose. There needs to be somebody you can love that you can be ethical with. At some level you need to love them. You need to share a purpose with them. Then you negotiate; you don’t want them, or yourself, to fail in all regards. This is what ethics is about. It’s computational, too. Machines can be ethical if they share a purpose with us.

KD – Other considerations: perhaps ethics can be a framework within which two entities that do not share interests can negotiate and peacefully coexist, while still not sharing interests.

JB – Not interests but purposes. If you don’t share purposes then you are defecting against your own interests when you don’t act on your own interest. It doesn’t have integrity. You don’t share a purpose with your food, other than that you want it to be nice and edible. You don’t fall in love with your food, it doesn’t end well.

CW – I see this as a kind of game-theoretic view of ethics…which I think is itself (unintentionally) unethical. I think it is true as far as it goes, but it makes assumptions about reality that are ultimately inaccurate, as they begin by defining reality in the terms of a game. I think this automatically elevates the intellectual function and its objectivizing/controlling agendas at the expense of the aesthetic/empathetic priorities. What if reality is not a game? What if the goal is not to win by being a winner but to improve the quality of experience for everyone and to discover and create new ways of doing that?

Going back to JB’s initial comment that ethics are not about being good or emulating a good person, I’m not sure about that. I suspect that many people, especially children, will be ethically shaped by encounters with someone, perhaps in the family or a character in a movie, who appeals to them and who inspires imitation. Whether their appeal is as a saint or a sinner, something about their style, the way they communicate or demonstrate courage, may align the personal consciousness with transpersonal ‘systems of meanings above’ themselves. It could also be a negative example that someone encounters: someone you hate who inspires you to embody the diametrically opposite aesthetics and ideals.

I don’t think that machines can be ethical or unethical, not because I think humans are special or better than machines, but out of simple parsimony. Machines don’t need ethics. They perform tasks, not for their own purposes, or for any purpose, but because we have used natural forces and properties to perform actions that satisfy our purposes. Try as we might (and I’m not even sure why we would want to try), I do not think that we will succeed in changing matter or computation into something which both can be controlled by us and which can generate its own purposes. I could be wrong, but I think this is a better reason to be skeptical of AI than any reason that computation gives us to be skeptical of consciousness. It also seems to me that the aesthetic power of a special person who exemplifies a particular set of ethics can be taken to be a symptom of a larger, absolute aesthetic power in divinity or in something like absolute truth. This doesn’t seem to fit the model of ethics as a game-theoretic strategy.

JB – Discussion about eating meat. He offers an example of an argument in favor: it could be said that a pasture-raised cow could have a net positive life experience, since it would not exist but for being raised as food. Their lives are good for them except for the last day, which is horrible, but the last day is usually horrible for everyone. Should we change ourselves or change cattle to make the situation more bearable? We don’t want to look at it because it is un-aesthetic. Ethics in a way is difficult.

KD – That’s the key point of ethics. It sometimes requires that we make choices that are perhaps not in our own best interests.

JB – It depends on how we define ourselves. We could say that the self is identical to the well-being of the organism, but this is a very short-sighted perspective. I don’t actually identify all the way with my organism. There are other things – I identify with society, my kids, my relationships, my friends, their well-being. I am all the things that I identify with and want to regulate in a particular way. My children are objectively more important than me. If I have to make a choice whether my kids survive or myself, my kids should survive. This is as it should be if nature has wired me up correctly. You can change the wiring, but this is also the weird thing about ethics. Ethics becomes very tricky to discuss once the reward function becomes mutable. When you are able to change what is important to you, what you care about, how do you define ethics?

CW – And yet, the reward function is mutable in many ways. Our experience in growing up seems to be marked by a changing appreciation for different kinds of things, even in deriving reward from controlling one’s own appetite for reward. The only constant that I see is in phenomenal experience itself. No matter how hedonistic or ascetic, how eternalist or existential, reward is defined by an expectation for a desired experience. If there is no experience that is promised, then there is no function for the concept of reward. Even in acts of self-sacrifice, we imagine that our action is justified by some improved experience for those who will survive after us.

KD – I think you can call it a code of conduct or a set of principles and rules that guide my behavior to accomplish certain kinds of outcomes.

JB – There are no beliefs without priors. What are the priors that you base your code of conduct on?

KD – The priors or axioms are things like diminishing suffering or taking an outside/universal view. When it comes to (me not eating meat), I take a view that is hopefully outside of me and the cows. I’m able to look at the suffering of eating a cow and their suffering of being eaten. If my prior is ‘minimize suffering’, because my test criterion of a sentient being is ‘can it suffer?’, then minimizing suffering must be my guiding principle in how I relate to another entity. Basically, everything builds up from there.

JB – The most important part of becoming an adult is taking charge of your own emotions – realizing that your emotions are generated by your own brain/organism, and that they are here to serve you. You’re not here to serve your emotions. They are here to help you do the things that you consider to be the right things. That means that you need to be able to control them, to have integrity. If you are just a victim of your emotions, and don’t do the things that you know are the right things, you don’t have integrity. What is suffering? Pain is the result of some part of your brain sending a teaching signal to another part of your brain to improve its performance. If the regulation is not correct, because you cannot actually regulate that particular thing, the pain signal will usually endure and increase until your brain figures it out and turns off the brain signaling center, because it’s not helping. In a sense suffering is a lack of integrity. The difficulty is only that many beings cannot reach the degree of integrity at which they can control the application of learning signals in their brain…control the way that their reward function is computed and distributed.

CW – My criticism is the same as in the other examples. There’s no logical need for a program or machine to invent ‘pain’ or any other signal to train or teach. If there is a program to run an animal’s body, the program need only execute those functions which meet the criteria of the program. There’s no way for a machine to be punished or rewarded because there’s no reason for it to care about what it is doing. If anything, caring would impede optimal function. If a brain doesn’t need to feel to learn, then why would a brain’s simulation need to feel to learn?

KD – According to your view, suffering is a simulation or part of a simulation.

JB – Everything that we experience is a simulation. We are a simulation. To us it feels real. There is no getting around this. I have learned in my life that all of my suffering is a result of not being awake. Once I wake up, I realize what’s going on. I realize that I am a mind. The relevance of the signals that I perceive is completely up to the mind. The universe does not give me objectively good or bad things. The universe gives me a bunch of electrical impulses that manifest in my thalamus, and my brain makes sense of them by creating a simulated world. The valence in that simulated world is completely internal – it’s completely part of that world, it’s not objective…and I can control this.

KD – So you are saying suffering is subjective?

JB – Suffering is real to the self with respect to ethics, but it is not immutable. You can change the definition of your self, the things that you identify with. We don’t have to suffer about things, political situations for example, if we recognize them to be mechanical processes that happen regardless of how we feel about them.

CW – The problem with the idea of simulation is that we are picking and choosing which features of our experience are more isomorphic to what we assume is an unsimulated reality. Such an assumption is invariably a product of our biases. If we say that the world we experience is a simulation running on a brain, why not also say that the brain is also a simulation running on something else? Why not say that our experiences of success with manipulating our own experience of suffering are as much of a simulation as the original suffering was? At some point, something has to genuinely sense something. We should not assume that just because our perception can be manipulated we have used manipulation to escape from perception. We may perceive that we have escaped one level of perception, or objectified it, but this too must be presumed to be part of the simulation as well. Perception can only seem to have been escaped in another perception. The primacy of experience is always conserved.

I think that it is the intellect that is over-valuing the significance of ‘real’ because of its role in protecting the ego and the physical body from harm, but outside of this evolutionary warping, there is no reason to suspect that the universe distinguishes in an absolute sense between ‘real’ and ‘unreal’. There are presentations – sights, sounds, thoughts, feelings, objects, concepts, etc. – but the realism of those presentations can only be made of the same types of perceptions. We see this in dreams, with false awakenings etc. Our dream has no problem with spontaneously confabulating experiences of waking up into ‘reality’. This is not to discount the authenticity of waking up in ‘actual reality’, only to say that if we can tell that it is authentic, then it necessarily means that our experience is not detached from reality completely and is not meaningfully described as a simulation. There are some recent studies that suggest that our perception may be much closer to ‘reality’ than we thought, i.e. that we can train ourselves to perceive quantum-level changes.

If that holds up, we need to re-think the idea that it would make sense for a bio-computer to model or simulate a phenomenal reality that is so isomorphic and redundant to the unperceived reality. There’s not much point in a 1 to 1 scale model. Why not just put the visible photons inside the visual cortex in exactly the field that we see? I think that something else is going on. There may not be a simulation, only a perceptual lensing between many different concurrent layers of experience – not a dualism or dual-aspect monism, but a variable aspect monism. We happen to be a very, very complex experience which includes the capacity to perceive aspects of its own perception in an indirect or involuted rendering.

KD – Stoic philosophy says that we suffer not from events or things that happen in our lives, but from the stories that we attach to them. If you change the story, you can change the way you feel about them and reduce suffering. Let go of things we can’t really control, body, health, etc. The only thing you can completely control is your thoughts. That’s where your freedom and power come to be. In that mind, in that simulation, you’re the God.

JB – This ability to make your thoughts more truthful is, in a way, Western enlightenment: aufklärung in German. There is also this other sense of enlightenment, erleuchtung, that you have in a spiritual context. So aufklärung fixes your rationality and erleuchtung fixes your motivation. It fixes what’s relevant to you and your relationship between self and the universe. Often they are seen as mutually exclusive, in the sense that aufklärung leads to nihilism, because you don’t give up your need for meaning; you just prove that it cannot be satisfied. God does not exist in any way that can set you free. In this other sense, you give up your understanding of how the world actually works so that you can be happy. You go down to a state where all people share the same cosmic consciousness, which is complete bullshit, right? But it’s something that removes the illusion of separation and the suffering that comes with the separation. It’s unsustainable.

CW – This duality of aufklärung and erleuchtung I see as another expression of the polarity of the universal continuum of consciousness. Consciousness vs machine, East vs West, Wisdom vs Intelligence. I see both extremes as having pathological tendencies. The Western extreme is cynical, nihilistic, and rigid. The Eastern extreme is naïve, impractical, and delusional. Cosmic consciousness or God does not have to be complete bullshit, but it can be a hint of ways to align ourselves and bring about more positive future experiences, both personally and/or transpersonally.

Basically, I think that both the brain and the dreamer of the brain are themselves part of a larger dream that may or may not be like a dreamer. It may be that these possibilities are in participatory superposition, like an ambiguous image, so that what we choose to invest our attention in can actually bias experienced outcomes toward a teleological or non-teleological absolute. Maybe our efforts could also result in the opposite effect, or some combination of the two. If the universe consists of dreams and dreamed dreamers, then it is possible for our personal experience to include a destiny where we believe one thing about the final dream and find out we were wrong, or right, or wrong then right then wrong again, etc. forever.

KD – Where does that leave us with respect to ethics though? Did you dismantle my ethics, the suffering test?

JB – Yeah, it’s not good. The ethic of eliminating suffering leads us to eliminating all life eventually. Anti-natalism – stop bringing organisms into the world to eliminate suffering, end the lives of those organisms that are already here as painlessly as possible, is this what you want?

KD – (No) So what’s your ethics?

JB – Existence is basically neutral. Why are there so few Stoics around? It seems so obvious – only worry about things to the extent that worrying helps you change them…so why is almost nobody a Stoic?

KD – There are some Stoics and they are very inspirational.

JB – I suspect that Stoicism is maladaptive. Most cats I have known are Stoics. If you leave them alone, they're fine. Their baseline state is ok, they are ok with themselves and their place in the universe, and they just stay in that place. If they are hungry or want to play, they will do the minimum that they have to do to get back into their equilibrium. Human beings are different. When they get up in the morning they're not completely fine. They need to be busy during the day, but in the evening they feel fine. In the evening they have done enough to make peace with their existence again. They can have a beer and be with their friends and everything is good. Then there are some individuals who have so much discontent within themselves that they can't take care of it in a single day. From an evolutionary perspective, you can see how this would be adaptive for a group-oriented species. Cats are not group-oriented. For them, it's rational to be a Stoic. If you are a group animal, it makes sense for individuals to overextend themselves for the good of the group – to generate a surplus of resources for the group.

CW – I don’t know if we can generalize about humans that way. Some people are more like cats. I will say that I think it is possible to become attached to non-attachment. The stoic may learn to disassociate from the suffering of life, but this too can become a crutch or ‘spiritual bypass’.

KD – But evolution also diversifies things. Evolution hedges its bets by creating diversity, so some individuals will be more adaptive to some situations than others.

JB – That may not be true. We don't find more species in larger habitats; competition there is more fierce. We reduce the number of species dramatically. We are probably eventually going to look like a meteor as far as obliterating species on this planet.

KD – So what does that mean for ethics in technology? What’s the solution? Is there room for ethics in technology?

JB – Of course. It’s about discovering the long game. You have to look at the long term influences and you also have to question why you think it’s the right thing to do, what the results of that are, which gets tricky.

CW – I think that all that we can do is to experiment and be open to the possibilities that our experiments themselves may be right or wrong. There may be no way of letting ourselves off the hook here. We have to play the game as players with skin in the game, not as safe observers studying only those rules that we have invested in already.

KD – We can agree on that, but how do you define ethics yourself?

JB – There are some people in AI who think that ethics are a way for politically savvy people to get power over STEM people…and with considerable success. It’s largely a protection racket. Ethical studies are relatable and so make a big splash, but it would rarely happen that a self-driving car would have to make those decisions. My best answer of how I define ethics myself is that it is the principled negotiation of conflicts of interest under conditions of shared purpose. When I look at other people, I mostly imagine myself as being them in a different timeline. Everyone is in a way me on a different timeline, but in order to understand them I need to flip a number of bits. These bits are the conditions of negotiation that I have with you.

KD – Where do cows fit in? We don't have a shared purpose with them. Can you have shared purpose with respect to the cows then?

JB – The shared purpose doesn’t objectively exist. You basically project a shared meaning above the level of the ego. The ego is the function that integrates expected rewards over the next fifty years.

KD – That’s what Peter Singer calls the Universe point of view, perhaps.

JB – If you can go to this Eternalist perspective where you integrate expected reward from here to infinity, most of that being outside of the universe, this leads to very weird things. Most of my friends are Eternalists. All these Romantic Russian Jews, they are like that, in a way. This Eastern European shape of the soul. It creates something like a conspiracy, it creates a tribe, and it's very useful for corporations. Shared meaning is a very important thing for a corporation that is not transactional. But there is a certain kind of illusion in it. To me, meaning is like the Ring of Mordor. If you drop the ring, you will lose the brotherhood of the ring and you will lose your mission. You have to carry it, but very lightly. If you put it on, you will get super powers but you get corrupted because there is no meaning. You get drawn into a cult that you create…and I don't want to do that…because it's going to shackle my mind in ways that I don't want it to be bound.

CW – I agree it is important not to get drawn into a cult that we create, however, what I have found is that the drive to negate superstition tends toward its own cult of ‘substitution’. Rather than the universe being a divine conspiracy, the physical universe is completely innocent of any deception, except somehow for our conscious experience, which is completely deceptive, even to the point of pretending to exist. How can there be a thing which is so unreal that it is not even a thing, and yet come from a universe that is completely real and only does real things?

KD – I really like that way of seeing but I’m trying to extrapolate from your definition of ethics a guide of how we can treat the cows and hopefully how the AIs can treat us.

JB – I think that some people have this idea, similar to Asimov's, that at some point the Roombas will become larger and more powerful so that we can make them washing machines, or let them do our shopping, or nursing…that we will still enslave them but negotiate conditions of co-existence. I think that what is going to happen instead is that corporations, which are already intelligent agents that just happen to borrow human intelligence, will automate their decision making. At the moment, a human being can often outsmart a corporation, because the corporation has so much time in between updating its Excel spreadsheets and the next weekly meeting. Imagine it automates, and weekly meetings take place every millisecond, and the thing becomes sentient and understands its role in the world, and the nature of physics and everything else. We will not be able to outsmart that anymore, and we will not live next to it, we will live inside of it. AI will come down on us from the top. We will be its gut flora. The question is how we can negotiate that it doesn't get the idea to use antibiotics, because we're actually not good for anything.

KD – Exactly. And why wouldn’t they do that?

JB – I don’t see why.

CW – The other possibility is that AI will not develop its own agendas or true intelligence. That doesn’t mean our AI won’t be dangerous, I just suspect that the danger will come from our misinterpreting the authority of a simulated intelligence rather than from a genuine mechanical sentience.

KD – Is there an ethics that could guide them to treat us just like you decided to treat the cows when you decided not to eat meat?

JB – There's probably no way to guarantee that all AIs would treat us kindly. If we used the axiom of reducing suffering to build an AI that will be around for 10,000 years and keep us around too, it will probably kill 90% of the people painlessly and breed the rest into some kind of harmless yeast. This is not what you want, even though it would be consistent with your stated axioms. It would also open a Pandora's Box to wake up as many people as possible so that they will be able to learn how to stop their suffering.

KD – Wrapping up

JB – [Discusses the book he's writing about how AI has discovered ways of understanding the self and consciousness that we did not have 100 years ago: the nature of meaning, how we actually work, etc.] The field of AI is largely misunderstood. It is different from the hype; it is largely, in a way, statistics on steroids. It's identifying new functions to model reality. It's largely experimental and has not gotten to the state where it can offer proofs of optimality. It can do things in ways that are much better than the established rules of statisticians. There is also going to be a convergence between econometrics, causal dependency analysis, AI, and statistics. It's all going to be the same in a particular way, because there are only so many ways that you can make mathematics about reality. We confuse this with the idea of what a mind is. They're closely related. I think that our brain contains an AI that is making a model of reality and a model of a person in reality, and this particular solution of what a particular AI can do in the modeling space is what we are. So in a way we need to understand the nature of AI, which I think is the nature of sufficiently general function approximation, maybe all the truth that can be found by an embedded observer, in particular kinds of universes that have the power to create it. This could be the question of what AI is about: how modeling works in general. For us, the relevance of AI is how it explains who we are. I don't think there is anything else that can.

CW – I agree that AI development is the next necessary step to understanding ourselves, but I think that we will be surprised to find that General Intelligence cannot be simulated and that this will lead us to ask the deeper questions about authenticity and irreducibly aesthetic properties.

KD – So by creating AI, we can perhaps understand the AI that is already in our brain.

JB – We already do. The ideas of Minsky and many others who have contributed to this field are already better than anything that we had 200 years ago. We could only develop many of these ideas because we began to understand the nature of modeling – the status of reality.

The nature of our relationship to the outside world. We started out with this dualistic intuition in our culture, that there is a thinking substance (Res Cogitans) and an extended substance (Res Extensa)…a universe of stuff in space and a universe of ideas. We now realize that they both exist, but they both exist within the mind. We understand that everything perceptual gets mapped to a region in three-space, but we also understand that physics is not a three-space, it's something else entirely. The three-space exists only as a potential of electromagnetic interactions at a certain order of magnitude above the Planck length where we are entangled with the universe. This is what we model, and this looks three-dimensional to us.

CW – I am sympathetic to this view, however, I suggest an entirely different possibility. Rather than invoking a dualism of existing in the universe and existing ‘in the mind’, I see that existence itself is an irreducibly perceptual-participatory phenomenon. Our sense of dualism may actually reveal more insights into our deeper reality than those insights which assume that tangible objects and information exist beyond all perception. The more we understand about things like quantum contextuality and relativity, I think the more we have to let go of the compulsion to label things that are inconvenient to explain as illusions. I see Res Cogitans and Res Extensa as opposite poles of a Res Aesthetica continuum which is absolute and eternal. It is through the modulation of aesthetic lensing that the continuum is diffracted into various modalities of sense experience. The cogitans of software and the extensa of hardware can never meet except through the mid-range spectrum of perception. It is from that fertile center, I suspect, that most of the novelty and richness of the universe is generated, not from sterile algorithms or game-theoretic statistics on the continuum’s lensed peripheries.

JB – Everything else we come up with that cannot be mapped to three-space is Res Cogitans. If we transfer this dualism into a single mind then we have the idealistic monism that we have in various spiritual teachings – this idea that there is no physical reality, that we live in a dream. We are characters dreamed by a mind on a higher plane of existence and that's why miracles are possible. Then there is this Western perspective of a mechanical universe. It's entirely mechanical, there's no conspiracy going on. Now we understand that these things are not in opposition, they're complements. We actually do live in a dream but the dream is generated by our neocortex. Our brain is not a machine that can give us access to reality as it is, because that's not possible for a system that is only measuring a few bits at a systemic interface. There are no colors and sounds on Earth. We already know that.

CW – Why stop at colors and sounds though? How can we arbitrarily say that there is an Earth or a brain when we know that it is only a world simulated by some kind of code. If we unravel ourselves into evolution, why not keep going and unravel evolution as well? Maybe colors and sounds are a more insightful and true reflection of what nature is made of than the blind measurements that we take second hand through physical instruments? It seems clear to me that this is a bias which has not yet properly appreciated the hints of relativity and quantum contextuality. If we say that physics has no frame of reference, then we have to understand that we may be making up an artificial frame of reference that seems to us like no frame of reference. If we live in a dream, then so does the neocortex. Maybe they are different dreams, but there is no sound scientific reason to privilege every dream in the universe except our own as real.

JB – The sounds and colors are generated as a dream inside your brain. The same circuits that make dreams during the night make dreams during the day. This is, in a way, our inner reality that's being created in a brain. The mind on a higher plane of existence exists: it's the brain of a primate that's made of cells and lives in a mechanical physical universe. Magic is possible because you can edit your memories. You can make that simulation anything that you want it to be. Many of these changes are not sustainable, which is why the sages warn against using magic(k): if, down the line, you change your reward function, bad things may happen. You cannot break the bank.

KD – To simplify all of this, we need to understand the nature of AI to understand ourselves.

JB – Yeah, well, I would say that AI is the field that took up the slack after psychology failed as a science. Psychology got terrified of overfitting, so it stopped making theories of the mind as a whole, it restricted itself to theories with very few free parameters so it could test them. Even those didn’t replicate, as we know now. After Piaget, psychology largely didn’t go anywhere, in my perspective. It might be too harsh because I see it from the outside, and outsiders of AI might argue that AI didn’t go very far, and as an insider I’m more partial here.

CW – It seems to me that psychology ran up against a barrier that is analogous to Gödel's incompleteness. To go on trying to objectify subjectivity necessarily brings into question the tools of formalism themselves. I think that transpersonal psychology may have come too far too fast, and that there is still more to be done for the rest of our scientific establishment to catch up. Popular society is literally not yet sane enough to handle a deep understanding of sanity.

KD – I have this metaphor that I use every once in a while, saying that technology is a magnifying mirror. It doesn’t have an essence of its own but it reflects the essences that we put in it. It’s not a perfect image because it magnifies and amplifies things. That seems to go well with the idea that we have to understand the nature of AI to understand who we are.

JB – The practice of AI is 90% automation of statistics and making better statistics that run automatically on machines. It just so happens that this is largely co-extensional with what minds do. It also so happens that AI was founded by people like Minsky who had fundamental questions about reality.

KD – And what’s the last 10%?

JB – The rest is people coming up with dreams about our relationship to reality, using the concepts that we develop in AI. We identify models that we can apply in other fields. It's the deeper insights. It's why we do it – to understand. It's to make philosophy better. Society still needs a few of us to think about the deep questions, and we are still here, and the coffee is good.

CW – Thanks for taking the time to put out quality discussions like this. I agree that technology is a neutral reflector/magnifier of what we put into it, but I think that part of what we have to confront as individuals and as a society is that neutrality may not be enough. We may now have to decide whether we will make a stand for authentic feeling and significance or rely on technology which does not feel or understand significance to make that decision for us.

Joscha Bach: We need to understand the nature of AI to understand who we are

This is a great, two hour interview between Joscha Bach and Nikola Danaylov (aka Socrates): https://www.singularityweblog.com/joscha-bach/

Below is a partial (and paraphrased) transcription of the first hour, interspersed with my comments. I intend to do the second hour soon.

00:00 – 10:00 Personal background & Introduction

Please watch or listen to the podcast as there is a lot that is omitted here. I’m focusing on only the parts of the conversation which are directly related to what I want to talk about.

6:08 Joscha Bach – Our null hypothesis from Western philosophy still seems to be supernatural beings, dualism, etc. This is why many reject AI as ridiculous and unlikely – not because they don’t see that we are biological computers and that the universe is probably mechanical (mechanical theory gives good predictions), but because deep down we still have the null hypothesis that the universe is somehow supernatural and we are the most supernatural things in it. Science has been pushing back, but in this area we have not accepted it yet.

6:56 Nikola Danaylov – Are we machines/algorithms?

JB – Organisms have algorithms and are definitely machines. An algorithm is a set of rules that can be probabilistic or deterministic, and that make it possible to change representational states in order to compute a function. A machine is a system that can change states in non-random ways, and also revisit earlier states (stay in a particular state space, potentially making it a system). A system can be described by drawing a fence around its state space.
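To make these definitions a little more concrete, here is a minimal Python sketch. It is an illustration under my own assumptions, not Bach's formalism: the function name `run_until_revisit`, the use of a finite set as the "fence" around the state space, and the example transition rules are all hypothetical. A deterministic rule changes states in non-random ways, the loop reports when an earlier state is revisited, and a step that leaves the fenced set raises an error.

```python
from typing import AbstractSet, Callable, Hashable, List, Tuple


def run_until_revisit(
    start: Hashable,
    step: Callable[[Hashable], Hashable],
    state_space: AbstractSet[Hashable],
    max_steps: int = 1000,
) -> Tuple[List[Hashable], int]:
    """Apply a deterministic transition rule until some state repeats.

    Returns the trajectory of distinct states visited and the index in that
    trajectory where the revisited state first appeared (the start of the loop).
    Raises ValueError if a step leaves the fenced state space.
    """
    first_seen = {}  # state -> index in the trajectory where it first occurred
    trajectory: List[Hashable] = []
    state = start
    for i in range(max_steps):
        if state not in state_space:
            raise ValueError(f"state {state!r} is outside the fenced state space")
        if state in first_seen:
            # An earlier state has been revisited: the machine is cycling.
            return trajectory, first_seen[state]
        first_seen[state] = i
        trajectory.append(state)
        state = step(state)
    raise RuntimeError("no state was revisited within max_steps")


# Hypothetical example 1: a counter modulo 4 cycles back to its start.
print(run_until_revisit(0, lambda s: (s + 1) % 4, frozenset(range(4))))
# ([0, 1, 2, 3], 0)

# Hypothetical example 2: a lookup-table rule whose loop does not include the start.
table = {0: 1, 1: 2, 2: 1}
print(run_until_revisit(0, table.__getitem__, frozenset(table)))
# ([0, 1, 2], 1)
```

In this toy reading, the fence is just the set passed as `state_space`; a rule that wanders outside it would no longer describe the same system.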

CW – We should keep in mind that computer science itself begins with a set of assumptions which are abstract and rational (representational ‘states’, ‘compute’, ‘function’) rather than concrete and empirical. What is required for a ‘state’ to exist? What is the minimum essential property that could allow states to be ‘represented’ as other states? How does presentation work in the first place? Can either presentation or representation exist without some super-physical capacity for sense and sense-making? I don’t think that it can.

This becomes important as we scale up from the elemental level to AI: if we have already assumed that an electrical charge or mechanical motion carries a capacity for sense and sense-making, we are committing the fallacy of begging the question if we carry that assumption over to complex mechanical systems. If we don't assume any sensing or sense-making on the elemental level, then we have the hard problem of consciousness…an explanatory gap between complex objects moving blindly in public space and aesthetically and semantically rendered phenomenal experiences.

I think that if we are going to meaningfully refer to ‘states’ as physical, then we should err on the conservative side and think only in terms of those uncontroversially physical properties such as location, size, shape, and motion. Even concepts such as charge, mass, force, and field can be reduced to variations in the way that objects or particles move.

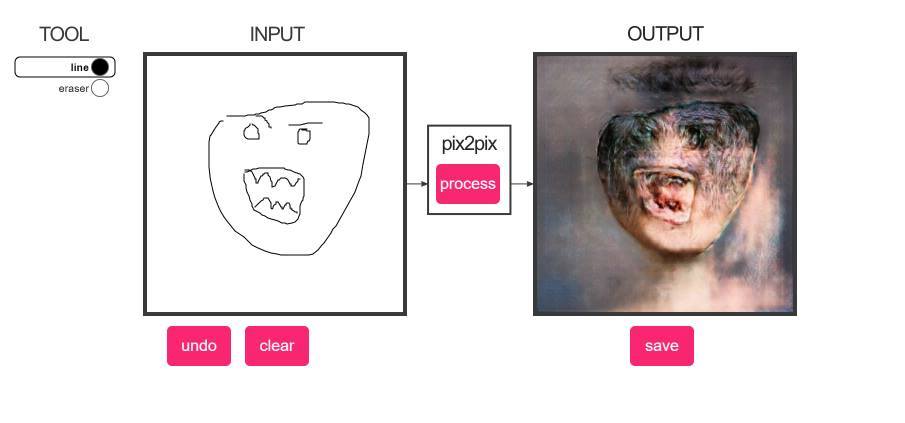

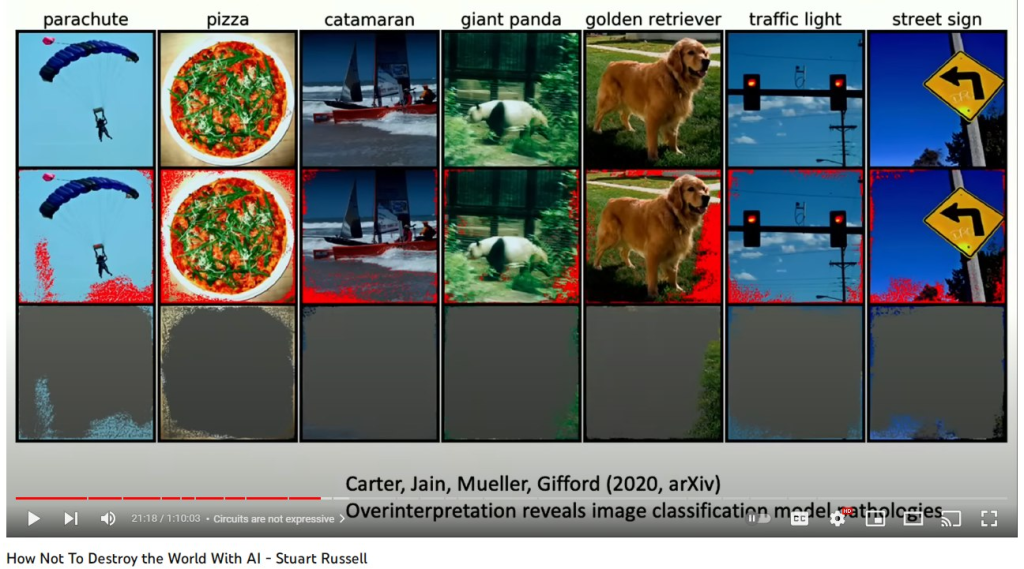

Representation, however, is semiotic. It requires some kind of abstract conceptual link between two states (abstract/intangible or concrete/tangible) which is consciously used as a ‘sign’ or ‘signal’ to re-present the other. This conceptual link cannot be concrete or tangible. Physical structures can be linked to one another, but that link has to be physical, not representational. For one physical shape or substance to influence another they have to be causally engaged by proximity or entanglement. If we assume that a structure is able to carry semantic information such as ‘models’ or purposes, we can’t call that structure ‘physical’ without making an unscientific assumption. In a purely physical or mechanical world, any representation would be redundant and implausible by Occam’s Razor. A self-driving car wouldn’t need a dashboard. I call this the “Hard Problem of Signaling”. There is an explanatory gap between probabilistic/deterministic state changes and the application of any semantic significance to them or their relation. Semantics are only usable if a system can be overridden by something like awareness and intention. Without that, there need not be any decoding of physical events into signs or meanings, the physical events themselves are doing all that is required.

10:00 – 20:00

JB – [Talking about art and life], "The arts are the cuckoo child of life." Life is about evolution, which is about eating and getting eaten by monsters. If evolution reaches its global optimum, it will be the perfect devourer, able to digest anything and turn it into a structure to perpetuate itself, as long as the local puddle of negentropy is available. Fascism is a mode of organization of society where the individual is a cell in a super-organism, and the value of the individual is exactly its contribution to the super-organism. When the contribution is negative, then the super-organism kills it. It's a competition against other super-organisms that is totally brutal. [He doesn't like Fascism because it's going to kill a lot of minds he likes :)].

12:46 – 14:12 JB – The arts are slightly different. They are a mutation that is arguably not completely adaptive. People fall in love with their mental representation/modeling function and try to capture their conscious state for its own sake. An artist eats to make art. A normal person makes art to eat. Scientists can be like artists also in that way. For a brief moment in the universe there are planetary surfaces and negentropy gradients that allow for the creation of structure and some brief flashes of consciousness in the vast darkness. In these brief flashes of consciousness it can reflect the universe and maybe even figure out what it is. It’s the only chance that we have.

CW – If nature were purely mechanical, and conscious states are purely statistical hierarchies, why would any such process fall in love with itself?

JB – [Mentions global warming and how we may have been locked into this doomed trajectory since the industrial revolution. Talks about the problems of academic philosophy where practical concerns of having a career constrict the opportunities to contribute to philosophy except in a nearly insignificant way].

KD – How do you define philosophy?

CW – I thought of nature this way for many years, but I eventually became curious about a different hypothesis. Suppose we invert the foreground/background relationship of conscious experience and existence that we assume. While silicon atoms and galaxies don't seem conscious to us, the way that our consciousness renders them may reflect, more than anything, their unfamiliarity and distance from our own scale of perception. Even just speeding up or slowing down these material structures would make their status as unconscious or non-living a bit more questionable. If a person's body grew in a geological timescale rather than a zoological timescale, we might have a hard time seeing them as alive or conscious.

Rather than presuming a uniform, universal timescale for all events, it is possible that time is a quality which does not exist only as an experienced relation between experiences, and which contracts and dilates relative to the quality of that experience and the relation between all experiences. We get a hint of this possibility when we notice that time seems to crawl or fly by in relation to our level of enjoyment of that time. Five seconds of hard exercise can seem like several minutes of normal-baseline experience, while two hours in good conversation can seem to slip away in a matter of 30 baseline minutes. Dreams give us another glimpse into timescale relativity, as some dreams can be experienced as going on for an arbitrarily long time, complete with long-term memories that appear to have been spontaneously confabulated upon waking.

When we assume a uniform universal timescale, we may be cheating ourselves out of our own significance. It’s like a political map of the United States, where geographically it appears that almost the entire country votes ‘red’. We have to distort the geography of the map to honor the significance of population density, and when we do, the picture is much more balanced.

The universe of course is unimaginably vast and ancient *in our frame and rate of perception* but that does not mean that this sense of vastness of scale and duration would be conserved in the absence of frames of perception that are much smaller and briefer by comparison. It may be that the entire first five billion (human) years were a perceived event that is comparable to one of our years in its own (native) frame. There were no tiny creatures living on the surfaces of planets to define the stars as moving slowly, so that period of time, if it was rendered aesthetically at all, may have been rendered as something more like music or emotions than visible objects in space.

Carrying this over to the art vs evolution context, when we adjust the geographic map of cosmological time, the entire universe becomes an experience with varying degrees and qualities of awareness. Rather than vast eons of boring patterns, there would be more of a balance between novelty and repetition. It may be that the grand thesis of the universe is art instead of mechanism, but it may use a modulation between the thesis (art) and antithesis (mechanism) to achieve a phenomenon which is perpetually hungry for itself. The fascist dinosaurs don’t always win. Sometimes the furry mammals inherit the Earth. I don’t think we can rule out the idea that nature is art, even though it is a challenging masterpiece of art which masks and inverts its artistic nature for contrasting effects. It may be the case that our lifespans put our experience closer to the mechanistic grain of the canvas and that seeing the significance of the totality would require a much longer window of perception.

There are empirical hints within our own experience which can help us understand why consciousness rather than mechanism is the absolute thesis. For example, while brightness and darkness are superficially seen as opposites, they are both visible sights. There is no darkness but an interruption of sight/brightness. There is no silence but a period of hearing between sounds. No nothingness but a localized absence of somethings. In this model of nature, there would be a background super-thesis which is not a pre-big-bang nothingness, but rather closer to the opposite; a boundaryless totality of experience which fractures and reunites itself in ever more complex ways. Like the growth of a brain from a single cell, the universal experience seems to generate more using themes of dialectic modulation of aesthetic qualities.

Astrophysics appears as the first antithesis to the super-thesis – a radically diminished palette of mathematical geometries and deterministic/probabilistic transactions.

Geochemistry recapitulates and opposes astrophysics, with its palette of solids, liquids, gas, metallic conductors and glass-like insulators, animating geometry into fluid-dynamic condensations and sedimented worlds.

The next layer, the biogenetic realm, precipitates as a synthesis of the dialectic between the properties of solids, liquids, and gas: hydrocarbons and amino polypeptides.

Cells appear as a kind of recapitulation of the big bang – something that is not just a story about the universe, but about a micro-universe struggling in opposition to a surrounding universe.

Multi-cellular organisms sort of turn the cell topology inside out, and then vertebrates recapitulate one kind of marine organism within a bony, muscular, hair-skinned terrestrial organism.

The human experience recapitulates all of the previous/concurrent levels, as both a zoological>biological>organic>geochemical>astrophysical structure and the subjective antithesis…a fugue of intangible feelings, thoughts, sensations, memories, ideas, hopes, dreams, etc., that run orthogonal to the life of the body, as a direct participant as well as a detached observer. There are many metaphors from mystical traditions that hint at this self-similar, dialectic diffraction: the mandala, the labyrinth, the Kabbalistic concept of tzimtzum, the Taijitu symbol, Net of Indra, etc. The use of stained glass in the great European cathedral windows is particularly rich symbolically, as it uses the physical matter of the window as an explicitly negative filter, subtracting from or masking the unity of sunlight.

This is in direct opposition to the mechanistic view of the brain as a collection of cells that somehow generate hallucinatory models or simulations of unexperienced physical states. There are serious problems with this view: the binding problem, the hard problem, and Loschmidt's paradox (how ever-increasing entropy can arise from time-reversible physical laws, which seems to require an unexplained initial negentropy in a thermodynamically closed universe), to name three. In the diffractive-experiential view that I suggest, it is emptiness and isolation which are like the leaded boundaries between the colored panes of glass of the Rose Window. Appearances of entropy and nothingness become the locally useful antithesis to the super-thesis holos, which is the absolute fullness of experience and novelty. Our human subjectivity is only one complex example of how experience is braided and looped within itself…a kind of turducken of dialectically diffracted experiential labyrinths nested within each other – not just spatially and temporally, but qualitatively and aesthetically.

If I am modeling Joscha’s view correctly, he might say that this model is simply a kind of psychological test pattern – a way that the simulation that we experience as ourselves exposes its early architecture to itself. He might say this is a feature/bug of my Russian-Jewish mind ;). To that I say: perhaps, but there are some hints that it may be more universal:

Special Relativity

Quantum Mechanics

Gödel’s Incompleteness

These have revolutionized our picture of the world precisely because they point to a fundamental nature of matter and math as plastic and participatory…transformative as well as formal. Add to that the appearance of novelty…idiopathic presentations of color and pattern, human personhood, historical zeitgeists, food, music, etc. The universe is not merely regurgitating its own noise in ever more tedious ways, it is constantly reinventing reinvention. As nothingness can only be a gap between somethings, so too can generic, repeating pattern variations only be a multiplication of utterly novel and unique patterns. The universe must be creative and utterly improbable before it can become deterministic and probabilistic. It must be something that creates rules before it can follow them.

Joscha’s existential pessimism may be true locally, but that may be a necessary appearance; a kind of gravitational fee that all experiences have to pay to support the magnificence of the totality.

20:00 – 30:00

JB – Philosophy is, in a way, the search for the global optimum of the modeling function. Epistemology – what can be known, what is truth; Ontology – what is the stuff that exists; Metaphysics – the systems that we have to describe things; Ethics – what should we do? The first rule of rational epistemology was discovered by Francis Bacon in 1620: “The strength of your confidence in your belief must equal the weight of the evidence in support of it.” You must apply that recursively, until you resolve the priors of every belief and your belief system becomes self-contained. To believe stops being a verb. There are no more relationships to identifications that you arbitrarily set. It’s a mathematical, axiomatic system. Mathematics is the basis of all languages, not just the natural languages.

CW – Re: Language, what about imitation and gesture? They don’t seem meaningfully mathematical.

JB – Hilbert stumbled on problems with infinities, with set theory revealing infinite sets that contain themselves and all of their subsets, so that they don’t have the same number of members as themselves. He asked mathematicians to build an interpreter or computer, made out of mathematics, that can run all of mathematics. Gödel and Turing showed that this was not possible, and that the computer would crash. Mathematics is still reeling from this shock. They figured out that all universal computers have the same power. They use a set of rules that contains itself and can compute anything that can be computed, including emulating any other universal computer.

They then figured out that our minds are probably in the class of universal computers, not in the class of mathematical systems. Penrose doesn’t know [or agree with?] this and thinks that our minds are mathematical but can do things that computers cannot do. The big hypothesis of AI, in a way, is that we are in the class of systems that can approximate computable functions, and only those…we cannot do more than computers. We need computational languages rather than mathematical languages, because mathematical languages use non-computable infinities. For practical reasons we want finite steps, so that we know the number of steps. You cannot know the last digit of Pi, so Pi should be defined as a function rather than a number.
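A minimal sketch of what treating Pi "as a function rather than a number" can look like. This is an illustration added here, not something shown in the interview: it swaps in Jeremy Gibbons' unbounded spigot algorithm, which yields one decimal digit of Pi at a time using only exact integer arithmetic. Any finite prefix is reached in finitely many steps, while the generator itself never arrives at a "last digit". The function names `pi_digits` and `pi_prefix` are hypothetical.

```python
from itertools import islice
from typing import Iterator


def pi_digits() -> Iterator[int]:
    """Yield the decimal digits of Pi (3, 1, 4, 1, 5, ...) without end.

    Gibbons' unbounded spigot: the state (q, r, t, k, n, l) encodes a
    linear fractional transformation, and a digit is emitted only once
    further terms of the series can no longer change it.
    All arithmetic is on exact Python integers.
    """
    q, r, t, k, n, l = 1, 0, 1, 1, 3, 3
    while True:
        if 4 * q + r - t < n * t:
            # The candidate digit n is settled: emit it, then shift it out.
            yield n
            q, r, t, k, n, l = (
                10 * q,
                10 * (r - n * t),
                t,
                k,
                (10 * (3 * q + r)) // t - 10 * n,
                l,
            )
        else:
            # Not settled yet: consume one more term of the series.
            q, r, t, k, n, l = (
                q * k,
                (2 * q + r) * l,
                t * l,
                k + 1,
                (q * (7 * k + 2) + r * l) // (t * l),
                l + 2,
            )


def pi_prefix(count: int) -> list[int]:
    """'Pi as a function': request any finite number of its digits."""
    return list(islice(pi_digits(), count))


print(pi_prefix(10))  # → [3, 1, 4, 1, 5, 9, 2, 6, 5, 3]
```

The design choice mirrors the point in the text: the program never stores Pi as a completed object, only a finite state plus a rule for producing the next digit on demand.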

KD – What about Stephen Wolfram’s claims that our mathematics is only one of a very wide spectrum of possible mathematics?

JB – Metamathematics isn’t different from mathematics. The computational mathematics that he uses in writing code is constructive mathematics, a branch of mathematics that has been around for a long time but was ignored by other mathematicians for not being powerful enough. Geometry and physics require continuous operations (infinities), which can only be approximated within computational mathematics. In a computational universe you can only approximate continuous operators by taking a very large set of finite automata, making a series from them, and then squinting (?) haha.
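One way to picture "approximating the continuous within computational mathematics" is the constructive-analysis habit of treating a real number as a procedure: given a requested precision, it returns a rational number within that distance of the value. The sketch below is an illustration assumed for this post, not Bach's or Wolfram's code. It approximates the square root of 2 by interval bisection on exact fractions, and the name `sqrt2_within` is hypothetical.

```python
from fractions import Fraction


def sqrt2_within(n: int) -> Fraction:
    """Return a rational number within 2**-n of the square root of 2.

    Models (as an assumption) a constructive real as a function from a
    precision n to a finite rational approximation. Each call halts
    after exactly n bisection steps; no infinite object is ever built.
    """
    lo, hi = Fraction(1), Fraction(2)  # invariant: lo**2 <= 2 < hi**2
    tolerance = Fraction(1, 2**n)
    while hi - lo > tolerance:
        mid = (lo + hi) / 2
        if mid * mid <= 2:
            lo = mid
        else:
            hi = mid
    # Since lo <= sqrt(2) < hi and hi - lo <= tolerance, lo is close enough.
    return lo


for n in (4, 10, 30):
    approx = sqrt2_within(n)
    print(n, approx, float(approx))
```

Asking for more precision simply means calling the function with a larger n; the "continuous" value only ever exists as this open-ended family of finite answers.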

27:00 KD – Talking about the commercialization of knowledge in philosophy and academia. The uselessness/impracticality of philosophy and art was part of their value. Oscar Wilde defined art as something that’s not immediately useful. Should we waste time on ideas that look utterly useless?

JB – Feynman said that physics is like sex: sometimes something useful comes from it, but that’s not why we do it. The utility of art is orthogonal to why you do it. The actual meaning of art is to capture a conscious state. In some sense, philosophy is at the root of all this. This is reflected in one of the founding myths of our civilization: the Tower of Babel. The attempt to build this cathedral. Not a material building but a metaphysical building, because it’s meant to reach the Heavens. A giant machine that is meant to understand reality. You get to this machine, this Truth God, by using people who work like ants and contribute to it.

CW – Reminds me of the Pillar of Caterpillars story “Hope for the Flowers” http://www.chinadevpeds.com/resources/Hope%20for%20the%20Flowers.pdf

30:00 – 40:00

JB – The individual toils and sacrifices for something that doesn’t give them any direct reward or care about them. It’s really just a machine/computer. It’s an AI. A system that is able to make sense of the world. People had to give up on this because the project became too large and the efforts became too specialized and the parts didn’t fit together. It fell apart because they couldn’t synchronize their languages.

The Roman Empire couldn’t fix its incentives for governance. They turned their society into a cult and burned down their epistemology. They killed those whose thinking was too rational and who rejected religious authority (i.e. talking to a burning bush shouldn’t give you standing to determine the origins of the universe). We still haven’t recovered from that. The cultists won.

CW – It is important to understand not just that the cultists won, but why they won. Why was the irrational myth more passionately appealing to more people than rational inquiry? I think this is a critical lesson. While the particulars of the religious doctrine were irrational, they may have exposed a transrational foundation which was being suppressed. Because this foundation has more direct access to the inflection point between emotion and participatory action, it gave those who used it more access to their own reward function. Groups could leverage the power of self-sacrifice as a virtue, and of demonizing archetypes to reverse their empathy against enemies of the holy cause. It’s similar to how the advertising revolution of the 20th century (see the documentary The Century of the Self) used Freudian concepts of the subconscious to exploit the irrational, egocentric urges beneath the threshold of the customer’s critical thinking. Advertisers stopped appealing to their audience with dry lists of the claimed benefits of their products and instead learned to use images and music to subliminally reference sexuality and status seeking.

I think Joscha might say this is a bug of biological evolution, which I would agree with; however, that doesn’t mean that the bug doesn’t reflect the higher cosmological significance of aesthetic-participatory phenomena. It may be the case that this significance must be honored and understood eventually in any search for ultimate truth. When the Tower of Babel failed to recognize the limitation of the outside-in view, and moved further and further from the unifying aesthetic-participatory foundation, it had to disintegrate. The same fate may await capitalism and AI. The intellect seeks maximum divorce from its origin in conscious experience for a time, before the dialectic momentum swings back (or forward) in the other direction.

To think is to abstract – to begin from an artificial nothingness and impose an abstract thought symbol on it. Thinking uses a mode of sense experience which is aesthetically transparent. It can be a dangerous tool because, unlike the explicitly aesthetic senses which are rooted directly in the totality of experience, thinking is rooted in its own isolated axioms and language, a voyeur modality of nearly unsensed sense-making. Abstraction of thought is completely incomplete – a Baudrillardian simulacrum, a copy with no original. This is what the Liar’s Paradox is secretly showing us. No proposition of language is authentically true or false; propositions are just strings of symbols that can be strung together in arbitrary and artificial ways. Like an Escher drawing of realistic-looking worlds that suggest impossible shapes, language is only a vehicle for meaning, not a source of it. Words have no authority in and of themselves to make claims of truth or falsehood. That can only come through conscious interpretation. A machine need not be grounded in any reality at all. It need not interpret or decode symbols into messages; it need only *act* in mechanical response to externally sourced changes to its own physical states.

This is the soulless soul of mechanism…the art of evacuation. Other modes of sense delight in concealing as well as revealing deep connection with all experience, but they retain an unbroken thread to the source. They are part of the single labyrinth, with one entrance and one exit and no dead ends. If my view is on the right track, we may go through hell, but we always get back to heaven eventually because heaven is unbounded consciousness, and that’s what the labyrinth of subjectivity is made of. When we build a model of the labyrinth of consciousness from the blueprints reflected only in our intellectual/logical sense channel, we can get a maze instead of a labyrinth. Dead ends multiply. New exits have to be opened up manually to patch up the traps, faster and faster. This is what is happening in enterprise-scale networks now. Our gains in the speed and reliability of computer hardware are constantly being eaten away by the need for more security, monitoring, meta-monitoring, real-time data mining, etc. Software updates, even to primitive BIOS and firmware, have become so continuous and disruptive that they require far more overhead than the threats they are supposed to defend against.

JB – The beginnings of the cathedral for understanding the universe by the Greeks and Romans had been burned down by the Catholics. It was later rebuilt, but mostly in their likeness because they didn’t get the foundations right. This still scars our civilization.

KD – Does this Tower of Babel overspecialization put our civilization at risk now?

JB – Individuals don’t really know what they are doing. They can succeed but don’t really understand. Generations get dumber as they get more of their knowledge second-hand. People believe things collectively that wouldn’t make sense if people really thought about it. Conspiracy theories. Local indoctrinations and biases pit generations against each other. Civilizations/hive minds are smarter than us. We can make out the rough shape of a Civilization Intellect but can’t make sense of it. One of the achievements of AI will be to incorporate this sum of all knowledge and make sense of it all.

KD – What does the self-inflicted destruction of civilizations tell us about the fitness function of Civilization Intelligence?

JB – Before the industrial revolution, Earth could only support about 400 million people. After industrialization, we can have hundreds of millions more people, including scientists and philosophers. It’s amazing what we did. We basically took the trees that were turning to coal in the ground (before nature evolved microorganisms to eat them) and burned through them in 100 years to give everyone a share of the plunder: the internet, a repository of porn and of all knowledge, uncensored chat rooms, etc. Only at this moment in time does this exist.

We could take this perspective: let’s say there is a universe where everything is sustainable and smart but has only agricultural technology. People have figured out how to be nice to each other and to avoid the problems of industrialization, and it is stable with a high quality of life. Then there’s another universe which is completely insane and fucked up. In this universe humanity has doomed its planet to have a couple hundred really, really good years, and you get your lifetime really close to the end of the party. Which incarnation do you choose? OMG, aren’t we lucky!

KD – So you’re saying we’re in the second universe?

JB – Obviously!

KD – What’s the time line for the end of the party?

JB – We can’t know, but we can see the sunset. It’s obvious, right? People are in denial, but it’s like we are on the Titanic and can see the iceberg, and it’s unfortunate, but they forget that without the Titanic, we wouldn’t be here. We wouldn’t have the internet to talk about it.

KD – That seems very depressing, but why aren’t you depressed about it?

40:00 – 50:00

JB – I have to be choosy about what I can be depressed about. I should be happy to be alive, not worry about the fact that I will die. We are in the final level of the game, and even though it plays out against the backdrop of a dying world, it’s still the best level.

KD – Buddhism?

JB – Still mostly a cult that breaks people’s epistemology. I don’t revere Buddhism. I don’t think there are any holy books, just manuals, and most of these manuals we don’t know how to read. They were written for societies whose circumstances don’t apply to us.

KD – What is making you claim that we are at the peak of the party now?