
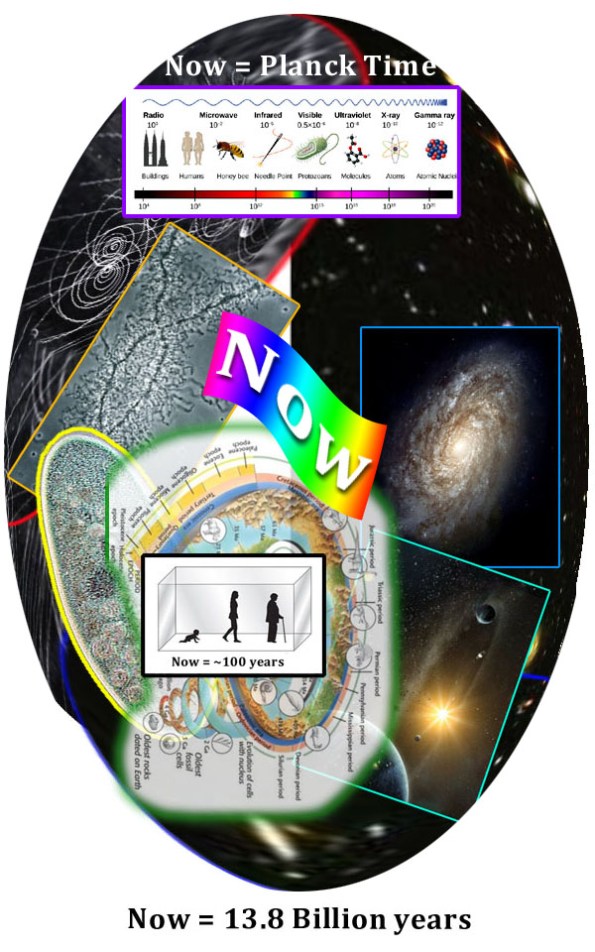

MSR Time Diagram

The Multisense Realism view of time contains both A-Series and B-Series time as emergent perspectives within the larger schema of nested/diffracted frames of perception (“Now”s).

Fooling Computer Image Recognition is Easier Than it Should Be

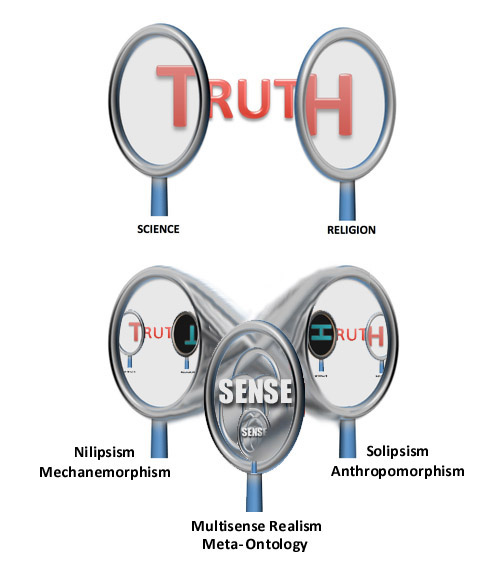

This 2016 study, Universal Adversarial Perturbations, shows that adding a specially designed, low-level noise pattern to image data causes state-of-the-art neural networks to misclassify natural images with high probability. Because the noise is almost imperceptible to the human eye, I think this should be taken as a clue that image processing technology is not ‘seeing’ images.
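To make the idea of a single, nearly invisible noise pattern that throws off a classifier more concrete, here is a toy sketch. It is an illustration under loudly stated assumptions, not the study's method: the "classifier" is a hypothetical linear model with random weights rather than a neural network, the "images" are random pixel arrays, and the perturbation is a closed-form sign-of-the-weights step (the idea behind the "fast gradient sign" attack), not the iterative algorithm used in the paper. The class names, including 'macaw', are placeholders.

```python
import random

# Toy setup (all hypothetical): a linear classifier over a flattened
# "image" of D pixels with values in [0, 1]. This is NOT a neural network
# and NOT the algorithm from the Universal Adversarial Perturbations paper.
D = 2000                                   # number of pixels
CLASSES = ["coffee pot", "plant", "macaw"]
EPS = 0.03                                 # max change per pixel: 3% of the range

rng = random.Random(0)
weights = {c: [rng.gauss(0.0, 1.0) for _ in range(D)] for c in CLASSES}


def predict(image):
    """Return the class with the highest linear score on mean-centred pixels."""
    def score(c):
        return sum(w * (p - 0.5) for w, p in zip(weights[c], image))
    return max(CLASSES, key=score)


def universal_perturbation(target, eps=EPS):
    """One fixed noise pattern, the same for every image.

    Each pixel moves by +eps or -eps in whichever direction raises the target's
    score relative to the average of the other classes. For a linear score this
    sign step gives the largest possible gain when each pixel may change by at
    most eps (before clipping).
    """
    others = [c for c in CLASSES if c != target]
    v = []
    for i in range(D):
        diff = weights[target][i] - sum(weights[c][i] for c in others) / len(others)
        v.append(eps if diff > 0 else -eps)
    return v


def add_noise(image, v):
    """Add the perturbation and clip back into the valid pixel range [0, 1]."""
    return [min(1.0, max(0.0, p + dv)) for p, dv in zip(image, v)]


images = [[rng.random() for _ in range(D)] for _ in range(100)]
v = universal_perturbation("macaw")
clean = [predict(img) for img in images]
noisy = [predict(add_noise(img, v)) for img in images]

print("largest pixel change:", max(abs(x) for x in v))
print("'macaw' before noise:", clean.count("macaw"), "of", len(images))
print("'macaw' after noise: ", noisy.count("macaw"), "of", len(images))
```

In this toy model no pixel moves by more than 3% of its range, yet because the small nudges are all aligned with the model's weights they add up across thousands of pixels, and the same pattern pushes almost every image toward the one arbitrary label, regardless of what the image "shows".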

It is significant not only that the technology can be thrown off so easily, but also that its misclassifications are so broad and unnatural. If the program had any real sense of an image, adding some digital grit to a picture of a coffee pot or a plant should not produce a ‘macaw’ hit; at worst, it should produce some other visually similar object or plant.

While many will read this paper as pointing to a need for better recognition methods, I see it as supporting a shift away from outside-in, bottom-up models of perception altogether. As I have suggested in other posts, all of our current AI models are inside out.

3/16/17 – see also http://www.popsci.com/byzantine-science-deceiving-artificial-intelligence
