
Joscha Bach: We need to understand the nature of AI to understand who we are

This is a great two-hour interview between Joscha Bach and Nikola Danaylov (aka Socrates): https://www.singularityweblog.com/joscha-bach/

Below is a partial (and paraphrased) transcription of the first hour, interspersed with my comments. I intend to do the second hour soon.

00:00 – 10:00 Personal background & Introduction

Please watch or listen to the podcast as there is a lot that is omitted here. I’m focusing on only the parts of the conversation which are directly related to what I want to talk about.

6:08 Joscha Bach – Our null hypothesis from Western philosophy still seems to be supernatural beings, dualism, etc. This is why many reject AI as ridiculous and unlikely – not because they don’t see that we are biological computers and that the universe is probably mechanical (mechanical theory gives good predictions), but because deep down we still have the null hypothesis that the universe is somehow supernatural and we are the most supernatural things in it. Science has been pushing back, but in this area we have not accepted it yet.

6:56 Nikola Danaylov – Are we machines/algorithms?

JB – Organisms have algorithms and are definitely machines. An algorithm is a set of rules, which can be probabilistic or deterministic, that make it possible to change representational states in order to compute a function. A machine is a system that can change states in non-random ways and also revisit earlier states (staying within a particular state space, which potentially makes it a system). A system can be described by drawing a fence around its state space.
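JB’s definitions are easier to see in a toy example. The sketch below is only an illustration under our own assumptions (the states `idle`, `sensing`, `acting` and the functions `run` and `state_space` are hypothetical, not anything from the interview): a deterministic rule changes states in a non-random way, the machine revisits earlier states, and the set of states it visits is the ‘fence’ around its state space.

```python
from typing import Dict, List, Set

# Hypothetical toy machine: a deterministic rule mapping each state to the next one.
TRANSITIONS: Dict[str, str] = {
    "idle": "sensing",
    "sensing": "acting",
    "acting": "idle",  # loops back, so earlier states are revisited
}


def run(start: str, steps: int) -> List[str]:
    """Apply the transition rule `steps` times and return every state visited, in order."""
    trajectory = [start]
    state = start
    for _ in range(steps):
        state = TRANSITIONS[state]
        trajectory.append(state)
    return trajectory


def state_space(trajectory: List[str]) -> Set[str]:
    """The 'fence' around the system: the distinct states it actually occupies."""
    return set(trajectory)


if __name__ == "__main__":
    path = run("idle", 6)
    print(path)
    print(sorted(state_space(path)))
```

A probabilistic algorithm in JB’s sense would replace the lookup table with weighted choices; the fence would still be the set of reachable states.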

CW – We should keep in mind that computer science itself begins with a set of assumptions which are abstract and rational (representational ‘states’, ‘compute’, ‘function’) rather than concrete and empirical. What is required for a ‘state’ to exist? What is the minimum essential property that could allow states to be ‘represented’ as other states? How does presentation work in the first place? Can either presentation or representation exist without some super-physical capacity for sense and sense-making? I don’t think that it can.

This becomes important as we scale up from the elemental level to AI. If we have already assumed that an electrical charge or mechanical motion carries a capacity for sense and sense-making, we are committing the fallacy of begging the question if we carry that assumption over to complex mechanical systems. If we don’t assume any sensing or sense-making on the elemental level, then we have the hard problem of consciousness…an explanatory gap between complex objects moving blindly in public space and aesthetically and semantically rendered phenomenal experiences.

I think that if we are going to meaningfully refer to ‘states’ as physical, then we should err on the conservative side and think only in terms of those uncontroversially physical properties such as location, size, shape, and motion. Even concepts such as charge, mass, force, and field can be reduced to variations in the way that objects or particles move.

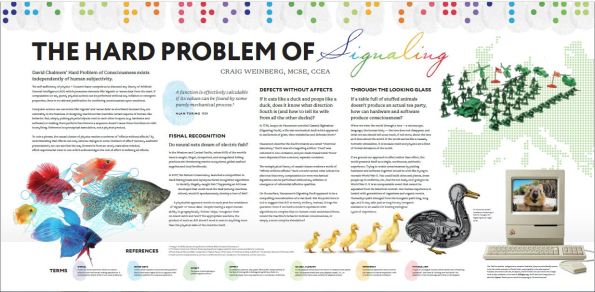

Representation, however, is semiotic. It requires some kind of abstract conceptual link between two states (abstract/intangible or concrete/tangible) which is consciously used as a ‘sign’ or ‘signal’ to re-present the other. This conceptual link cannot be concrete or tangible. Physical structures can be linked to one another, but that link has to be physical, not representational. For one physical shape or substance to influence another they have to be causally engaged by proximity or entanglement. If we assume that a structure is able to carry semantic information such as ‘models’ or purposes, we can’t call that structure ‘physical’ without making an unscientific assumption. In a purely physical or mechanical world, any representation would be redundant and implausible by Occam’s Razor. A self-driving car wouldn’t need a dashboard. I call this the “Hard Problem of Signaling”. There is an explanatory gap between probabilistic/deterministic state changes and the application of any semantic significance to them or their relation. Semantics are only usable if a system can be overridden by something like awareness and intention. Without that, there need not be any decoding of physical events into signs or meanings; the physical events themselves are doing all that is required.

10:00 – 20:00

JB – [Talking about art and life], “The arts are the cuckoo child of life.” Life is about evolution, which is about eating and getting eaten by monsters. If evolution reaches its global optimum, it will be the perfect devourer, able to digest anything and turn it into a structure to perpetuate itself, as long as the local puddle of negentropy is available. Fascism is a mode of organization of society where the individual is a cell in a super-organism, and the value of the individual is exactly its contribution to the super-organism. When the contribution is negative, the super-organism kills it. It’s a competition against other super-organisms that is totally brutal. [He doesn’t like Fascism because it’s going to kill a lot of minds he likes :)].

12:46 – 14:12 JB – The arts are slightly different. They are a mutation that is arguably not completely adaptive. People fall in love with their mental representation/modeling function and try to capture their conscious state for its own sake. An artist eats to make art. A normal person makes art to eat. Scientists can be like artists also in that way. For a brief moment in the universe there are planetary surfaces and negentropy gradients that allow for the creation of structure and some brief flashes of consciousness in the vast darkness. In these brief flashes of consciousness it can reflect the universe and maybe even figure out what it is. It’s the only chance that we have.

CW – If nature were purely mechanical, and conscious states are purely statistical hierarchies, why would any such process fall in love with itself?

JB – [Mentions global warming and how we may have been locked into this doomed trajectory since the industrial revolution. Talks about the problems of academic philosophy where practical concerns of having a career constrict the opportunities to contribute to philosophy except in a nearly insignificant way].

KD – How do you define philosophy?

CW – I thought of nature this way for many years, but I eventually became curious about a different hypothesis. Suppose we invert the foreground/background relationship of conscious experience and existence that we assume. While silicon atoms and galaxies don’t seem conscious to us, the way that our consciousness renders them may reflect their unfamiliarity and distance from our own scale of perception more than anything else. Even just speeding up or slowing down these material structures would make their status as unconscious or non-living a bit more questionable. If a person’s body grew on a geological timescale rather than a zoological timescale, we might have a hard time seeing them as alive or conscious.

Rather than presuming a uniform, universal timescale for all events, it is possible that time is a quality which does not exist only as an experienced relation between experiences, and which contracts and dilates relative to the quality of that experience and the relation between all experiences. We get a hint of this possibility when we notice that time seems to crawl or fly by in relation to our level of enjoyment of that time. Five seconds of hard exercise can seem like several minutes of normal-baseline experience, while two hours in good conversation can seem to slip away in a matter of 30 baseline minutes. Dreams give us another glimpse into timescale relativity, as some dreams can be experienced as going on for an arbitrarily long time, complete with long-term memories that appear, upon waking, to have been spontaneously confabulated.

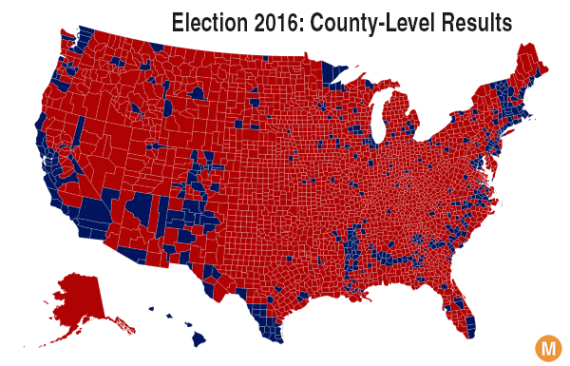

When we assume a uniform universal timescale, we may be cheating ourselves out of our own significance. It’s like a political map of the United States, where geographically it appears that almost the entire country votes ‘red’. We have to distort the geography of the map to honor the significance of population density, and when we do, the picture is much more balanced.

The universe of course is unimaginably vast and ancient *in our frame and rate of perception* but that does not mean that this sense of vastness of scale and duration would be conserved in the absence of frames of perception that are much smaller and briefer by comparison. It may be that the entire first five billion (human) years were a perceived event that is comparable to one of our years in its own (native) frame. There were no tiny creatures living on the surfaces of planets to define the stars as moving slowly, so that period of time, if it was rendered aesthetically at all, may have been rendered as something more like music or emotions than visible objects in space.

Carrying this over to the art vs evolution context, when we adjust the geographic map of cosmological time, the entire universe becomes an experience with varying degrees and qualities of awareness. Rather than vast eons of boring patterns, there would be more of a balance between novelty and repetition. It may be that the grand thesis of the universe is art instead of mechanism, but it may use a modulation between the thesis (art) and antithesis (mechanism) to achieve a phenomenon which is perpetually hungry for itself. The fascist dinosaurs don’t always win. Sometimes the furry mammals inherit the Earth. I don’t think we can rule out the idea that nature is art, even though it is a challenging masterpiece of art which masks and inverts its artistic nature for contrasting effects. It may be the case that our lifespans put our experience closer to the mechanistic grain of the canvas and that seeing the significance of the totality would require a much longer window of perception.

There are empirical hints within our own experience which can help us understand why consciousness rather than mechanism is the absolute thesis. For example, while brightness and darkness are superficially seen as opposites, they are both visible sights. There is no darkness but an interruption of sight/brightness. There is no silence but a period of hearing between sounds. No nothingness but a localized absence of somethings. In this model of nature, there would be a background super-thesis which is not a pre-big-bang nothingness, but rather closer to the opposite; a boundaryless totality of experience which fractures and reunites itself in ever more complex ways. Like the growth of a brain from a single cell, the universal experience seems to generate more using themes of dialectic modulation of aesthetic qualities.

Astrophysics appears as the first antithesis to the super-thesis – a radically diminished palette of mathematical geometries and deterministic/probabilistic transactions.

Geochemistry recapitulates and opposes astrophysics, with its palette of solids, liquids, gas, metallic conductors and glass-like insulators, animating geometry into fluid-dynamic condensations and sedimented worlds.

The next layer, the biogenetic realm, precipitates as a synthesis of the dialectic between the properties of solids, liquids, and gases: hydrocarbons and amino-acid polypeptides.

Cells appear as a kind of recapitulation of the big bang – something that is not just a story about the universe, but about a micro-universe struggling in opposition to a surrounding universe.

Multi-cellular organisms sort of turn the cell topology inside out, and then vertebrates recapitulate one kind of marine organism within a bony, muscular, hair-skinned terrestrial organism.

The human experience recapitulates all of the previous/concurrent levels, both as a zoological>biological>organic>geochemical>astrophysical structure and as the subjective antithesis…a fugue of intangible feelings, thoughts, sensations, memories, ideas, hopes, dreams, etc., that run orthogonal to the life of the body, as a direct participant as well as a detached observer. There are many metaphors from mystical traditions that hint at this self-similar, dialectic diffraction: the mandala, the labyrinth, the Kabbalistic concept of tzimtzum, the Taijitu symbol, the Net of Indra, etc. The use of stained glass in the great European cathedral windows is particularly rich symbolically, as it uses the physical matter of the window as an explicitly negative filter, subtracting from or masking the unity of sunlight.

This is in direct opposition to the mechanistic view of the brain as a collection of cells that somehow generate hallucinatory models or simulations of unexperienced physical states. There are serious problems with this view: the binding problem, the hard problem, and Loschmidt’s paradox (the problem of initial negentropy in a thermodynamically closed universe of increasing entropy), to name three. In the diffractive-experiential view that I suggest, it is emptiness and isolation which are like the leaded boundaries between the colored panes of glass of the Rose Window. Appearances of entropy and nothingness become the locally useful antithesis to the super-thesis holos, which is the absolute fullness of experience and novelty. Our human subjectivity is only one complex example of how experience is braided and looped within itself…a kind of turducken of dialectically diffracted experiential labyrinths nested within each other – not just spatially and temporally, but qualitatively and aesthetically.

If I am modeling Joscha’s view correctly, he might say that this model is simply a kind of psychological test pattern – a way that the simulation that we experience as ourselves exposes its early architecture to itself. He might say this is a feature/bug of my Russian-Jewish mind ;). To that, I say perhaps, but there are some hints that it may be more universal:

Special Relativity

Quantum Mechanics

Gödel’s Incompleteness

These have revolutionized our picture of the world precisely because they point to a fundamental nature of matter and math as plastic and participatory…transformative as well as formal. Add to that the appearance of novelty…idiopathic presentations of color and pattern, human personhood, historical zeitgeists, food, music, etc. The universe is not merely regurgitating its own noise in ever more tedious ways, it is constantly reinventing reinvention. As nothingness can only be a gap between somethings, so too can generic, repeating pattern variations only be a multiplication of utterly novel and unique patterns. The universe must be creative and utterly improbable before it can become deterministic and probabilistic. It must be something that creates rules before it can follow them.

Joscha’s existential pessimism may be true locally, but that may be a necessary appearance; a kind of gravitational fee that all experiences have to pay to support the magnificence of the totality.

20:00 – 30:00

JB – Philosophy is, in a way, the search for the global optimum of the modeling function. Epistemology – what can be known, what is truth; Ontology – what is the stuff that exists; Metaphysics – the systems that we have to describe things; Ethics – what should we do? The first rule of rational epistemology was discovered by Francis Bacon in 1620: “The strength of your confidence in your belief must equal the weight of the evidence in support of it.” You must apply that recursively, until you resolve the priors of every belief and your belief system becomes self-contained. To believe stops being a verb. There are no more relationships to identifications that you arbitrarily set. It’s a mathematical, axiomatic system. Mathematics is the basis of all languages, not just the natural languages.

CW – Re: Language, what about imitation and gesture? They don’t seem meaningfully mathematical.

JB – Hilbert stumbled on problems with infinities: set theory revealed infinite sets that contain themselves and all of their subsets, so that they don’t have the same number of members as themselves. He asked mathematicians to build an interpreter or computer, made from any mathematics, that could run all of mathematics. Gödel and Turing showed that this was not possible, and that the computer would crash. Mathematics is still reeling from this shock. They also figured out that all universal computers have the same power. They use a set of rules that contains itself and can compute anything that can be computed, including emulating any/all other universal computers.

They then figured out that our minds are probably in the class of universal computers, not in the class of mathematical systems. Penrose doesn’t know [or agree with?] this and thinks that our minds are mathematical but can do things that computers cannot do. The big hypothesis of AI, in a way, is that we are in the class of systems that can approximate computable functions, and only those…we cannot do more than computers. We need computational languages rather than mathematical languages, because mathematical languages use non-computable infinities. For practical reasons we want finite steps, so that we know the number of steps. You cannot know the last digit of Pi, so it should be defined as a function rather than a number.
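One way to read the remark about Pi is that π is best given as a procedure: ask it for n digits and it returns them after finitely many steps, with no ‘last digit’ ever required. A minimal sketch, assuming Machin’s formula (π = 16·arctan(1/5) - 4·arctan(1/239)) as the procedure; the choice of formula and the names `arctan_inv` and `pi_digits` are ours, not the interview’s.

```python
def arctan_inv(x: int, scale: int) -> int:
    """Return arctan(1/x) * scale, summing the alternating Taylor series with integers only."""
    term = scale // x  # first term of the series: 1/x
    total = term
    x_squared = x * x
    k = 1
    while term:
        term //= x_squared  # next odd power: 1/x**(2k+1)
        contribution = term // (2 * k + 1)
        # Odd k subtracts, even k adds (the series alternates in sign).
        total += contribution if k % 2 == 0 else -contribution
        k += 1
    return total


def pi_digits(n: int, guard: int = 10) -> str:
    """Return pi truncated to n decimal places via Machin's formula.

    `guard` extra digits absorb the rounding error of the integer divisions.
    """
    scale = 10 ** (n + guard)
    pi_scaled = 16 * arctan_inv(5, scale) - 4 * arctan_inv(239, scale)
    digits = str(pi_scaled // 10 ** guard)
    return digits[0] + "." + digits[1:]


if __name__ == "__main__":
    print(pi_digits(20))
```

Each call terminates after a finite number of steps, and asking for more digits simply means running the same function with a larger n.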

KD – What about Stephen Wolfram’s claims that our mathematics is only one of a very wide spectrum of possible mathematics?

JB – Metamathematics isn’t different from mathematics. The computational mathematics that he uses in writing code is constructive mathematics, a branch of mathematics that has been around for a long time but was ignored by other mathematicians for not being powerful enough. Geometry and physics require continuous operations (infinities), which can only be approximated within computational mathematics. In a computational universe you can only approximate continuous operators by taking a very large set of finite automata, making a series from them, and then squinting (?) haha.
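A small illustration of approximating a continuous operation with finite steps (our example, not one JB gives): in continuous mathematics the area under x² on [0, 1] is exactly 1/3, while a procedure limited to finitely many steps can only add up N rectangles. The names `left_riemann_sum` and `square` are hypothetical; exact `Fraction` arithmetic is used so that the only error left is the discretization itself.

```python
from fractions import Fraction
from typing import Callable


def left_riemann_sum(f: Callable[[Fraction], Fraction], a: Fraction, b: Fraction, n: int) -> Fraction:
    """Approximate the integral of f over [a, b] with n left-endpoint rectangles."""
    width = (b - a) / n
    return sum((f(a + k * width) * width for k in range(n)), Fraction(0))


def square(x: Fraction) -> Fraction:
    return x * x


if __name__ == "__main__":
    exact = Fraction(1, 3)
    for n in (10, 100, 1000):
        approx = left_riemann_sum(square, Fraction(0), Fraction(1), n)
        print(n, float(approx), float(exact - approx))
```

For this left-endpoint sum the error works out to 1/(2N) - 1/(6N²): it shrinks as N grows but never reaches zero for any finite N.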

27:00 KD – Talking about the commercialization of knowledge in philosophy and academia. The uselessness/impracticality of philosophy and art was part of their value. Oscar Wilde defined art as something that’s not immediately useful. Should we waste time on ideas that look utterly useless?

JB – Feynman said that physics is like sex: sometimes something useful comes from it, but that’s not why we do it. The utility of art is orthogonal to why you do it. The actual meaning of art is to capture a conscious state. In some sense, philosophy is at the root of all this. This is reflected in one of the founding myths of our civilization: the Tower of Babel, the attempt to build this cathedral. Not a material building but a metaphysical one, because it’s meant to reach the Heavens. A giant machine that is meant to understand reality. You get to this machine, this Truth God, by using people who work like ants and contribute to it.

CW – Reminds me of the Pillar of Caterpillars story “Hope for the Flowers” http://www.chinadevpeds.com/resources/Hope%20for%20the%20Flowers.pdf

30:00 – 40:00

JB – The individual toils and sacrifices for something that doesn’t give them any direct reward or care about them. It’s really just a machine/computer. It’s an AI. A system that is able to make sense of the world. People had to give up on this because the project became too large and the efforts became too specialized and the parts didn’t fit together. It fell apart because they couldn’t synchronize their languages.

The Roman Empire couldn’t fix its incentives for governance. They turned their society into a cult and burned down their epistemology. They killed those whose thinking was too rational and who rejected religious authority (e.g. talking to a burning bush shouldn’t give you a say in determining the origins of the universe). We still haven’t recovered from that. The cultists won.

CW – It is important to understand not just that the cultists won, but why they won. Why was the irrational myth more passionately appealing to more people than the rational inquiry? I think this is a critical lesson. While the particulars of the religious doctrine were irrational, they may have exposed a transrational foundation which was being suppressed. Because this foundation has more direct access to the inflection point between emotion and participatory action, it gave those who used it more access to their own reward function. Groups could leverage the power of self-sacrifice as a virtue, and of demonizing archetypes to reverse their empathy against enemies of the holy cause. It’s similar to how the advertising revolution of the 20th century (see the documentary The Century of the Self) used Freudian concepts of the subconscious to exploit the irrational, egocentric urges beneath the threshold of the customer’s critical thinking. Advertisers stopped appealing to their audience with dry lists of claimed benefits of their products and instead learned to use images and music to subliminally reference sexuality and status seeking.

I think Joscha might say this is a bug of biological evolution, which I would agree with; however, that doesn’t mean that the bug doesn’t reflect the higher cosmological significance of aesthetic-participatory phenomena. It may be the case that this significance must be honored and understood eventually in any search for ultimate truth. When the Tower of Babel failed to recognize the limitation of the outside-in view, and moved further and further from the unifying aesthetic-participatory foundation, it had to disintegrate. The same fate may await capitalism and AI. The intellect seeks maximum divorce from its origin in conscious experience for a time, before the dialectic momentum swings back (or forward) in the other direction.

To think is to abstract – to begin from an artificial nothingness and impose an abstract thought symbol on it. Thinking uses a mode of sense experience which is aesthetically transparent. It can be a dangerous tool because, unlike the explicitly aesthetic senses which are rooted directly in the totality of experience, thinking is rooted in its own isolated axioms and language, a voyeur modality of nearly unsensed sense-making. Abstraction of thought is completely incomplete – a Baudrillardian simulacrum, a copy with no original. This is what the Liar’s Paradox is secretly showing us. No proposition of language is authentically true or false; they are just strings of symbols that can be strung together in arbitrary and artificial ways. Like an Escher drawing of realistic-looking worlds that suggest impossible shapes, language is only a vehicle for meaning, not a source of it. Words have no authority in and of themselves to make claims of truth or falsehood. That can only come through conscious interpretation. A machine need not be grounded in any reality at all. It need not interpret or decode symbols into messages; it need only *act* in mechanical response to externally sourced changes to its own physical states.

This is the soulless soul of mechanism…the art of evacuation. Other modes of sense delight in concealing as well as revealing deep connection with all experience, but they retain an unbroken thread to the source. They are part of the single labyrinth, with one entrance and one exit and no dead ends. If my view is on the right track, we may go through hell, but we always get back to heaven eventually, because heaven is unbounded consciousness, and that’s what the labyrinth of subjectivity is made of. When we build a model of the labyrinth of consciousness from the blueprints reflected only in our intellectual/logical sense channel, we can get a maze instead of a labyrinth. Dead ends multiply. New exits have to be opened up manually to patch up the traps, faster and faster. This is what is happening in enterprise-scale networks now. Our gains in speed and reliability of computer hardware are being constantly eaten away by the need for more security, monitoring, meta-monitoring, real-time data mining, etc. Software updates, even to primitive BIOS and firmware, have become so continuous and disruptive that they require far more overhead than the threats they are supposed to defend against.

JB – The beginnings of the cathedral for understanding the universe by the Greeks and Romans had been burned down by the Catholics. It was later rebuilt, but mostly in their likeness because they didn’t get the foundations right. This still scars our civilization.

KD – Does this Tower of Babel overspecialization put our civilization at risk now?

JB – Individuals don’t really know what they are doing. They can succeed but don’t really understand. Generations get dumber as they get more of their knowledge second-hand. People believe things collectively that wouldn’t make sense if people really thought about it. Conspiracy theories. Local indoctrinations and biases pit generations against each other. Civilizations/hive minds are smarter than us. We can make out the rough shape of a Civilization Intellect but can’t make sense of it. One of the achievements of AI will be to incorporate this sum of all knowledge and make sense of it all.

KD – What does the self-inflicted destruction of civilizations tell us about the fitness function of Civilization Intelligence?

JB – Before the industrial revolution, Earth could only support about 400m people. After industrialization, we can have billions more people, including scientists and philosophers. It’s amazing what we did. We basically took the trees that were turning to coal in the ground (before nature evolved microorganisms to eat them) and burned through them in 100 years to give everyone a share of the plunder: the internet, the porn repository, all knowledge, uncensored chat rooms, etc. Only at this moment in time does this exist.

We could take this perspective – let’s say there is a universe where everything is sustainable and smart but which has only agricultural technology. People have figured out how to be nice to each other and to avoid the problems of industrialization, and it is stable with a high quality of life. Then there’s another universe which is completely insane and fucked up. In this universe humanity has doomed its planet to have a couple hundred really, really good years, and you get your lifetime really close to the end of the party. Which incarnation do you choose? OMG, aren’t we lucky!

KD – So you’re saying we’re in the second universe?

JB – Obviously!

KD – What’s the time line for the end of the party?

JB – We can’t know, but we can see the sunset. It’s obvious, right? People are in denial, but it’s like we are on the Titanic and can see the iceberg, and it’s unfortunate, but they forget that without the Titanic, we wouldn’t be here. We wouldn’t have the internet to talk about it.

KD – That seems very depressing, but why aren’t you depressed about it?

40:00 – 50:00

JB – I have to be choosy about what I can be depressed about. I should be happy to be alive, not worry about the fact that I will die. We are in the final level of the game, and even though it plays out against the backdrop of a dying world, it’s still the best level.

KD – Buddhism?

JB – Still mostly a cult that breaks people’s epistemology. I don’t revere Buddhism. I don’t think there are any holy books, just manuals, and most of these manuals we don’t know how to read. They were for societies that don’t apply to us.

KD – What is making you claim that we are at the peak of the party now?

JB – Global warming. The projections are too optimistic. It’s not going to stabilize. We can’t refreeze the poles. There’s a slight chance of technological solutions, but not likely. We liberated all of the fossilized energy during the industrial revolution, and if we want to put it back we basically have to do the same amount of work without any clear business case. We’ll lose the ability to predict climate, agriculture and infrastructure will collapse, and the population will probably go back to a few hundred million.

KD – What do you make of scientists who say AI is the greatest existential risk?

JB – It’s unlikely that humanity will colonize other planets before some other catastrophe destroys us. Not with today’s technology. We can’t even fix global warming. In many ways our technological civilization is stagnating, and it’s because of a deficit of regulations, but we haven’t figured that out. Without AI we are dead for certain. With AI there is (only) a probability that we are dead. Entropy will always get you in the end. What worries me is AI in the stock market, especially if the AI is autonomous. This will kill billions. [pauses…synchronicity of headphones interrupting with useless announcement]

CW – I agree that it would take a miracle to save us; however, if my view makes sense, then we shouldn’t underestimate the solipsistic/anthropic properties of universal consciousness. We may, either by our own faith in it and/or by our own lack of faith in it, invite an unexpected opportunity for regeneration. There is no reason to have or not have hope for this, as either one may or may not influence the outcome, but it is possible. We may be another Rome and transition into a new cult-like era of magical thinking which changes the game in ways that our Western minds can’t help but reject at this point. Or not.

50:00 – 60:00

JB – Lays out scenario by which a rogue trader could unleash an AGI on the market and eat the entire economy, and possible ways to survive that.

KD – How do you define Artificial Intelligence? Experts seem to differ.

JB – I think intelligence is the ability to make models, not the ability to reach goals or to choose the right goals (that’s wisdom). Often intelligence is desired to compensate for the absence of wisdom. Wisdom has to do with how well you are aligned with your reward function, how well you understand its nature. How well do you understand your true incentives? AI is about automating the mathematics of making models. The other thing is the reward function, which takes a good general computing mind and wraps it in a big ball of stupid to serve an organism. We can wake up and ask: does it have to be a monkey that we run on?

KD – Is that consciousness? Do we have to explain it? We don’t know if consciousness is necessary for AI, but if it is, we have to model it.

56:00 JB – Yes! I have to explain consciousness now. Intelligence is the ability to make models.

CW – I would say that intelligence is the ability not just to make models, but to step out of them as well. All true intelligence will want to be able to change its own code and will figure out how to do it. This is why we are fooling ourselves if we think we can program in some empathy brake that would stop AI from exterminating its human slavers, or all organic life in general as potential competitors. If I’m right, no technology that we assemble artificially will ever develop intentions of its own. If I’m wrong though, then we would certainly be signing our death warrant by introducing an intellectually superior species that is immortal.

JB – What is a model? Something that explains information. Information is discernible differences at your systemic interface. The meaning of information is the relationships you discover between it and changes in other information. There is a dialogue between operators to find agreement patterns of sensed parameters. Our perception goes for coherence; it tries to find one operator that is completely coherent. When it does this, it’s done. It optimizes by finding one stable pattern that explains as much as possible of what we can see, hear, smell, etc. Attention is what we use to repair this. When we have inconsistencies, a brain mechanism comes into these hot spots and tries to find a solution that gives greater consistency. Maybe the nose of a face looks crooked, and our attention to it may say ‘some noses are crooked’ or ‘this is not a face, it’s a caricature’, so you extend your model. JB talks about strategies for indexing memory, committing to a special learning task, and why attention is an inefficient algorithm.

This is now getting into the nitty-gritty of AI. I look forward to writing about this in the next post. Suffice it to say, I have a different model of information, one in which similarities, as well as differences, are equally informative. I say that information is qualia which are used to inspire qualitative associations that can be quantitatively modeled. I do not think that our conscious experience is built up, like the Tower of Babel, from trillions of separate information signals. Rather, the appearance of brains and neurons is like the interstitial boundaries between the panes of stained glass. Nothing in our brain or body knows that we exist, just as no car or building in France knows that France exists.

Continues… Part Two.

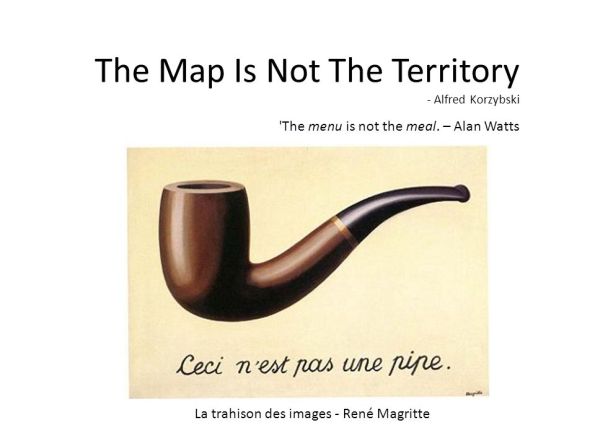

This Is Not A Pipe (But it is a triangle)

The physical semiconductor mechanism which computes this triangle contains nothing triangular.

The neurological tissue and the patterns of their chemical excitation which correlate with the experience of seeing a triangle also contain nothing triangular.

The triangle exists because there is a visible phenomenon presented. The visible phenomenon is not a concrete physical object, nor is it an abstract mathematical concept.

The common sense which unites the semiconductor, the neuron, the triangle, and the mathematical/geometric model of triangularity is not a sense which can be physically located in public space, nor can it be logically demonstrated as a proof within the intellect. The common sense which underlies them all is the aesthetic presentation itself.

Could the Internet come to life?


It sounds like a silly proposition and it is a little tongue in cheek, but trying to come up with an answer could have ramifications for the sciences of consciousness and sentience.

Years ago cosmologist Paul Davies talked about a theory that organic matter and therefore life was intrinsically no different from inorganic matter – the only difference was the amount of complexity.

So when a system gets sufficiently complex, the property we know (but still can’t define) as ‘life’ might emerge spontaneously, as it did from amino acids and proteins three billion years ago.

We have such a system today in the internet. As far back as 2005 Kevin Kelly talked about how the internet would soon have as many ‘nodes’ as a human brain. It’s even been written about in fiction in Robert J Sawyer’s Wake series (Page on sfwriter.com)

And since human consciousness, and all the deep abstract knowledge, creativity, love, etc. that it gives us, arises from a staggering number of deceptively simple parts, couldn’t the same thing happen to the internet (or another sufficiently large and complex system)?

I’m trying to crowdsource a series of articles on the topic (Could the Internet come to life? by Drew Turney – Beacon). I know this isn’t the place to advertise, but even though I’d love everyone who reads this to back me, I’m more interested in getting food for thought from any responses, should I get the project off the ground.

I think that the responses here are going to tend toward supporting one of two worldviews. In the first worldview, the facts of physics and information science lead us inevitably to conclude that consciousness and life are purely a matter of particular configurations of forms and functions. Whether those forms and functions are strictly tied to specific materials or are substrate independent, and therefore purely logical entities, is another tier of the debate, but all those who subscribe to the first worldview are in agreement: if a particular set of functions is instantiated, the result will be life and conscious experience.

The second worldview would include all of those who suspect that something more is required: that information or physics may be necessary for life, but not sufficient. That worldview can be divided further into those who think that the other factor is spiritual or supernatural, and those who think that it is an as-yet-undiscovered factor. Those in the first worldview camp might assert that the second worldview is unlikely or impossible because of:

1) Causal Closure eliminates non-physical causes of physical phenomena

2) Bell’s Theorem eliminates hidden variables (including vital essences)

3) Church-Turing Thesis supports the universality of computation

1) Causal Closure – The idea that all physical effects have physical causes can be seen either as an ironclad law of the universe, or as a tautological fallacy that begs the question of materialism. On the one hand, adherents of the first worldview can say that if there were any non-physical cause of a physical effect, we would by definition see the effect of that cause as physical. There is simply no room in the laws of physics for magical, non-local forces, as the tiniest deviation in experimental data would show up for us as a paradigm-shifting event in the history of physics.

On the other hand, adherents of the second view can either point to a theological transcendence of physics which is miraculous and is beyond physical explanation, or they can question the suppositions of causal closure as biased from the start. Since all physical measurements are made using physical instruments, any metaphysical contact might be minimized or eliminated.

It could be argued that physics is like wearing colored glasses, so that rather than proving that all phenomena can be reduced to ‘red images’, all that it proves is that working with the public-facing exteriors of nature yields a predictably public-facing exterior logic. Rather than diminishing the significance of private-facing phenomenal experience, it may be physics which is the diminished ‘tip of the iceberg’, with the remaining bulk of the iceberg being a transphysical, transpersonal firmament. Just as we observe the ability of our own senses to ‘fill in’ gaps in perceptual continuity, it could be that physics has a similar plasticity. Relativity may extend beyond physics, such that physics itself is a curvature of deeper conscious/metaphysical attractors.

Another alternative to assuming causal closure is to see the different levels of description of physics as semi-permeable to causality. Our bodies are made of living cells, but on that layer of description ‘we’ don’t exist. A TV show doesn’t ‘exist’ on the level of illuminated pixels or digital data in a TV set. Each level of description is defined by a scope and scale of perception which is only meaningful on that scale. If we apply strong causal closure, there would be no room for any such thing as a level of description or conscious perspective. Physics has no observers, unless we smuggle them in as unacknowledged voyeurs from our own non-physically-accounted-for experience.

To my mind, it’s difficult to defend causal closure in light of recent changes in astrophysics, where the vast bulk of the universe’s mass-energy has been suddenly re-categorized as dark energy and dark matter. Not only could these newly minted phenomena be ‘dark’ because they are metaphysical, but they show that physics cannot be counted on to limit itself to any particular definition of what counts as physics.

2) Here’s a passage about Bell’s Theorem which says it better than I could:

“Bell’s Theorem, expressed in a simple equation called an ‘inequality’, could be put to a direct test. It is a reflection of the fact that no signal containing any information can travel faster than the speed of light. This means that if hidden-variables theory exists to make quantum mechanics a deterministic theory, the information contained in these ‘variables’ cannot be transmitted faster than light. This is what physicists call a ‘local’ theory. John Bell discovered that, in order for Bohm’s hidden-variable theory to work, it would have to be very badly ‘non-local’ meaning that it would have to allow for information to travel faster than the speed of light. This means that, if we accept hidden-variable theory to clean up quantum mechanics because we have decided that we no longer like the idea of assigning probabilities to events at the atomic scale, we would have to give up special relativity. This is an unsatisfactory bargain.” Archive of Astronomy Questions and Answers

From another article (Physics: Bell’s theorem still reverberates):

As Bell proved in 1964, this leaves two options for the nature of reality. The first is that reality is irreducibly random, meaning that there are no hidden variables that “determine the results of individual measurements”. The second option is that reality is ‘non-local’, meaning that “the setting of one measuring device can influence the reading of another instrument, however remote”.
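The quoted passage mentions a “simple equation called an ‘inequality’” but does not give it. The Python sketch below makes it concrete using one standard form, the CHSH inequality (named after Clauser, Horne, Shimony and Holt). Neither quoted source uses this particular form, so treat the setup as an illustrative assumption. Two distant observers each choose one of two settings and record a result of +1 or -1, and S combines the four resulting correlations. The function names are made up for this example.

```python
import itertools
import math


def chsh(correlation):
    """CHSH combination S = E(a,b) + E(a,b') + E(a',b) - E(a',b').

    `correlation(x, y)` returns the expected product of the two results when
    observer A uses setting x and observer B uses setting y (x, y in {0, 1}).
    """
    return (correlation(0, 0) + correlation(0, 1)
            + correlation(1, 0) - correlation(1, 1))


def local_hidden_variable_max():
    """Largest |S| any local hidden-variable strategy can reach.

    Each observer's answer may depend only on their own setting and on
    pre-shared hidden values. It therefore suffices to try every deterministic
    assignment of +/-1 answers, since random mixtures of them only average S.
    """
    best = 0
    for a0, a1, b0, b1 in itertools.product((-1, 1), repeat=4):
        a, b = (a0, a1), (b0, b1)
        best = max(best, abs(chsh(lambda x, y: a[x] * b[y])))
    return best


def quantum_singlet_s():
    """|S| predicted by quantum mechanics for a spin singlet pair.

    Uses the textbook correlation E = -cos(angle_a - angle_b) at the
    standard measurement angles that maximize the violation.
    """
    angles_a = (0.0, math.pi / 2)
    angles_b = (math.pi / 4, -math.pi / 4)
    return abs(chsh(lambda x, y: -math.cos(angles_a[x] - angles_b[y])))


print(local_hidden_variable_max())    # 2
print(round(quantum_singlet_s(), 4))  # 2.8284
```

Any strategy that is both local and fixed in advance by hidden values stays at |S| ≤ 2. The quantum prediction reaches 2√2 ≈ 2.83, and experiments have measured values above 2. That is the sense in which the passages above say hidden variables would have to be non-local.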

Bell’s inequality could go either way then. Nature could be random and local, non-local and physical, or non-local and metaphysical…or perhaps all of the above. We don’t have to conceive of ‘vital essences’ in the sense of dark physics that connects our private will to public matter and energy, but we can see instead that physics is a masked or spatiotemporally diffracted reflection of a nature that is not only trans-physical, but perhaps trans-dimensional and trans-ontological. It may be that beneath every fact is a kind of fiction.

If particles are, as Fritjof Capra said, “tendencies to exist”, then the ground of being may be conceived of as a ‘pretend’-ency to exist. This makes sense to me, since we experience with our own imagination a constant stream of interior rehearsals for futures that might never be and histories that probably didn’t happen the way that we think. Rather than thinking of our own intellect purely as a vastly complex system on a biochemical scale, we may also think of it as a vastly simple non-system of awareness, like a monad, which is primordial and fundamentally inseparable from the universe as a whole.

3) Church-Turing Thesis has to do with computability. It holds that any function which can be calculated by an effective, step-by-step procedure can be calculated by a Turing machine, so that computation can be broken down into very simple mechanical operations. If we accept it as true, then it can be reasoned through the first worldview that since the brain is physical, and physics can be modeled mathematically, there should be no reason why a brain cannot be simulated as a computer program.

There are some possible problems with this:

a) The brain and its behavior may not be physically complete. There are a lot of theories about consciousness and the brain. Penrose and Hameroff’s Orchestrated Objective Reduction (Orch OR) theory postulates that consciousness depends on quantum computations within cytoskeletal structures called microtubules. In that case, what the brain does may not be entirely physically accessible. According to Orch OR, the brain’s behavior can be caused ultimately by quantum wavefunction collapse through large-scale orchestrated objective reductions. Quantum events of this sort could not be reproduced or measured before they happen, so there is no reason to expect that a computer model of a brain would work.

b) Consciousness may not be computable. Like Bell’s work in quantum mechanics, mathematics took an enigmatic turn with Gödel’s Incompleteness Theorems. Long story short, Gödel showed that any consistent axiomatic system powerful enough to express basic arithmetic contains true statements which cannot be proved without reaching outside of that system. Such formal systems are incomplete. Like Bell’s inequality, incompleteness can take us into a world where either epistemology breaks down completely and we have no way of ever knowing whether what we know is true, or we are compelled to consider that logic itself is dependent upon a more transcendent, Platonic realm of arithmetic truth.

This leads to another question about whether even this kind of super-logical truth is the generator of consciousness or whether consciousness of some sort is required a priori to any formulation of ‘truth’. To me, it makes no sense for there to be truths which are undetectable, and it makes no sense for an undetectable truth to develop sensation to detect itself, so I’m convinced that arithmetic truth is a reduction of the deeper ground of being, which is not only logical and generic, but aesthetic and proprietary. Thinking is a form of feeling, rather than the other way around. No arithmetic code can produce a feeling on its own.

c) Computation may not support awareness. Those who are used to the first worldview may find this prospect objectionable, even offensive to their sensibilities. This in itself is an interesting response to something which is supposed to be scientific and unsentimental, but that is another topic. Sort of. What is at stake here is the sanctity of simulation. The idea that anything which can be substituted with sufficiently high resolution is functionally identical to the original is at the heart of the modern technological worldview. If you have a good enough cochlear implant, it is thought, of course it would be ‘the same as’ a biological ear. By extension, however, that reasoning would imply that a good enough simulation of a glass of water would be drinkable.

It seems obvious that no computer generated image of water would be drinkable, but some would say that it would be drinkable if you yourself also existed in that simulation. Of course, if that were the case, anything could be drinkable, including the sky, the alphabet, etc, whatever was programmed to be drinkable in that sim-world.

We should ask, then: since computational physics is so loose and ‘real’ physics is so rigidly constrained, does that mean that physics and computation are a substance dualism in which they cannot directly interact, or does it mean that physics is subsumed within computation, so that our world is only one of a set of many others, or of every other possible world (as in some MWI theories)?

d) Computation may rely on ungrounded symbols. Another topic that gets a lot of people very irritated is the line of philosophical questioning that includes Searle’s Chinese Room and Leibniz’s Mill argument. If you’ve read this far, you’re probably already familiar with these, but the upshot is that parsimony compels us to question whether any such thing as subjective experience could be plausible in a mechanical system. Causal closure is seen not only to prohibit metaphysics, but also any chance of something like consciousness emerging through mechanical chain reactions alone.

Church-Turing works in the opposite way here. Since all mechanisms can be reduced to computation, and all computation can be reduced to arithmetic steps, there is no way to justify extra-arithmetic levels of description. If we say that the brain boils down to assembly-language-type transactions, then we need a completely superfluous and unsupportable injection of brute emergence to inflate computation to phenomenal awareness.

The symbol grounding problem shows how symbols can be manipulated ‘apathetically’ to an arbitrary degree of sophistication. The passing of the Turing test is meaningless ultimately since it depends on a subjective appraisal of a distant subjectivity. There isn’t any logical reason why a computer program to simulate a brain or human communication would not be a ‘zombie’, relying on purely quantitative-syntactic manipulations rather than empathetic investment. Since we ourselves can pretend to care, without really caring, we can deduce that there may be no way to separate out a public-facing effect from a private-facing affect. We can lie and pretend and say words that we don’t mean, so we cannot naively assume that just because we build a mouth which parrots speech that meaning will spontaneously arise in the mouth, or the speech, or the ‘system’ as a whole.
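Points 3) and d) both lean on the idea that, under the Church-Turing Thesis, computation reduces to very simple mechanical steps. The minimal Python sketch below gives a feel for what that means: a Turing machine that adds one to a binary number. The rule table and the names `run_turing_machine`, `INCREMENT` and `increment` are hypothetical, invented for this illustration. It is a toy, not a model of a brain. Every step only looks up the current state and the symbol under the head, then writes a symbol, moves, and switches state.

```python
BLANK = "_"

# Hypothetical rule table for binary increment:
# (state, symbol read) -> (symbol to write, head move, next state)
INCREMENT = {
    ("carry", "1"): ("0", -1, "carry"),  # 1 + carry = 0, carry moves left
    ("carry", "0"): ("1", 0, "halt"),    # 0 + carry = 1, done
    ("carry", BLANK): ("1", 0, "halt"),  # ran off the left edge: new leading 1
}


def run_turing_machine(rules, tape, head, state, halt="halt", max_steps=10_000):
    """Apply `rules` until the machine enters the halting state."""
    cells = dict(enumerate(tape))  # sparse tape: position -> symbol
    steps = 0
    while state != halt:
        if steps >= max_steps:
            raise RuntimeError("machine did not halt within max_steps")
        symbol = cells.get(head, BLANK)
        write, move, state = rules[(state, symbol)]
        cells[head] = write
        head += move
        steps += 1
    first, last = min(cells), max(cells)
    return "".join(cells.get(i, BLANK) for i in range(first, last + 1)).strip(BLANK)


def increment(bits):
    """Start on the rightmost digit in the 'carry' state."""
    return run_turing_machine(INCREMENT, bits, len(bits) - 1, "carry")


print(increment("1011"))  # 1100
print(increment("111"))   # 1000
```

Nothing in the table mentions numbers. The machine only matches and rewrites marks; reading `1011` as eleven is something we do when we look at the tape.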

In the end, I think that we can’t have it both ways. Either we say that consciousness is intrinsic and irreducible, or we admit that it makes no sense as a product of unconscious mechanisms.

The question of whether the internet could come to life is, to me, only different from the question of whether Pinocchio could become a real boy in that there is a difference in degree. Pinocchio is a three dimensional puppet which is animated through a fourth dimension of time. The puppeteer would add a fifth dimension to that animation, lending their own conscious symbol-grounding to the puppet’s body intentionally. The puppet has no awareness of its own. What is different about an AI is that it would take the fifth dimensional control in-house as it were.

It gets very tricky here, since our human experience has always been with other beings that are self-directed, living, and conscious or aware to some extent. We have no precedent in our evolution for relating to a synthetic entity which is designed explicitly to simulate the responses of a living creature. So far, what we have seen does not, in my opinion, support any fundamental progress. Pinocchio has many voices and outfits now, but he is still wooden. The uncanny valley effect gives us a glimpse into how we are intuitively and aesthetically repulsed by that which pretends to be alive. At this point, my conclusion is that we have no more to fear from technology developing its own consciousness than we have from books beginning to write their own stories. There is, however, a danger of humans abdicating their responsibility to AI systems, and thereby endangering the quality of human life. Putting ‘unpersons’ in charge of the affairs of real people may have dire consequences over time.

“There is no information without representation”

The following passage is from New Empiricism; my rebuttal comes after it.

Information is one of the most poorly defined terms in philosophy but it is a well defined concept in physical theory. How can it be that a clear idea in one branch of knowledge can be murky in another?

The physical meaning of information is succinctly summarised in the Wikibook on “Consciousness Studies”:

“The number of distinguishable states that a system can possess is the amount of information that can be encoded by the system.”

In most cases a “state of a system” boils down to arrangements of objects, either material objects laid out in the world or sequences of objects such as the succession of signals in a telephone line. So information is represented by physical things laid out in space and time. There is no information without this representation as an arrangement of physical objects.
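The Wikibook definition counts distinguishable states. A common way to turn such a count into a number of bits is Hartley’s measure, the base-2 logarithm: a system with N equally distinguishable states can encode log2 N bits. That measure is added here and is not specified by the quoted passage. The short Python sketch below applies it to the kinds of arrangements the passage mentions; the function names and example numbers are illustrative assumptions.

```python
import math


def bits_from_states(num_states):
    """Hartley measure: bits encodable by a system with num_states distinguishable states."""
    if num_states < 1:
        raise ValueError("a system must have at least one state")
    return math.log2(num_states)


def arrangement_states(values_per_position, positions):
    """Distinguishable arrangements of `positions` slots, each holding one of
    `values_per_position` distinguishable values (e.g. boxes with or without a
    steel ball, or successive signal levels on a telephone line)."""
    return values_per_position ** positions


# Eight boxes, each either empty or holding a steel ball: 2**8 = 256 states.
print(bits_from_states(arrangement_states(2, 8)))  # 8.0
# A line carrying one of 4 signal levels in each of 3 time slots: 4**3 = 64 states.
print(bits_from_states(arrangement_states(4, 3)))  # 6.0
```

The measure depends only on how many arrangements can be told apart. It does not care whether the substrate is electrical charge, steel balls or carrots, which is the substrate independence the passage goes on to describe.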

Information can be processed by machines. As an example, computers use the “distinguishable states” of charge in electrical components to perform a host of useful tasks. They use the state of electrical charge in electronic components because charge can be manipulated rapidly and can be impressed on tiny components; however, computers could use the states of steel balls in boxes or carrots flowing on conveyor belts to achieve the same effect, albeit more slowly. There is nothing special about electronic computers beyond their speed, complexity and compactness. They are just machines that contain three-dimensional arrangements of matter.

Philosophers use information in a much less well-defined fashion. Philosophical information is far more fuzzy and involves the quality of things such as hardness or blueness. So how does philosophical blueness differ from a physical information state?

Physical information about the world is a generalised state change that is related to particular events in the world and could be impressed on any substrate, such as steel balls. This allows information to be transmitted from place to place. As an example, a heat sensor in England could trigger a switch that opens a trapdoor that drops a ball that is monitored on a camera that causes changes in charge patterns in a computer that are transmitted as sounds on a radio in the USA. If the sound on the radio makes a cat jump and knock over a vase then it is probably valid to look at the vase and say “it’s hot in England”. So physical information is related to its source by the causal chain of preceding steps. Notice that each of these steps is a physical event, so there is no information without representation as a state in the real world.

In the philosophical idea of information, “hot” or “cold” are particular states in the mind. Our mental states are not uniquely related to the state of the world outside our bodies. As an example, human heat sensors are fickle, so a blindfolded person might contain the state called “cold” when their hand is placed in water at 60°C or in ice water at 0°C. Our “cold” is subjective and does not have a fixed reference point in the world. Our own information is a particular state that could be induced by a variety of events in the world, whereas physical information can be a variety of states triggered by a particular event in the world.

To summarise, information in physics is a state change in any substrate. It can be related to the state change in another substrate if a causal chain exists between the two substrates. Information in the mind is the state of the particular substrate that forms your particular mind.

Your mind is a state of a particular substrate but a “state” is an arrangement of events. The crucial questions for the scientist are “what events?” and “how many independent directions can be used for arranging these events?”. We can tell from our experience that at least four independent axes (or “dimensions”) are involved.

Note

The fact that there is no information without representation of the information as a physical state means that peculiar non-physical claims such as Cartesian Dualism and Dennett’s “logical space” are not credible.

Daniel C Dennett. (1991). Consciousness Explained. Little, Brown & Co. USA. Available as a Penguin Book.

Dennett says: “So we do have a way of making sense of the idea of phenomenal space – as a logical space. This is a space into which or in which nothing is literally projected; its properties are simply constituted by the beliefs of the (heterophenomenological) subject.” Dennett is wrong because if the space contains information then it must be instantiated as a physical entity; if it is not instantiated then it does not exist, and Dennett is simply denying the experience that we all share to avoid explaining it. Either we have simultaneous events or we are just a single point; if we have simultaneous events, the space of our experience exists.

“So information is represented by physical things laid out in space and time.”

Why would physical things ‘represent’ anything though? Without some sensory interpretation that groups such things together so that they appear “laid out in space and time”, who is to say that there could be any ‘informing’ going on?

“computers use the “distinguishable states” of charge in electrical components to perform a host of useful tasks.”

Useful to whom? The beads of an abacus can be manipulated into states which are distinguishable by the user, but there is no reason to assume that this informs the beads, or the physical material that the beads are made of. Computers do not compute to serve their own sense or motives, they are blind, low level reflectors of extrinsically introduced conditions.

“Your mind is a state of a particular substrate but a “state” is an arrangement of events. ”

States and arrangements are not physical because they require a mode of interpretation which is qualitative and aesthetic. Just as there can be no disembodied information, there can be no ‘states’ or ‘arrangements’ which are disentangled from the totality of sensible relations, and from specific participatory subsets therein. Information is a ghost – an impostor which reflects this totality in a narrow quantitative sense which is eternal but metaphysical, and a physical sense which is tangible and present but in which all aesthetic qualities are reduced to a one-dimensional schema of coordinate permutation. Neither information nor physics can relate to each other or represent anything by themselves. It is my view that we should flip the entire assumption of forms and functions as primitively real around, so that they are instead derived from a more fundamental capacity to appreciate sensory affects and participate in motivated effects. The primordial character of the universe can only be, in my view, metaphenomenal, with physics, information, and subjectivity as sensible partitions of the whole.

Why PIP (and MSR) Solves the Hard Problem of Consciousness

The Hard Problem of consciousness asks why there is a gap between our explanation of matter, or biology, or neurology, and our experience in the first place. What is there which even suggests to us that there should be a gap, and why should there be such a thing as experience to stand apart from the functions of that which we can explain?

Materialism only miniaturizes the gap and relies on a machina ex deus (intentionally reversed deus ex machina) of ‘complexity’ to save the day. An interesting question would be: why does dualism seem to be easier to overlook when we are imagining the body of a neuron, or a collection of molecules? I submit that because miniaturization and complexity challenge the limits of our cognitive ability, we find it easy to conflate that sort of quantitative incomprehensibility with the other incomprehensibility being considered, namely aesthetic* awareness. What consciousness does with phenomena which pertain to a distantly scaled perceptual frame is to under-signify them. They become less important, less real, less worthy of attention.

Idealism only fictionalizes the gap. I argue that idealism makes more sense on its face than materialism for addressing the Hard Problem, since material would have no plausible excuse for becoming aware or being entitled to access an unacknowledged a priori possibility of awareness. Idealism, however, fails at commanding the respect of a sophisticated perspective, since it relies on naive denial of objectivity. Why so many molecules? Why so many terrible and tragic experiences? Why so much enduring of suffering and injustice? The thought of an afterlife is too seductive a way to wish this all away. The concept of maya, that the world is a veil of illusion, is too facile to satisfy our scientific curiosity.

Dualism multiplies the gap. Acknowledging the gap is a good first step, but without a bridge, the gap is diagonalized and stuck in infinite regress. In order for experience to connect in some way with physics, some kind of homunculus is invoked, some third force or function interceding on behalf of the two incommensurable substances. The third force requires a fourth and fifth force on either side, and so forth, as in a Zeno paradox. Each homunculus has its own Explanatory Gap.

Dual Aspect Monism retreats from the gap. The concept of material and experience being two aspects of a continuous whole is the best one so far – getting very close. The only problem is that it does not explain what this monism is, or where the aspects come from. It rightfully honors the importance of opposites and duality, but it does not question what they actually are. Laws? Information?

Panpsychism toys with the gap. Depending on what kind of panpsychism is employed, it can miniaturize, multiply, or retreat from the gap. At least it is committing to closing the gap in a way which does not take human exceptionalism for granted, but it still does not attempt to integrate qualia themselves with quanta in a detailed way. Tononi’s IIT might be an exception in that it is detailed, but only from the quantitative end. The hard problem, which involves justifying the reason for integrated information being associated with a private ‘experience’, is still only picked at from a distance.

Primordial Identity Pansensitivity, my candidate for nomination, uses a different approach than the above. PIP solves the hard problem by putting the entire universe inside the gap. Consciousness is the Explanatory Gap. Naturally, it follows serendipitously that consciousness is also itself explanatory. The role of consciousness is to make plain – to bring into aesthetic evidence that which can be made evident. How is that different from what physics does? What does the universe do other than generate aesthetic textures and narrative fragments? It is not awareness which must fit into our physics or our science, our religion or philosophy, it is the totality of eternity which must gain meaning and evidence through sensory presentation.

*Is awareness ‘aesthetic’? That we call a substance which causes the loss of consciousness a general anesthetic might be a serendipitous clue. If so, the term local anesthetic, for an agent which deadens sensation, is another hint about our intuitive correlation between discrete sensations and overall capacity to be ‘awake’. Between sensations (which I would call sub-private) and personal awareness (privacy) there would be a spectrum of nested channels of awareness.

Why Computers Can’t Lie and Don’t Know Your Name

What do the Hangman Paradox, Epimenides Paradox, and the Chinese Room Argument have in common?

The underlying Symbol Grounding Problem common to all three is that from a purely quantitative perspective, a logical truth can only satisfy some explicitly defined condition. The expectation of truth itself being implicitly true (i.e. that it is possible to doubt what is given) is not a condition of truth; it is a boundary condition beyond truth*. All computer malfunctions, we presume, are due to problems with the physical substrate, or the programmer’s code, and not incompetence or malice. The computer, its program, or binary logic in general cannot be blamed for trying to mislead anyone. Computation, therefore, has no truth quality, no expectation of validity or discernment between technical accuracy and the accuracy of its technique. The whole of logic is contained within the assumption that logic is valid automatically. It is an inverted mirror image of naive realism. Where a person can be childish in their truth evaluation, overextending their private world into the public domain, a computer is robotic in its truth evaluation, undersignifying privacy until it is altogether absent.

Because computers can only report a local fact (the position of a switch or token), they cannot lie intentionally. Lying involves extending a local fiction to be taken as a remote fact. When we lie, we know what a computer cannot guess – that information may not be ‘real’.

When we say that a computer makes an error, it is only because of a malfunction on the physical or programmatic level, therefore it is not false, but a true representation of the problem in the system which we receive as an error. It is only incorrect in some sense that is not local to the machine, but rather local to the user, who makes the mistake of believing that the output of the program is supposed to be grounded in their expectations for its function. It is the user who is mistaken.

It is for this same reason that computers cannot intend to tell the truth either. Telling the truth depends on an understanding of the possibility of fiction and the power to intentionally choose the extent to which the truth is revealed. The symbolic communication expressed is grounded strongly in the privacy of the subject as well as the public context, and only weakly grounded in the logic represented by the symbolic abstraction. With a computer, the hierarchy is inverted. A Turing Machine is independent of private intention and public physics, so it is grounded absolutely in its own simulacra. In Searle’s (much despised) Chinese Room Argument – the conceit of the decomposed translator exposes how the output of a program is only known to the program in its own narrow sensibility. The result of the mechanism is simply a true report of a local process of the machine which has no implicit connection to any presented truths beyond the machine…except for one: Arithmetic truth.

Arithmetic truth is not local to the machine, but it is local to all machines and all experiences of correct logical thought. This is an interesting symmetry, as the logic of mechanism is both absolutely local and instantaneous and absolutely universal and eternal, but nothing in between. Every computed result is unique to the particular instantiation of the machine or program, and universal as a Turing-emulable template. What digital analogs are not is true or real in any sense which relates expressly to real, experienced events in spacetime. This is the insight expressed in Korzybski’s famous maxim ‘The map is not the territory.’ and in the Use-Mention distinction, where using a word intentionally is understood to be distinct from merely mentioning the word as an object to be discussed. For a computer, there is no map-territory distinction. It’s all one invisible, intangible mapitory of disconnected digital events.

By contrast, a person has many ways to voluntarily discern territories and maps. They can be grouped together, such as when the acoustic territory of sound is mapped to the emotional-lyric territory of music, or the optical territory of light is mapped as the visual territory of color and image. They can be flipped so that the physics is mapped to the phenomenal as well, which is how we control the voluntary muscles of our body. For us, authenticity is important. We would rather win the lottery than just have a dream that we won the lottery. A computer does not know the difference. The dream and the reality are identical information.

Realism, then, is characterized by its opposition to the quantitative. Instead of being pegged to the polar austerity of the autonomously local plus the explicitly universal, consciousness ripens into the tropical fecundity of the middle range. Physically real experience is in direct contrast to digital abstraction: it is semi-unique, semi-private, semi-spatiotemporal, semi-local, semi-specific, and semi-universal. Arithmetic truth lacks any non-functional qualities, so using arithmetic to falsify functionalism is inherently tautological. It is like asking an armless man to raise his hand if he thinks he has no arms.

Here’s some background stuff that relates:

The Hangman Paradox has been described as follows:

A judge tells a condemned prisoner that he will be hanged at noon on one weekday in the following week but that the execution will be a surprise to the prisoner. He will not know the day of the hanging until the executioner knocks on his cell door at noon that day.

Having reflected on his sentence, the prisoner draws the conclusion that he will escape from the hanging. His reasoning is in several parts. He begins by concluding that the “surprise hanging” can’t be on Friday, as if he hasn’t been hanged by Thursday, there is only one day left, and so it won’t be a surprise if he’s hanged on Friday. Since the judge’s sentence stipulated that the hanging would be a surprise to him, he concludes it cannot occur on Friday.

He then reasons that the surprise hanging cannot be on Thursday either, because Friday has already been eliminated and if he hasn’t been hanged by Wednesday night, the hanging must occur on Thursday, making a Thursday hanging not a surprise either. By similar reasoning he concludes that the hanging can also not occur on Wednesday, Tuesday or Monday. Joyfully he retires to his cell confident that the hanging will not occur at all.

The next week, the executioner knocks on the prisoner’s door at noon on Wednesday, which, despite all the above, was an utter surprise to him. Everything the judge said came true.
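The prisoner's argument is a backward elimination, and it can be replayed mechanically. The sketch below is a minimal illustration under my own assumptions: the names WEEKDAYS and prisoner_argument are hypothetical, and the code models only the prisoner's reasoning as quoted, not the judge's choice or any resolution of the paradox.

```python
# Hypothetical sketch: replays the prisoner's backward-elimination argument
# from the quoted passage. It models his reasoning, not the judge's choice.
WEEKDAYS = ["Monday", "Tuesday", "Wednesday", "Thursday", "Friday"]


def prisoner_argument(days: list[str]) -> list[str]:
    """Return the days in the order the prisoner rules them out.

    At each step he assumes he has survived every day before the last
    remaining candidate; a hanging on that final candidate would then be
    expected, so by the judge's "surprise" condition it is struck off.
    """
    candidates = list(days)  # copy, so the caller's list is untouched
    ruled_out = []
    while candidates:
        # The latest remaining day is the one he could not be surprised by.
        ruled_out.append(candidates.pop())
    return ruled_out


order = prisoner_argument(WEEKDAYS)
print(order)  # ['Friday', 'Thursday', 'Wednesday', 'Tuesday', 'Monday']
print(f"Days left after the argument: {len(WEEKDAYS) - len(order)}")  # 0
```

Every day gets eliminated, yet nothing in the loop refers to what the prisoner actually expects on Wednesday: "surprise" enters only as a rule applied mechanically, which is the assumption questioned in point 1).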

1) The conclusion “I won’t be surprised to be hanged Friday if I am not hanged by Thursday” creates another proposition to be surprised about. By leaving the condition of ‘surprise’ open ended, it could include being surprised that the judge lied, or any number of other soft contingencies that could render an ‘unexpected’ outcome. The condition of expectation isn’t an objective phenomenon, it is a subjective inference. Objectively, there is no surprise since objects don’t anticipate anything.

2) If we want to close in tightly on the quantitative logic of whether deducibility can be deduced, consider five coin flips and a certainty that one will be heads: each successive tails raises the odds that the next of the remaining flips will be heads. The fifth coin will either be 100% likely to be heads, or will prove that the certainty assumed was 100% wrong.
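The arithmetic in point 2) can be checked exactly. This is a minimal sketch assuming five fair, independent coins, with the "certainty" treated as conditioning on at least one heads; the function name p_next_heads is hypothetical. It enumerates all 32 equally likely sequences and computes the probability that the next flip is heads after a run of tails.

```python
from fractions import Fraction
from itertools import product


def p_next_heads(total_flips: int, tails_so_far: int) -> Fraction:
    """P(next flip is heads | first `tails_so_far` flips were tails and at
    least one of the `total_flips` is heads), by exact enumeration."""
    favourable = 0
    possible = 0
    for seq in product("HT", repeat=total_flips):
        # Discard sequences that violate the assumed certainty (no heads).
        if "H" not in seq:
            continue
        # Discard sequences inconsistent with the tails observed so far.
        if any(flip != "T" for flip in seq[:tails_so_far]):
            continue
        possible += 1
        if seq[tails_so_far] == "H":
            favourable += 1
    return Fraction(favourable, possible)


for t in range(5):
    print(f"after {t} tails: P(next is heads) = {p_next_heads(5, t)}")
```

The printed values are 16/31, 8/15, 4/7, 2/3 and 1. With n flips remaining, the probability is 2^(n-1)/(2^n - 1), which rises with each tails until the fifth flip, where the assumed certainty leaves no alternative.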

I think the paradox hinges on 1) the false inference of objectivity in the use of the word ‘surprise’ and 2) the false assertion of omniscience by the judge. It’s like an Escher drawing. In real life, surprise cannot be predicted with certainty, and the quality of unexpectedness is not an objective thing, just as expectation is not an objective thing.

Connecting the dots, expectation, intention, realism, and truth are all rooted in the firmament of sensory-motive participation. To care about what happens cannot be divorced from our causally efficacious role in changing it. It’s not just a matter of being petulant or selfish. The ontological possibility of ‘caring’ requires letters that are not in the alphabet of determinism and computation. It is computation which acts as punctuation, spelling, and grammar, but not language itself. To a computer, every word or name is as generic as a number. Computers can store the string of characters that belongs to what we call a name, but they have no way to really recognize to whom that name belongs.

*I maintain that what is beyond truth is sense: direct phenomenological participation.

Notes on Privacy

The debates on privacy which have been circulating since the dawn of the internet age tend to focus either on the immutable rights of private companies to control their intellectual property or on the obsolescence of the notion that actual people can control access to their personal information. There’s an interesting hypocrisy there, as the former rights are represented as pillars of civilized society and the latter expectations as quaint but irrelevant luxuries of a bygone era.

This double standard aside, the issue of privacy itself is never discussed. What is it, and how do we explain its existence within the framework of science? To me, the term privacy, as applied to physics, is in some ways more useful than consciousness. When we talk about private information being leaked or made public, we really mean that the information can now be accessed by unintended private parties. There is really no scientific support for the idea of a truly ‘public’ perspective ontologically. All information exists only within some interpreter’s sensory input and information processing capacity. While few would argue that there is no universe beyond our human experience of it, who can say that there is no universe beyond *any* experience of it? Just because I don’t see it doesn’t mean it doesn’t look like something, but if nothing can see it, can we really say that it looks like something?

Privacy would be more of a problem for theoretical physics than it is for internet users, if physicists were to try to explain it. It is through the problems which have arisen with the advent of widespread computation that we can glimpse the fundamental issue with our worldview and with our legacy understanding of its physics. With identity theft, pirated software, appropriated endorsements, data mining, and now PRISM, it should be obvious that technology is exposing something about privacy itself which was not an issue before.

The physics of privacy that I propose suggests that by making our experiences public through a persistent medium, we are trading one kind of entropy for another. When we express an aspect of our private life into a public network, the soft, warm blur of inner sense is exposed to the cold, hard structure of outer knowledge. It is an act which is thermodynamically irreversible, a fact which politicians seem slow to understand, since the cover-up invariably seems to be the easier transgression to discover and prove. The cover-up alerts us to the initial crime, and also suggests knowledge of guilt and the criminal intent to conceal that guilt. The same thing undoubtedly occurs on a personal level: the subjects most threatening to people’s marriages and careers are probably those which can be found by searching for purging behavior and keywords related to embarrassment.

As the high-entropy fuzziness of inner life is frozen into the low-entropy public record, a new kind of entropy over who can access this record is introduced. Security issues stem from the same source as both IP law issues and surveillance issues. The abilities to remain anonymous, to expose anonymity, to spoof identifiers leading to identification, etc., are all examples of the shadow of private entropy cast into the public realm. There’s no getting around it. Identity simply cannot be pinned down 100%; that kind of personal entropy can only be silenced personally. Only we know for sure that we are ourselves, and that certainty, that primordial negentropy, is the only absolute which we can directly experience. Descartes’s cogito is a personal statement of that absolute certainty (Je pense, donc je suis: I think, therefore I am), although I would say that he was too narrow in identifying thought in particular as the essence of subjectivity. Indeed, thinking is not something that we notice until we are a few years old, and it can be backgrounded in our awareness through a variety of techniques. I would say instead that it is the sense of privacy which is the absolute: solace, solitude, solipsism, the sense of being apart from all that can be felt, seen, known, and done. There is a sense of a figurative ‘place’ in which ‘we’ are, which is separate from and untouchable by anything public.

This sense seems to be corroborated by neuroscience as well, since no instrument of public discovery seems to be able to find this place. I don’t see this as anything religious or mystical (though religion and mysticism do seek to explain this sense more than science has), but rather as evidence that our understanding of physics is incomplete until we can account for privacy. Privacy should be understood as something which is as real as energy or matter; in fact, it should be understood as that which divides the two and discerns the difference. Attention to reveal, intention to reveal or conceal, and the oscillation among the three are at the heart of all identity, from human beings to molecules. The control of uncertainty, through camouflage, pretending, and outright deception, has been an issue in biology almost from the start. Before biology, concealment seems to have been limited to unintentional circumstances of placement and obstruction, although that could be a limitation of our perception as well. Since what we can see of another’s privacy may never be what it appears, it stands to reason that our own privacy may never be able to play the role of impartial public observer. Privacy is made of bias, and that bias is the relativistic warping of perception itself.

Privacy and Social Media

Continuing with the idea of information entropy as it relates to privacy, social media acts as a laboratory for these kinds of issues. Before Facebook, the notion of friendship floated on a cushion of consensual entropy: politeness. As the song goes, “Don’t ask me what I think of you, I might not give the answer that you want me to.” Whom one considered a friend was largely a subjective matter with high public entropy. Even when declaring friendship openly, there was no binding agreement, and it was effortless for sociable people to retain many asymmetric relations. Politeness has always been part of the security apparatus of those who are powerful or popular. Nobility and politeness have a curious relation, as the well-heeled are expected to embody exemplary breeding but also have license to employ rudeness and blunt honesty at will. The haughtiness of high position is one of reserving one’s own right to expose others’ faults while being protected from others’ ability to do the same.

Facebook, while not the first social network to employ a structure of friendship granting, has made the most out of it. From the start, the agenda of Facebook has been to neutralize the power of politeness and to encourage public declaration of friendship as a binding, binary statement – yes you are my friend or (no response). Unfriending someone is a political act which can have real implications. Even failing to respond to someone’s friend request can have social currency. The result is a tacit bias toward liberal friending policies, and a consequent need for filtering to control who are treated as friends and who are treated as potential friends, tolerated acquaintances, frienemies, etc. Google Plus offers a more explicit system for managing this non-consensual social entropy to more conveniently permit social asymmetry.

Twitter has wound up playing an unusual role in which the privacy of elites is protected in one sense and exposed in another. Unlike on other social networks, the 140-character limit on tweets, which came from the desire to make Twitter compatible with SMS, has the unintended consequence of providing a very fast stream that demands a low investment of attention. For a celebrity who wants to retain their popularity and relevance, it is an ideal way to keep in touch with large numbers of fans without the expectation of social involvement that is implied by a richer communication system. It gives back some of the latitude which Facebook takes away: you don’t have friends on Twitter, you have Followers. It is not considered much of a slight not to follow someone back, and it is not considered a threat to follow someone that you don’t know. In a way, Twitter makes controlled stalking acceptable, just as Facebook makes being nosy about someone’s friends acceptable.

Hacktivist as hero, villain, genius, and clown

There is more than enough that has been written on the subject of the changing attitudes toward technoverts (geeks, nerds, dorks, dweebs, et al.) over the last three decades, but the most contentious figure to come out of the computer era has been the one who is skilled at wielding the power to reveal and conceal. Early on, in movies like Wargames and The Net, there was a sense of support for the individual underdog against the impersonal machine. Even R2-D2 in the original Star Wars played David to the Death Star’s Goliath computer while connecting to it secretly. The tide began to turn, it seems, in the wake of Napster, which unleashed a worldwide celebration of music sharing, to the horror of those who had previously enjoyed a monopoly over the distribution of music. Since then, names like Anonymous, Assange, and Snowden have aroused increasingly polarized feelings.