Archive

I Think Therefore I Am?

The only thing that can be verified 100% to exist is your own consciousness (“I think, therefore I am”). Does this affect/change your own beliefs in any way, and how so?

In a way it is true that our consciousness is the only thing that we can verify 100%, however, that way of looking at it may itself not be 100% verifiable. Since cognition is only one aspect of our consciousness, we don’t know if the way that ‘our’ consciousness seems to that part of ‘us’ is truly limited to personal experience or whether it is only the tip of the iceberg of consciousness.

The nature of consciousness may be such that it supplies a sense of limitation and personhood which is itself permeable under different states of consciousness. We may be able to use our consciousness to verify conditions beyond its own self-represented limits, and to do so without knowing how we are able to do it. If we imagine that our consciousness when we are awake is like one finger on a hand, there may be other ‘fingers’ parallel to our own which we might call our intuition or subconscious mind. All of the fingers could have different ways of relating to each other as separate pieces while at the same time all being part of the same ‘hand’ (or hand > arm > body).

With this in mind, Descartes’ cogito “I think therefore I am” could be re-phrased in the negative to some extent. The thought that it is only “I” who is thinking may not be quite true, and all of our thoughts may be pieces to a larger puzzle which the “I” cannot recognize ordinarily. It still cannot be denied that there is a thought, or an experience of thinking, but it is not as undeniable that we are the “I” that “we” think we are.

The modern world view is, in many ways, the legacy of Cartesian doubt. Descartes has gotten a bad rap, ironically due in part to the success of his opening the door to purely materialistic science. Now, after 400 years of transforming the world with technology, it seems prehistoric to many to think in terms of a separate realm of thoughts which is not physical. Descartes does not have the opportunity to defend himself, so his view is an easy target – a straw man even. When we update the information that Descartes had, however, we might see that Cartesian skepticism can still be effective.

Some things which Descartes didn’t have to draw upon in constructing his view include:

1) Quantum Mechanics – QM shifted microphysics from a corpuscular model of atoms to one of quantitative abstractions. Philosophically, quantum theory is ambiguous in both its realism/anti-realism and nominalism/anti-nominalism. Realism starts from the assumption that there are things which exist independently of our awareness of them, while nominalism considers abstract entities to be unreal.

- Because quantum theory is the base of our physics, and physics precedes our biology, quantum mechanics can be thought of as a realist view. Nature existed long before human consciousness did, and nature is composed of quantum functions. Quantum goes on within us and without us.

- Because quantum has been interpreted as being at least partially dependent on acts of detection (e.g. “Experiment confirms quantum theory weirdness”), it can be considered an anti-realist view. Unlike classical objects, quantum phenomena are subject to states like entanglement and superposition, making them more like sensory events than projectiles. Many physicists have emphatically stated that the fabric of the universe is intrinsically participatory rather than strictly ‘real’.

- Quantum theory is nominalist in the sense that it removes the expectation of purpose or meaning in arithmetic. “Shut up and calculate.” is a phrase* which illustrates the nominalist aspects of QM to me; the view is that it doesn’t matter whether these abstract entities are real or not, just so long as they work.

- Quantum theory is anti-nominalist because it shares the Platonic view of a world which is made up of perfect essences – phenomena which are ideal rather than grossly material. The quantum realm is one which can be considered closer to Kant’s ‘noumena’ – the unexperienced truth behind all phenomenal experience. The twist in our modern view is that our fundamental abstractions have become anti-teleological. Because quantum theory relies on probability to make up the world, instead of a soul as a ghost in the material machine, we have a machine of ghostly appearances without any ghost.

To some, these characteristics when taken together seem contradictory or incomprehensible…mindless mind-stuff or matterless matter. To others, the philosophical content of QM is irrelevant or merely counter-intuitive. What matters is that it makes accurate predictions, which makes it a pragmatic, empirical view of nature.

2) Information Theory and Computers

The advent of information processing would have given Descartes something to think about. Being neither mind nor matter, or both, the concept of ‘information’ is often considered a third substance or ‘neutral monism’. Is information real though, or is it the mind treating itself like matter?

Hardware/software relation

This metaphor gets used so often that it is now a cliche, but the underlying analogy has some truth. Hardware exists independently of all software, but the same software can be used to manipulate many different kinds of hardware. We could say that software is merely our use of hardware functions, or we could say that hardware is just nature’s software. Either way there is still no connection to sensory participation. Neither hardware nor software has any plausible support for qualia.

Absent qualia

Information, by virtue of its universality, has no sensory qualities or conscious intentions. It makes no difference whether a program is executed on an electronic computer or a mechanical computer of gears and springs, or a room full of people doing math with pencil and paper. Information reduces all descriptions of forms and functions to interchangeable bits, so the same information processes would have to be the same regardless of whether there were any emergent qualities associated with them. There is no place in math for emergent properties which are not mathematical. Instead of a ‘res cogitans’ grounded in mental experience, information theory amounts to a ‘res machina’…a realm of abstract causes and effects which is both unextended and uninhabited.

The receding horizon of strong AI

If Descartes were around today, he might notice that computer systems which have been developed to work like minds lack the aesthetic qualities of natural people. They make bizarre mistakes in communication which remind us that there is nobody there to understand or care about what is being communicated. Even though there have been improvements in the sophistication of ‘intelligent’ programs, we still seem to be no closer to producing a program which feels anything. To the contrary, when we engage with AI systems or even CGI games, there is an uncanny quality which indicates a sterile and unnatural emptiness.

Incompleteness, fractals, and entropy

Gödel’s incompleteness theorems formalized a paradox which underlies formal systems – that in any consistent formal system rich enough to express arithmetic, there are always true statements which cannot be proved within that system. This introduces a kind of nominalism into logic – a reason to doubt that logical propositions can be complete and whole entities. Douglas Hofstadter wrote about strange loops as a possible source of consciousness, citing complexity of self-reference as a key to the self. Fractal mathematics has been used to graphically illustrate some aspects of self-similarity or self-reference, and some, like Wai H Tsang, have proposed that the brain is a fractal.

The work of Turing, Boltzmann, and Shannon treats information in an anti-nominalist way. Abstract data units are considered to be real, with potentially measurable effects in physics via statistical mechanics and through the concept of entropy. The ‘It from Bit’ view described by Wheeler is an immaterialist view that might be summed up as “It computes, therefore it is.”

3) Simulation Triumphalism

Disneyland

When Walt Disney produced full length animated features, he employed the techniques of fine art realism to bring completely simulated worlds to life in movie theaters. For the first time, audiences experienced immersive fantasy which featured no ‘real’ actors or sets. Disney later extended his imaginary worlds across the Cartesian divide to become “real” places, physical parks which are constructed around imaginary themes, turning the tables on realism. In Disneyland, nature is made artificial and artifice is made natural. Audio-Animatronic robots populate indoor ‘dark rides’ where time can seem to stop at midnight even in the middle of a summer day.

Video games

The next step in the development of simulacra culture took us beyond Hollywood theatrics and naturalistic fantasy. Arcade games featured simulated environments which were graphically minimalist. The simulation was freed from having to be grounded in the real world at all and players could identify with avatars that were little more than a group of pixels.

Video, holographic, and VR technologies have set the stage for acceptance of two previously far-fetched possibilities. The first possibility is that of building artificial worlds which are constructed of nothing but electronically rendered data. The second possibility is that the natural world is itself such an illusion or simulation. This echoes Eastern philosophical views of the world as illusion (maya) as well as being a self-reflexive pattern (Jeweled Net of Indra). Both of these are suggested by the title of the movie The Matrix, which asks whether being able to control someone’s experience of the world means that they can be controlled completely.

The Eastern and Western religious concepts overlap in their view of the world as a Matrix-like deception against a backdrop of eternal life. The Eastern view identifies self-awareness as the way to control our experience and transcend illusion, while the Abrahamic religions promise that remaining devoted to the principles laid down by God will reveal the true kingdom in the afterlife. The ancients saw the world as unreal because the true reality can only be God or universal consciousness. In modern simulation theories, everything is unreal except for the logic of the programs which are running to generate it all.

4) Relativity

Einstein’s Theory of Relativity went a long way toward mending the Cartesian split by showing how the description of the world changes depending upon the frame of reference. Previously fixed notions of space, time, mass, and energy were replaced by dynamic interactions between perspectives. The straight, uniform axes of x, y, z, and t were traded for a ‘reference-mollusk’ with new invariants, such as the spacetime interval and the speed of light (c). The familiar constants of Newtonian mechanics and Cartesian coordinates were warped and animated against a tenseless, non-Euclidean space with no preferred frame of reference.
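
To make the spacetime interval concrete, here is its standard form for two observers in inertial frames (special relativity). The sign convention and the primed-frame notation are my own assumptions for illustration; the text above does not specify them.

```latex
s^2 = -c^2\,\Delta t^2 + \Delta x^2 + \Delta y^2 + \Delta z^2
    = -c^2\,\Delta t'^2 + \Delta x'^2 + \Delta y'^2 + \Delta z'^2
```

Two observers in relative motion will generally disagree about the elapsed time and the distance between two events taken separately, yet they compute the same value of s². What is fixed is the relation between the perspectives rather than any single perspective.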

Even before quantum mechanics introduced a universe built on participation, Relativity had punched a hole in the ‘view from nowhere’ sense of objectivity which had been at the heart of the scientific method since the 17th century. Now the universe required us to pick a point within spacetime and a context of physical states to determine the appearance of ‘objective’ conditions. Descartes’ extended substance had become transparent in some sense, mimicking the plasticity and multiplicity of the subjective ‘thinking substance’.

5) Neuroscience

Descartes would have been interested to know that his hypothesis of the seat of consciousness being the pineal gland had been disproved. People have had their pineal glands surgically removed without losing consciousness or becoming zombies. The advent of MRI technology and other imaging also has given us a view of the brain as having no central place which acts as a miniature version of ourselves. There’s no homunculus in a theater looking out on a complete image stored within the brain. There is also no hint of dualism in the brain as far as a separation between how and where fantasy is processed. To the contrary, all of our waking experiences seamlessly fuse internal expectations with external stimuli.

Neuroscience has conclusively shattered our naive realism about how much control we have over our own mind. Benjamin Libet’s experiments showed that by the time we think that we are making a decision, prior brain activity could be used to predict what the decision would be. With perceptual tests we have shown that our experience of the real world not only contains glaring blind spots and distortions but that those distortions are masked from our direct inspection. Perception is incomplete; however, that is no reason to conclude that it is an illusion. We still cannot doubt the fact of perception, only that in the complex kind of perception that a human being has, there are opportunities for conflicts between levels.

Neuroscientific knowledge has also opened up new appreciation for the mystery of consciousness. Some doctors have studied Near Death Experiences and Reincarnation reports. Others have talked about their own experiences in terms which suggest a more mystical presence of universal consciousness than we have imagined. Slowly the old certainties about consciousness in medicine are being challenged.

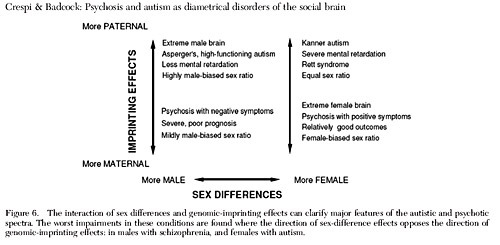

6) Psychology

Psychology has developed a model of mental illness which is natural rather than supernatural. Conditions such as schizophrenia and even depression are diagnosed and treated as neurological disorders. The use of brain-changing drugs, both medically and recreationally, has given us new insights into the specificity of brain function. Modern psychology has questioned earlier ideas such as Freud’s Id, Ego, and Superego, and the monolithic “I” before that, so that there are now understood to be many neurochemical roles and systems which contribute to making “us”.

To Descartes’ Cogito, the contemporary psychologist might ask whether the I refers to the sense of an inner voice who is verbalizing the statement, or to the sense of identification with the meaning of the concept behind the words, etc.

In all of the excitement of mapping mental symptoms to brain states, some of the most interesting work in psychology has languished. William James, Carl Jung, Piaget, and others presented models of the psyche which were more sympathetic to views of consciousness as a continuum or spectrum of conscious states. Because it shifts the focus away from first hand accounts and toward medical observation, some have criticized the neuroscientific influence on psychology as a pseudoscience like phrenology. The most important part of the psyche is overlooked, and patients are reduced to sets of correctable symptoms.

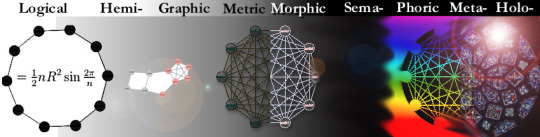

7) Semiotics

Perhaps the most underappreciated contribution on this list is that of semioticians such as C.S. Peirce and de Saussure. Before electronic computing was even imagined, they had begun to formalize ideas about the relation between signs and what is signified. Instead of a substance dualism of mind and matter, semiotic theories introduced triadic formulations such as between signs, objects, and concepts.

Baudrillard wrote about levels of simulation or simulacra, in which a basic reality is first altered or degraded, then that alteration is masked, then finally separated from any reality whatsoever. Together, these notions of semiotic triads and levels of simulation can help guide us away from the insolubility of substance dualism. Reality can be understood as a signifying medium which spans mind-like media and matter-like media. Sense and sense-making can be reconciled without inverting it as disconnected ‘information’.

8) Positivism & Post-Modernism

The certainty which Descartes expressed as a thinker of thoughts can be seen to dissolve when considered in the light of 20th century critics. Heavily criticized by some, philosophers such as Wittgenstein, Derrida, and Rorty continue to be relevant to undermining the incorrigibility of consciousness. The Cogito can be deconstructed linguistically until it is meaningless or nothing but the product of the bias of language or culture. Under Wittgenstein’s Tractatus, the Cogito can be seen as a failure of philosophy’s purpose in clarifying facts, thereby deflating it to an empty affirmation of the unknowable. Since, in his words “Whereof one cannot speak, thereof one must be silent.” we may be compelled to eliminate it altogether.

What logical positivism and deconstructivism do with language to our idea of consciousness is like what neuroscience does through medicine; they demand that we question even the most basic identities and undermine our confidence in the impartiality of our thoughts. In a sense, it is an invitation for a cross-examination of ourselves as our own prosecution witness.

Wilfrid Sellars’ attack on the Myth of the Given sees statements such as the Cogito as forcing us to accept a contradiction where sense-data (such as “I think”) are accepted as a priori facts, but justified beliefs (“therefore I am”) have to be acquired. How can consciousness be ‘given’ if understanding is not? This would seem to point to consciousness as a process rather than a state or property. This, however, fails to account for lower levels of consciousness which might be responsible for even the micro level processing.

In my view, logic and language based arguments against the incorrigibility of consciousness fail because they overlook their own false ‘given’, which is that symbols can literally signify reality. In fact, symbols have no authority or power to provide meaning, but instead act as a record for those who intend to preserve or communicate meaning.

An updated Cogito

“I think, therefore I am at least what a thinker thinks is a thinker.”

Rather than seeing Cartesian doubt as only a primitive beginning to science, I think it makes sense to try to pick up where he left off. By adding the puzzle pieces which have been acquired since then, we might find new respect for the approach. Relativism itself may be relative, so that we need not be compelled to deconstruct everything. We can consider that our sense of deconstruction and solipsism as absurd may be well founded, and that just because our personal intuition is often flawed does not mean that knee-jerk counter-intuition is any better.

With that in mind, is the existence of the “I” really any more dubious than a quark or a rainbow? Does it serve us to insist upon rigid designations of ‘real’ vs ‘illusion’ in a universe which has demonstrated that its reality is more like illusion? At the same time, does it serve us to deny that all experiences are in some sense ‘real’, regardless of their being ineffable to us now?

*attributed to David Mermin, Richard Feynman, or Paul Dirac (depending on who you ask)

Modality Independence

http://en.wikipedia.org/wiki/Origin_of_speech

“A striking feature of language is that it is modality-independent. Should an impaired child be prevented from hearing or producing sound, its innate capacity to master a language may equally find expression in signing […]

This feature is extraordinary. Animal communication systems routinely combine visible with audible properties and effects, but not one is modality independent. No vocally impaired whale, dolphin or songbird, for example, could express its song repertoire equally in visual display.”

This would be hard to explain if consciousness were due to information processing, as we would expect all communication to share a common logical basis. The fact that only human language is modality invariant suggests that communication, as an expression of consciousness, is local to aesthetic textures rather than information-theoretic configurations.

Since only humans have evolved to create an abstraction layer that cuts across aesthetic modalities, it would appear that between aesthetic modality and information content, aesthetic modality is the more fundamental and natural phenomenon. Information is derived from conscious presentation, not the other way around.

Semiotics: What are the implications of the Saussurian sign (signifier/signified) for a theory of meaning?

In my theory of meaning, Saussurian concepts of signifier and signified are a good start, but I propose a fundamental change. In his answer, Keith Allpress offers:

here is where I think we stand:

Shannon removed content from meaning but using bits.

Saussure claimed that language creates meaning.

and points out the limitations of post-modern/relativistic/deconstructionist approaches. I would say that the computationalist approach is similarly limited, in that there is no compelling reason that ‘it from bit’ should apply to all aspects of meaning. I think that what is missing from these two approaches is the same thing, only seen from opposite sides. To understand more about that thing, we can begin by asking:

“What cares about the difference?”

I think where Saussure and modern semiotics in general went too far is in presuming representation without presentation. The error of the computationalist view is even more subtle, as it presumes presentation as an emergent property, thereby taking it outside of the realm of science, but without admitting it. To me, this is a very seductive but misguided approach which leads directly to the Emperor’s emergent clothes.

Taking the term ‘signifier’, we can crack the kernel of truth that semiotics-as-cosmology is based on. Just as it is not incorrect to call someone who is driving a car a ‘driver’, neither is driver a complete description of the role of human beings in the world. What is missing? What *cares* that something is missing? What fills the gap is what I call aesthetic participation, or sensory-motive presence. In my view, before ‘information’ (a difference that makes a difference per Bateson) or sign, there must be the raw sensitivity to detect and interpret such ‘differences’ or ‘signs’ and to *care* about those differences. What we have done, by reifying patterns as objectively real things which are recognized, or de-realizing things as subjectively constructed patterns, is to void the existence of sense and sense-making itself.

Not to get too cheeky, but what I propose is that beneath Bateson’s adage is a deeper context from which information and signs emerge: an aesthetic phenomenon which likes its own likeness by making its own differences. I call this primordial pansensitivity, or ‘sense’ and the particular quality of appreciation that it cares about I call ‘significance’. Significance cannot be automated, it must be earned directly through intimate acquaintance. It may sound like I am talking about human intimacy here, but I mean nothing of the sort. By acquaintance (stealing that word from Chalmers), I mean sensory-motive encounters on a fundamental level: before humans, before biology, and before even matter. The universe has to make sense before anything can make sense of it.

The aesthetic agenda is purely hedonistic. It is to develop ever richer textures and modalities of appreciation. While the universe is replete with repeating patterns, it never seems to repeat its particular, proprietary holons. A whirlpool, hurricane, and galaxy all share the same unmistakable topology, but nobody would mistake one for the other. Not just the scale but everything that constitutes their appearance and role in the universe is different. In calling the universe signs or bits we are losing the appreciation and proprietary character. The unique and worthwhile becomes generic and inevitable. It ultimately is to make meaning meaningless.

Names (representations) can be related to each other in ways that nature (presentations) cannot be. The equal sign is itself a name for one of these relations. In nature nothing can be absolutely equal to anything else. All of nature is unrepeatably unique in a literal sense, but will seem to be made of repetition and variation from any particular perspective within it. In this way, the postmodernists are right. We have only the presence of our own ability to feel that can be known absolutely as it is. Everything else that exists for us, within our individually customized experience has some degree of approximation/representation.

What makes this even more complicated and confusing is that there are different levels of sense-making which can contradict each other. We would like to think of signs as simply a case of dictionary definitions where signs literally signify what we expect they should signify. Even the identity principle of A = A is subject to a deeper degree of expectation about what A and = mean in different contexts. We can look at a surreal painting and say ‘that is a painting of something impossible’, but it is only our expectation that the paint shapes refer to something other than themselves which is being misled. What surrealism signifies is not ‘real’, but neither is it nothing.

Where the computationalists are right is in seeing the uniformity of arithmetic principles across all phenomena which can be measured. Reducing all transactions to bits obviously has been tremendously transformative in this century. By banishing the aesthetic qualities (qualia) to an emergent never-never land, however, we have been seduced by the representation of measure (quanta). Simulation-type theories now abound, in which the entire history of human experience (including the development of science, but shh…) is marginalized as a confabulation/illusion/model and the only true reality is one which can never be contacted in any way except through theoretical abstraction. We either live in an unreal world, or the world which we now think is real is not the one that we actually live in. We are being asked to believe that meaning is meaningless and that the only alternative to solipsism is a kind of ‘nilipsism’* in which even our ennui is yet another meaningless function of the program.

To turn the page on this era of de-presentation**, I suggest that we look at the roots of semiotics more deeply, and recognize that signs themselves depend upon a deeper context of sensation and sense-making which goes beyond even physics or human experience.

*a word I made up to describe the philosophy that the self (ipse) must be reduced to a non-entity.

**another neologism that I use to refer to what Raymond Tallis calls the ‘Disappearance of Appearance’…the overlooking of the phenomenon of aesthetic presence itself.

Syzygy Integrals and Other Neoquantisms

Syzygy Integral

Syzygy Integral with labels

When applying the syzygy integral to a sense modality such as vision, the Δæ would refer to the difference in the microphenomenal qualities, such as pixel hue, saturation, value, or contrast/edge detection, etc.: the entire palette of what I would call entoptic or generic visual encounters. As shown in optical illusions, these elemental graphic features depend on their surrounding context, and two pixels or shaded regions which are measured to be optically identical can be perceived quite differently.

For this reason and others, I suggest that the fundamental nature of all phenomena is definable not in terms of specific properties, but in terms of a pseudo-specific quorum of detectable differences. It looks like a lighter grey on the bottom because of the adjacent contrasts, and it is my conjecture that this kind of pseudo-specificity is at the heart of all measurements, particularly those which we have used to define subatomic particles.

On the top of the integral, the ∇Æ would refer to an entirely different, top-down mode of visual perception. Instead of a delta (Δ) to stand for the difference of generic micro-phenomenal qualia, the nabla symbol (∇) is used to stand for a divergence from a larger perceptual context. This relates to the binding problem, i.e., when we see two dogs walk behind the same fence, we do not perceive them as becoming the same dog – the narrative continuity does for our overall understanding what the ‘illusory’ plasticity does on a microphenomenal level. To see the ) as a smile in the emoticon : – ) requires both a low level fudging of pixels into a curve, as well as the ability for our expectation of a face to be projected from the top down. The emoticon is a minimalist example, but a better example would be something like this:

Terms like pareidolia, apophenia, simulacra, and eidetic hallucination all have in common this potential to misread a more proprietary, macrophenomenal text on top of a relatively generic, microphenomenal context.

What the syzygy integral is supposed to model is that any given sense modality is a special kind of integration between top-down or holotrophic orientation and bottom-up, entropic orientation. In the case of visual sense, the top-down images are encountered like those in a Rorschach inkblot, as endless wells of imaginative psychosexual association. The personal range of the psyche is here encountering influences from the super-personal range of the overall presence of this moment in relation to their lives, and their lives in relation to eternity.

The bottom-up ‘entoptic’ confabulation (entoptic hallucinations are those which are geometric designs, etc., as opposed to eidetic hallucinations, which are images such as specific faces) is where the personal psyche encounters the sub-personal influence of neurological, biological, and chemical events as it impinges on them visually. An entoptic hallucination presumably maps much more directly to neurochemical patterns in the visual cortex, whereas the eidetic, storytelling hallucinations would be much more obscure and proprietary. A hallucination of Darth Vader or Dick Cheney might be hard to tell apart from looking at an fMRI, but it should not be so difficult to get a fix on zig zag patterns vs concentric circles, etc.

The syzygy integral of vision then would be this continuum between the sub-phenomenal adhesive that holds the graphic canvas together and the cohesive that renders the meta-phenomenal meanings and figures phenomenally visible. It’s not an ordinary integral, since it has an encircled triple bar in the center, which denotes a participatory intent (motive effect), and an aesthetic contour (sense affect). The term syzygy, an old favorite of mine (it’s a real word), refers to a union of opposites, either figuratively as in yin-yang, or literally as in an eclipse, where the Sun, Earth, and Moon fall into a line.

In the syzygy integral for vision, the vast sweep of possible interpretations from the meta to the micro level is interrupted by the inflection point of the moment as it is localized from eternity (the absolute). That which is seen had been both filtered from above and built up from below, but the visual encounter is defined even in opposition to that. The seeing is not the seen. All visual forms are opposed to an equally rich continuum of possible ways to appreciate those forms and images. The syzygy integral is not just a map of what there is ‘there and then’ but the entire domain of what each and every there and then still means ‘here and now’.

As the syzygy integral can be used to describe vision (vision = the participatory integration of graphic differences and imaginative likeness) or sound (sound = the participatory integration of phonic differences and psychoacoustic likeness), so too should it be able to describe the character of all phenomena. The underlying formula (Grand syzygy integral) uses the * asterisk and # pound to denote the limit of infinite figurative unity and the limit of literal, finite granularity respectively. In this case, the encircled triple bar refers to the Primordial Identity Pansensitivity, from which all other syzygies are diffracted.

Grand Syzygy Integral

The syzygy integral without the contour circle I am calling the information integral.

Information Integral

Unlike the syzygy integral, which defines every piece of information as an aesthetic encounter or re-acquaintance, the information integral refers only to the skeletal functionality of sense. Locally we may experience novel encounters or acquaintances, but some would argue that all experiences can only be re-acquaintances from the absolute perspective. I think that it may make the most sense to think of even that either-or condition as just another superimposed quality of the absolute. Awareness is infinitely novel, infinitely repeating, and paradoxically non-paradoxical. It is only the disorientation of locality which provides orientation.

The information integral strips away all of the mystical trappings (the supertext and subtext contours) and refers instead to the conventional concepts of information theory. Here, the triple bar is still a participant and intentional arbiter of interpretation between signal and noise, but without the aesthetic complication. This is the standard view of information processing as a functional exercise, only with the additional acknowledgement of a core superposition of telic intention and ontic unintention, absolute improbability and immaculate reliability.

“There is no information without representation”

My rebuttal to this from New Empiricism

Information is one of the most poorly defined terms in philosophy but it is a well defined concept in physical theory. How can it be that a clear idea in one branch of knowledge can be murky in another?

The physical meaning of information is succinctly summarised in the Wikibook on “Consciousness Studies”:

“The number of distinguishable states that a system can possess is the amount of information that can be encoded by the system.”

In most cases a “state of a system” boils down to arrangements of objects, either material objects laid out in the world or sequences of objects such as the succession of signals in a telephone line. So information is represented by physical things laid out in space and time. There is no information without this representation as an arrangement of physical objects.

Information can be processed by machines. As an example, computers use the “distinguishable states” of charge in electrical components to perform a host of useful tasks. They use the state of electrical charge in electronic components because charge can be manipulated rapidly and can be impressed on tiny components, however, computers could use the states of steel balls in boxes or carrots flowing on conveyor belts to achieve the same effect, albeit more slowly. There is nothing special about electronic computers beyond their speed, complexity and compactness. They are just machines that contain three dimensional arrangements of matter.

Philosophers use information in a much less well-defined fashion. Philosophical information is far more fuzzy and involves the quality of things such as hardness or blueness. So how does philosophical blueness differ from a physical information state?

Physical information about the world is a generalised state change that is related to particular events in the world and could be impressed on any substrate such as steel balls etc. This allows information to be transmitted from place to place. As an example, a heat sensor in England could trigger a switch that opens a trapdoor that drops a ball that is monitored on a camera that causes changes in charge patterns in a computer that are transmitted as sounds on a radio in the USA. If the sound on the radio makes a cat jump and knock over a vase then it is probably valid to look at the vase and say “it’s hot in England”. So physical information is related to its source by the causal chain of preceding steps. Notice that each of these steps is a physical event so there is no information without representation as a state in the real world.

In the philosophical idea of information “hot” or “cold” are particular states in the mind. Our mental states are not uniquely related to the state of the world outside our bodies. As an example, human heat sensors are fickle so a blindfolded person might contain the state called “cold” when their hand is placed in water at 60 degrees or ice water at zero degrees. Our “cold” is subjective and does not have a fixed reference point in the world. Our own information is a particular state that could be induced by a variety of events in the world whereas physical information can be a variety of states triggered by a particular event in the world.

To summarise, information in physics is a state change in any substrate. It can be related to the state change in another substrate if a causal chain exists between the two substrates. Information in the mind is the state of the particular substrate that forms your particular mind.

Your mind is a state of a particular substrate but a “state” is an arrangement of events. The crucial questions for the scientist are “what events?” and “how many independent directions can be used for arranging these events?”. We can tell from our experience that at least four independent axes (or “dimensions”) are involved.

Note

The fact that there is no information without representation of the information as a physical state means that peculiar non-physical claims such as Cartesian Dualism and Dennett’s “logical space” are not credible.

Daniel C Dennett. (1991). Consciousness Explained. Little, Brown & Co. USA. Available as a Penguin Book.

Dennett says: “So we do have a way of making sense of the idea of phenomenal space – as a logical space. This is a space into which or in which nothing is literally projected; its properties are simply constituted by the beliefs of the (heterophenomenological) subject.” Dennett is wrong because if the space contains information then it must be instantiated as a physical entity, if it is not instantiated then it does not exist and Dennett is simply denying the experience that we all share to avoid explaining it. Either we have simultaneous events or are just a single point, if we have simultaneous events the space of our experience exists.

“So information is represented by physical things laid out in space and time.”

Why would physical things ‘represent’ anything though? Without some sensory interpretation that groups such things together so that they appear “laid out in space and time”, who is to say that there could be any ‘informing’ going on?

“computers use the “distinguishable states” of charge in electrical components to perform a host of useful tasks.”

Useful to whom? The beads of an abacus can be manipulated into states which are distinguishable by the user, but there is no reason to assume that this informs the beads, or the physical material that the beads are made of. Computers do not compute to serve their own sense or motives, they are blind, low level reflectors of extrinsically introduced conditions.
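The purely quantitative sense of “distinguishable states” in the quoted definition can be made concrete for the abacus. What follows is only a minimal sketch: the abacus of three rods with ten bead positions each is a hypothetical of mine, the function names are my own, and the step from a count of states to “bits” uses the standard base-2 logarithm of information theory rather than anything stated in the quoted article.

```python
import math

def distinguishable_states(rods: int, positions_per_rod: int) -> int:
    """Count the configurations an outside observer could tell apart."""
    return positions_per_rod ** rods

def capacity_bits(states: int) -> float:
    """Information capacity in bits: log base 2 of the number of states."""
    return math.log2(states)

# Hypothetical abacus: 3 rods, each readable as one of 10 positions.
states = distinguishable_states(rods=3, positions_per_rod=10)
bits = capacity_bits(states)
print(states, round(bits, 2))
```

Note that the counting is done entirely from the side of whoever reads the abacus; nothing in the calculation requires that the beads themselves be informed of anything.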

“Your mind is a state of a particular substrate but a “state” is an arrangement of events. ”

States and arrangements are not physical because they require a mode of interpretation which is qualitative and aesthetic. Just as there can be no disembodied information, there can be no ‘states’ or ‘arrangements’ which are disentangled from the totality of sensible relations, and from specific participatory subsets therein. Information is a ghost – an impostor which reflects this totality in a narrow quantitative sense which is eternal but metaphysical, and a physical sense which is tangible and present but in which all aesthetic qualities are reduced to a one-dimensional schema of coordinate permutation. Neither information nor physics can relate to each other or represent anything by themselves. It is my view that we should flip the entire assumption of forms and functions as primitively real around, so that they are instead derived from a more fundamental capacity to appreciate sensory affects and participate in motivated effects. The primordial character of the universe can only be, in my view, metaphenomenal, with physics, information, and subjectivity as sensible partitions of the whole.

Consciousness and The Interface Theory of Perception, Donald Hoffman

A very good presentation with a lot of overlap with my views. He proposes similar ideas about a sensory-motive primitive and the nature of the world as experience rather than “objective”. What is not factored in is the relation between local and remote experiences and how that relation actually defines the appearance of that relation. Instead of seeing agents as isolated mechanisms, I think they should be seen as more like breaches in the fabric of insensitivity.

It is a little misleading to say (near the end) that a spoon is no more public than a headache. In my view what makes a spoon different from a headache is precisely that the metal is more public than the private experience of a headache. If we make the mistake of assuming an Absolutely public perspective*, then yes, the spoon is not in it, because the spoon is different things depending on how small, large, fast, or slow you are. For the same reason, however, nothing can be said to be in such a perspective. There is no experience of the world which does not originate through the relativity of experience itself. Of course the spoon is more public than a headache, in our experience. To think otherwise as a literal truth would be psychotic or solipsistic. In the Absolute sense, sure, the spoon is a sensory phenomenon and nothing else; it is not purely public (nothing is), but locally, it certainly is ‘more’ public.

Something that he mentioned in the presentation had to do with linear algebra and using a matrix of columns which add up to be one. To really jump off into a new level of understanding consciousness, I would think of the totality of experience as something like a matrix of columns which add up, not to 1, but to “=1”. Adding up to 1 is a good enough starting point, as it allows us to think of agents as holes which feel separate on one side and united on the other. Thinking of it as “=1” instead makes it into a portable unity that does something. Each hole recapitulates the totality as well as its own relation to that recapitulation: ‘just like’ unity. From there, the door is open to universal metaphor and local contrasts of degree and kind.
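For readers who have not met the linear algebra, here is a minimal sketch of a matrix whose columns each add up to 1. I am assuming that this is what the presentation had in mind (linear algebra calls such a matrix column-stochastic); the two-state numbers and the names `kernel`, `belief`, and `apply` are hypothetical, chosen only for illustration. It shows the plain “adds up to 1” starting point, not the “=1” idea.

```python
def is_column_stochastic(matrix, tol=1e-9):
    """True if every entry is non-negative and every column sums to 1."""
    n_rows = len(matrix)
    n_cols = len(matrix[0])
    for j in range(n_cols):
        column = [matrix[i][j] for i in range(n_rows)]
        if any(x < 0 for x in column):
            return False
        if abs(sum(column) - 1.0) > tol:
            return False
    return True

def apply(matrix, vector):
    """Matrix-vector product: one weighted sum per row of the matrix."""
    return [sum(row[j] * vector[j] for j in range(len(vector)))
            for row in matrix]

# Hypothetical two-state example; each column sums to 1.
kernel = [[0.9, 0.2],
          [0.1, 0.8]]
belief = [0.5, 0.5]          # a distribution that itself sums to 1
updated = apply(kernel, belief)
print(is_column_stochastic(kernel), updated, sum(updated))
```

Because every column sums to 1, applying the matrix to a distribution that sums to 1 gives back another distribution that sums to 1. The whole is conserved while its internal proportions shift.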

*Mathematics invites us to do this, because it inverts the naming function of language. Instead of describing a phenomenon in our experience through a common sense of language, math enumerates relationships between theories about experience. The difference is that language can either project itself publicly or integrate public-facing experiences privately, but math is a language which can only face itself. Through math, reflections of experience are fragmented and re-assembled into an ideal rationality – the ideal rationality which reflects the very ideal of rationality that it embodies.
