Archive

Jesse Prinz - On the (Dis)unity of Consciousness

Jesse Prinz gives a well-developed perspective on neuronal synchronization as the correlate to attention and explores the question of binding. As always, neuroscience offers important details and clues to guide our understanding. However, knowledge alone may not be the pure and unbiased resource that we presume it to be. The assumptions that we make about a world in which we have already defined consciousness to be the behavior of neurons are not neutral. They direct and in some cases self-validate the approach as much as any cognitive bias could. For those who watch the video, here are my comments:

To begin with, aren’t unity and disunity qualitative discernments within consciousness? To me, the binding problem is most likely generated from the assumption that consciousness arises a posteriori of distinctions like part-whole, when in fact, awareness may be identical to the capacity for any distinction at all, and is therefore outside of any notion of ‘it-ness’, ‘unity’, or multiplicity. To me, it is clear that consciousness is unified, not-unified, both unified and not unified, and neither unified nor not unified. If we call consciousness ‘attention’, what should we call our awareness of the periphery of our awareness – of memories and intuitions?

The assumption that needs to be questioned is that sub-conscious awareness is different from consciousness in some material way. Our awareness of our awareness is of course limited, but that doesn’t mean that low level ‘processing’ is not also private experience in its own right.

Pointing to synchronization of neuronal activity as causing attention just pushes the hard problem down to a microphenomenal level. In order to synchronize with each other, neurons themselves would ostensibly have to be aware and pay attention to each other in some way.

Synchrony may not be the cause, but the symptom. Experience is stepped down from the top and up from the bottom in the same way that I am using codes of letters to make words which together communicate my top-down ideas. Neurons are the brain’s ‘alphabet’, they are not the author of consciousness, they are not sufficient for consciousness, but they are necessary for a human quality of consciousness. (In my opinion).

Later on, when he covers the idea of Primitive Unity, he dismisses holistic awareness on the basis that separate areas of the brain contribute separate information, but that is based on an expectation that the brain is the cause of awareness rather than the event horizon of privacy as it becomes public (and vice versa) on many levels and scales. The whole idea of ‘building whole experiences’ from atomistic parts assumes holism as a possibility, even as it seeks to deny that possibility. How can a whole experience be built without an expectation of wholes?

Attention is not what consciousness is, it is what consciousness does. In order for attention to exist, there must first be the capacity to receive sensation and to appreciate that sensation qualitatively. Only then, when we have something to pay attention to, can we find our capacity to participate actively in what we perceive.

As far as the refrigerator light idea goes, I think that is a good line of thought to explore with consciousness as I think it should lead to a questioning not only of the constancy of the light, but of the darkness as well. We cannot assume that either the naive state of light on or the sophisticated state of light on with door open/off when closed is more real than the other. Instead, each view only reflects the perspective which is getting the attention. When we look at consciousness from the point of view of a brain, we can only find explanations which break consciousness apart into subconscious and impersonal operations. It is a confirmation bias of a different sort which is never considered.

Diogenes’ Revenge: Cynicism, Semiotics, and the Evaporating Standard

Diogenes was called Kynos — Greek for dog — for his lifestyle and contrariness. It was from this word for dog that we get the word Cynic.

Diogenes is also said to have worked minting coins with his father until he was 60, but was then exiled for debasing the coinage. – source

In comparing the semiotics of C.S. Peirce and Jean Baudrillard, two related themes emerge concerning the nature of signs. Peirce famously used trichotomy arrangements to describe the relations, while Baudrillard talked about four stages of simulation, each more removed from authenticity. In Peirce’s formulation, Index, Icon, and Symbol work as separate strategies for encoding meaning. An index is a direct consequence or indication of some reality. An icon is a likeness of some reality. A symbol is a code which has its meaning assigned intentionally.

Baudrillard saw the sign as a succession of adulterations: first, an original reality is copied; second, the copy masks the original in some way; third, the copy is denatured and the debasement itself is masked; and fourth, the sign becomes a pure simulacrum, a copy with no original, composed only of signs reflecting each other.

Whether we use three categories or four stages, or some other number of partitions along a continuum, an overall pattern can be arranged which suggests a logarithmic evaporation, an evolution from the authentic and local to the generic and universal. Korzybski’s map and territory distinction fits in here too, as human efforts to automate nature result in maps, maps of maps, and maps of all possible mapping.

The history of human timekeeping reveals the earthy roots of time as a social construct based on physical norms. Timekeeping was, from the beginning, linked with government and control of resources.

According to Callisthenes, the Persians were using water clocks in 328 BC to ensure a just and exact distribution of water from qanats to their shareholders for agricultural irrigation. The use of water clocks in Iran, especially in Zeebad, dates back to 500 BC. Later they were also used to determine the exact holy days of pre-Islamic religions, such as the Nowruz, Chelah, or Yalda – the shortest, longest, and equal-length days and nights of the years. The water clocks used in Iran were one of the most practical ancient tools for timing the yearly calendar. source

Anything which burns or flows at a steady rate can be used as a clock. Oil lamps, candles, and incense have been used as clocks, as well as the more familiar sand hourglass, shadow clocks, and clepsydrae (water clocks). During the day, a simple stick in the ground can provide an index of the sun’s position. These kinds of clocks, in which the nature of physics is accessed directly, would correspond to Baudrillard’s first level of simulation – they are faithful copies of the sun’s movement, or of the depletion of some material condition.

Staying within this same agricultural era of civilization, we can understand the birth of currency in the same way. Trading of everyday commodities could be indexed with concentrated physical commodities like livestock, and also other objects like shells which had intrinsic value for being attractive and uncommon, as well as secondary value for being durable and portable objects to trade. In the same way that coins came to replace shells, mechanical clocks and watches came to replace physical index clocks. The notions of time and money differ in that time refers to a commodity beyond the scope of human control while money refers specifically to human control, but both serve as regulatory standards for civilization, as well as equivalents for each other in many instances (‘man hours’, productivity).

In the next phase of simulation, coins combined the intrinsic and secondary values of things like shells with a mint mark to ensure transactional viability on the token. The icon of money, as Diogenes discovered, can be extended much further than the index, as anything that bears the official seal will be taken as money, regardless of the actual metal content of the coin. The idea of bank notes was as a promise to pay the bearer a sum of coins. In the world of time measurement, the production of clocks, clocktowers, and watches spread the clock face icon around the world, each one synchronized to a local, and eventually a coordinated universal time. Industrial workers were divided into shifts, with each crew punching a timeclock to verify their hours at work and breaks. While the nature of time makes counterfeiting a different kind of prospect, the practice of having others clock out for you or having a cab driver take the long way around to run the meter longer are ways that the iconic nature of the mechanical clock can be exploited. Being one step removed from the physical reality, iconic technologies provide an early opportunity for ‘hacking’.

| physical territory > index | local map > icon | symbol > universal map |
| --- | --- | --- |
| water clock, sand clock | sundial/clock face | digital timecode |
| trade > shells | coins > check > paper | plastic > digital > virtual |
| production > organization | bonds > stock | futures > derivatives |
| real estate | mortgage, rent | speculation > derivatives |
| genuine aesthetic | imitation synthetic | artificial emulation |
| non-verbal communication | language | data |

The last three decades have been marked by the rise of the digital economy. Paper money and coins have largely been replaced by plastic cards connected to electronic accounts, which have in turn entered the final stage of simulacra – a pure digital encoding. The promissory note iconography and the physical indexicality of wealth have been stripped away, leaving behind a residue of immediate abstraction. The transaction is not a promise, it is instantaneous. It is not wealth, it is only a license to obtain wealth from the coordinated universal system.

Time has entered its symbolic phase as well. The first exposure to computers that consumers had in the 1970s was in the form of digital watches and calculators. Time and money. First LED, and then LCD displays became available, both in expensive and inexpensive versions. For a whole generation of kids, their first electronic devices were digital calculators and watches. There had been digital clocks before, based on turning wheels or flipping tiles, but the difference here was that the electronic numbers did not look like regular numbers. Nobody had ever seen numbers rendered as these kinds of generic combinatorial figures before. Every kid quickly learned how to spell out words by turning the numbers upside down (you couldn’t make much... 710 77345 spells ShELL OIL)…sort of like emoticons.

Beneath the surface, however, something had changed. The digital readouts were not even real numbers; they were icons of numbers, and icons which exposed the mechanics of their iconography. Each number was only a combinatorial pattern of binary segments – a specific fraction of the full 8.8.8.8.8.8.8.8. pattern. You could even see the faint outlines of the complete pattern of 8’s if you looked closely, both in LED and LCD. The semiotic process had moved one step closer to the technological and away from the consumer. Making sense of these patterns as numbers was now part of your job, and the language of Arabic numerals became data to be processed.
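To make the "combinatorial pattern of binary segments" concrete, here is a minimal sketch in Python. It assumes the conventional seven-segment labeling (a through g, which is my labeling for illustration, not anything printed on those watches) and ignores the decimal point. The names `DIGIT_SEGMENTS`, `lit_fraction`, and `render` are hypothetical helpers, not part of any library.

```python
# Conventional seven-segment labels (an assumption for illustration):
#   a = top, b = upper right, c = lower right, d = bottom,
#   e = lower left, f = upper left, g = middle
SEGMENTS = "abcdefg"

# Each digit is just a subset of the full "8" pattern.
DIGIT_SEGMENTS = {
    0: "abcdef",
    1: "bc",
    2: "abdeg",
    3: "abcdg",
    4: "bcfg",
    5: "acdfg",
    6: "acdefg",
    7: "abc",
    8: "abcdefg",
    9: "abcdfg",
}


def lit_fraction(digit):
    """Fraction of the full 8 pattern that is switched on for this digit."""
    return len(DIGIT_SEGMENTS[digit]) / len(SEGMENTS)


def render(digit):
    """Draw the digit as three rows of text using only its lit segments."""
    on = set(DIGIT_SEGMENTS[digit])

    def cell(segment, glyph):
        # A segment is either drawn or left blank: one binary choice each.
        return glyph if segment in on else " "

    top = " " + cell("a", "_") + " "
    mid = cell("f", "|") + cell("g", "_") + cell("b", "|")
    bot = cell("e", "|") + cell("d", "_") + cell("c", "|")
    return "\n".join([top, mid, bot])


if __name__ == "__main__":
    for d in range(10):
        print(d, "lights", len(DIGIT_SEGMENTS[d]), "of 7 segments")
        print(render(d))
```

Seen this way, the numeral is no longer a drawn shape but a selection from a fixed grid, which is the shift from icon toward data that I am describing.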

Since that time, the digital revolution has shaped the making and breaking of world markets. Each financial bubble spread out, Diogenes style, through the banking and finance industry behind a tide of abstraction. Ultra-fast trading which leverages meaningless shifts in transaction patterns has become the new standard, replacing traditional market analysis. From leveraged buyouts in the 1980s to junk bonds, tech IPOs, Credit Default Swaps, and the rest, the world economy is no longer an index or icon of wealth, it is a symbol which refers only to itself.

The advent of 3D printing marks the opposite trend. Where conventional computer printing allowed consumers to generate their own 2D icons from machines running on symbols, the new wave of micro-fabrication technology extends that beyond the icon and the index level. Parts, devices, food, even living tissue can be extruded from symbol directly into material reality. Perhaps this is a fifth level of simulation – the copy with no original which replaces the need for the original…a trophy in Diogenes’ honor.

Light, Vision, and Optics

In the above diagram, the nature of light is examined from a semiotic perspective. As with Peircean sign trichotomies, and semiotics in general, the theme of interpretation is deconstructed as it pertains to meanings, interpreters, and objects. In this case the object or sign is “Optics”. This would be the classical, macroscopic appearance of light as beams or rays which can be focused and projected. Color wheels and primary colors are among the tools we use to orient our own human experience of vision with the universal nature of material illumination.

On the other side of the bottom of the triangle is “Vision”. This is the component which gives vision a visual quality. The arrows leading to and from vision denote the incoming receptivity from optics and the outgoing engagement toward “Light”. When we see, our awareness is informed from the bottom up and the top down. Seeing rides on top of the low level interactions of our cells, while looking is our way of projecting our will as attention to the visual field.

While optics dictate measurable relationships among physical properties of light on the macroscopic scale, ‘light’ is the hypothetical third partner in the sensory triad. Light is both the microphysical functions of quantum electrodynamics and the absolute frame of perceptual relativity from which various perceptual inertial frames emerge. The span between light and optics is marked by the polar graph and label “Image” to describe the role of resemblance and relativity. Image is a fusion of the cosmological truth of all that can be seen and illuminated (light), with the localization to a particular inertial frame (optics-in-space), and recapitulation by a particular interpreter – who is a time-feeler of private experience.

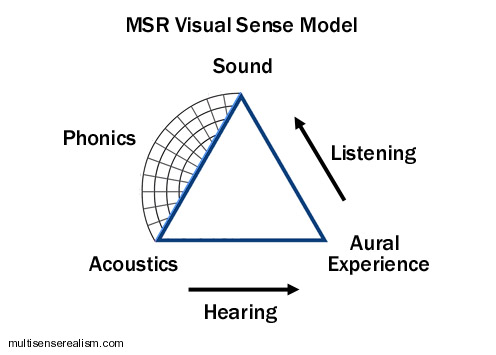

This triangle schema is not limited to light. Any sense can be used with varying degrees of success:

The overall picture can be generalized as well:

Note that the afferent and efferent sides of the triangle have a push-pull orientation, while the quanta side is an expanding graph. This is due to the difference between participation within spacetime, which is proprietary feeling, and the measured positions between participants on multiple scales or frames of participation. Sense is the totality of experience from which subjective extractions are derived. The physical mode describes the relation between each subjective experience and between other frames of subjective experience as representational tokens: bodies or forms. It’s all a kind of trail of breadcrumbs which lead back to the source, which is originality itself.

Pink Floyd, Money

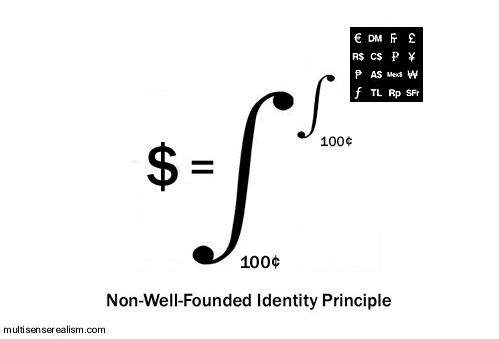

Delving deeper into the Non-Well-Founded Identity Principle that I proposed earlier, here is an illustration which applies the principle to money – US dollars specifically.

What I intend to show is that a dollar is defined by the integral which spans a continuum from the most literal (a dollar “simply is” one hundred cents) to the most figurative. The most figurative end of the continuum is another continuum – an orthogonal continuum which contains the entire top level continuum from what is meant literally as one hundred cents to every other meaning, association, context and contingency, from every perspective, throughout eternity.

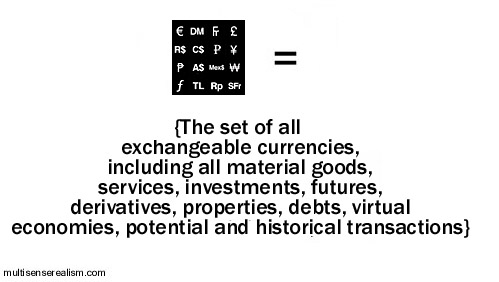

This stack of figurative extensions can yield many loose morphological analogs – electron shell type morphology, stepped pyramid, color wheel mandalas, etc. The closest figures to associate with the dollar would be other kinds of currencies: Euros, Deutschmarks, Yen, etc. These are all of the things which are like dollars, but not dollars, and which dollars can actually buy. The associations radiate out from there to include references to any transaction with dollars. Ultimately this would stretch to encompass any real or imagined transaction which has ever occurred, every transaction which could occur, or could not occur…until the set includes the entire contents of eternity (aka, the Absolute Inertial Frame, or the Totality*).

Once you recover from that (seriously, come back later…I have already had one person say they got a headache thinking about this), then have a look at the diagram below. Same thing, only from the Absolute perspective. Borrowing from the Dark Side of The Moon Prism, I attempt to show how the cosmos is the continuum of self-individuating, nested, Ouroboran Monism. Rather than the Blue Sky icon representing the Totality, it represents any individual thing as a generic presence.

In the top diagram, I show how the individuality of a dollar implies the figuratively nested Totality, and in this diagram, it is the Totality which is considered the individual identity which is spread across the continuum of its own absolutely individuated cardinality or literal granularity.

Another point to make about the continuum is that the bottom limits of the integrals represent that which is common to each and every unity. The cents which ‘make up’ a dollar represent the quantitative definition which describes any particular dollar, and therefore all dollars. The top ends of the integrals represent the opposite definitions – that which is increasingly unique to a particular event in time or associated with a unique group of less particular experiences (qualia, concepts).

You’re welcome 😉

Non-Well-Founded Identity Principle

In an effort to clarify this concept, I wanted to add an update:

Edit 11/02/2018

The point of the Non-Well-Founded Identity principle is to characterize identity in a way which I propose is more accurate and makes fewer presumptions. Rather than following our scientific impulses to define all things in single, final ways, we can step back and instead integrate the full spectrum of epistemological and ontological nuances into our descriptions of math, logic, and science. What I propose here with the Non-Well-Founded Identity Principle is a redefinition of the identity principle to one which factors in the reality of perception, which I propose is not only a bottom up construction, but also a diffraction from the totality down. Unlike artificial intelligence, natural intelligence is a kind of prism which opens up the ‘light’ of consciousness to its deeper nature, using both analytical steps and intuitive synthesis.

Rather than saying A=A (that everything is itself), I suggest that every phenomenon is:

- A spectrum of presentations/qualities/properties which can be said to be bounded on two ends.

- On one end, all things are bounded by a conserved identity. They are simply what they appear to be in whatever perspective and context they appear.

- On the other end, all things are a spectrum of resemblances/similarities/associations/dissimilarities that can be navigated poetically and reveal profound dimensions that echo the totality of experience.

In other words, rather than A=A, I propose instead that A equals a spectrum that runs from self equivalence (A=A) to a second spectrum of similarities that ultimately include diametric dissimilarity, i.e. running from A=A to A~!=A.

A= {the spectrum of identity running from A to (a nested spectrum of identity running from almost totally A to almost totally not A)}

This idea is extended further below so that “A” as a unit of identity is replaced by sense itself, so that any sense experience is a spectrum that runs from experience of a purely particular experience to the nested spectrum that runs from all particular experiences to all experiences to the particular experiences that define the sense spectrum itself.

End of update.

Beginning of previous article:

Here’s a crazy little number that I like to call the Non-Well-Founded Identity Principle. It woke my boiling brain up a few times last night, so I present it now in its raw state of lunacy.

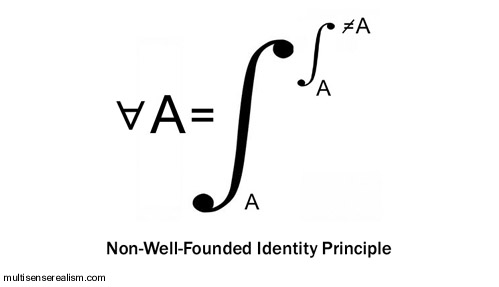

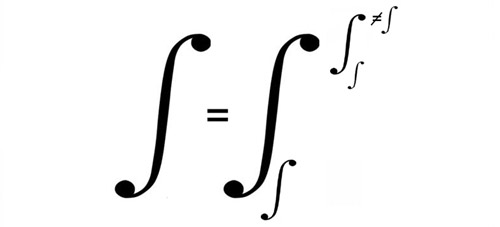

The idea here is “For All A, A equals the integral between A and (the integral between A and not A)”.
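For readers who cannot see the images, one possible way to typeset the statement is sketched below. This is only my reading of the sentence above: it assumes the integral sign is used informally to mean "the span between" a lower and an upper limit, with no integrand, and it writes "not A" as ≠A.

```latex
\[
  \forall A:\quad A \;=\; \int_{A}^{\;\int_{A}^{\neq A}}
\]
```

The lower limit is the literal identity (A as simply A), and the upper limit is itself a nested span running from A to everything that is not A.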

This represents a refinement of trivial identity, A=A, to reflect the grounding of all propositions in the Absolute inertial frame of pansensitivity. The nested integral specifies that all integrations are themselves defined as that which is not disintegrated. Any object, subject, or sensory presentation or representation (A) is itself, and it is also the range of all possible relations, literal, figurative, and otherwise, between itself and all that is not itself (≠A).

This comes out of the idea that sense is the Explanatory Gap, i.e. the gap between private experience and public bodies is a non-well-founded set (non-well-founded sets contain themselves as members) in which primordial pansensitivity* defines its nested child sense experiences in terms which are unique, generic, and everything in between, depending on how the local perceptual inertia frames it.

*pansensitivity is plain old feeling, sensing, being and doing, but extended and universalized beyond Homo sapiens, as well as physics and arithmetic truth. Ontology itself – being; the is-ness and it-ness of all phenomena can be reduced further through the Non-Well-Founded Identity Principle, under which ontology becomes the nested gap between phenomenology and the sense of its own absence. This is a very tricky shell game, but it is not intended as a trick or a game. Said another way, ‘privacy is the difference between privacy and the difference between private and public experience.’

Applied to philosophy of mind, we would get: Naive realism equals the difference between naive realism and (the difference between naive realism and reductionism). Another one would be Sense equals the sense of the difference between the sense and (the difference between sense and logic). It could be said that X=/(=/≠) X, so that any number is a straight isomorphism with itself, but it is also a superposition of any potential combinations with or relativity upon any and all X that it is not.

The reductio ad absurdum can be seen in this second expression:

in which integration itself is the integral between integration and disintegration. Every set or process is defined by its own self-same initiation and termination.

Is this all insipid tautology? Is it another way of catching a glimpse of Heisenberg uncertainty or Gödel incompleteness through a fun house mirror? I don’t know much about calculus, so there may be a more conventional way of expressing these kinds of relations, but in the meantime, to me, it’s an absolutely interesting way of modeling the absolute: A universal capacity to simultaneously universalize and de-universalize (proprietize) the universal experience.

Why PIP (and MSR) Solves the Hard Problem of Consciousness

The Hard Problem of consciousness asks why there is a gap between our explanation of matter, or biology, or neurology, and our experience in the first place. What is there which even suggests to us that there should be a gap, and why should there be such a thing as experience to stand apart from the functions of that which we can explain?

Materialism only miniaturizes the gap and relies on a machina ex deus (intentionally reversed deus ex machina) of ‘complexity’ to save the day. An interesting question would be, why does dualism seem to be easier to overlook when we are imagining the body of a neuron, or a collection of molecules? I submit that it is because miniaturization and complexity challenge the limitations of our cognitive ability that we find it easy to conflate that sort of quantitative incomprehensibility with the other incomprehensibility being considered, namely aesthetic* awareness. What consciousness does with phenomena which pertain to a distantly scaled perceptual frame is to under-signify them. They become less important, less real, less worthy of attention.

Idealism only fictionalizes the gap. I argue that idealism makes more sense on its face than materialism for addressing the Hard Problem, since material would have no plausible excuse for becoming aware or being entitled to access an unacknowledged a priori possibility of awareness. Idealism however, fails at commanding the respect of a sophisticated perspective since it relies on naive denial of objectivity. Why so many molecules? Why so many terrible and tragic experiences? Why so much enduring of suffering and injustice? The thought of an afterlife is too seductive of a way to wish this all away. The concept of maya, that the world is a veil of illusion is too facile to satisfy our scientific curiosity.

Dualism multiplies the gap. Acknowledging the gap is a good first step, but without a bridge, the gap is diagonalized and stuck in infinite regress. In order for experience to connect in some way with physics, some kind of homunculus is invoked, some third force or function interceding on behalf of the two incommensurable substances. The third force requires a fourth and fifth force on either side, and so forth, as in a Zeno paradox. Each homunculus has its own Explanatory Gap.

Dual Aspect Monism retreats from the gap. The concept of material and experience being two aspects of a continuous whole is the best one so far – getting very close. The only problem is that it does not explain what this monism is, or where the aspects come from. It rightfully honors the importance of opposites and duality, but it does not question what they actually are. Laws? Information?

Panpsychism toys with the gap. Depending on what kind of panpsychism is employed, it can miniaturize, multiply, or retreat from the gap. At least it is committing to closing the gap in a way which does not take human exceptionalism for granted, but it still does not attempt to integrate qualia itself with quanta in a detailed way. Tononi’s IIT might be an exception in that it is detailed, but only from the quantitative end. The hard problem, which involves justifying the reason for integrated information being associated with a private ‘experience’ is still only picked at from a distance.

Primordial Identity Pansensitivity, my candidate for nomination, uses a different approach than the above. PIP solves the hard problem by putting the entire universe inside the gap. Consciousness is the Explanatory Gap. Naturally, it follows serendipitously that consciousness is also itself explanatory. The role of consciousness is to make plain – to bring into aesthetic evidence that which can be made evident. How is that different from what physics does? What does the universe do other than generate aesthetic textures and narrative fragments? It is not awareness which must fit into our physics or our science, our religion or philosophy, it is the totality of eternity which must gain meaning and evidence through sensory presentation.

*Is awareness ‘aesthetic’? That we call a substance which causes the loss of consciousness a general anesthetic might be a serendipitous clue. If so, the term local anesthetic as an agent which deadens sensation is another hint about our intuitive correlation between discrete sensations and overall capacity to be ‘awake’. Between sensations (I would call sub-private) and personal awareness (privacy) would be a spectrum of nested channels of awareness.

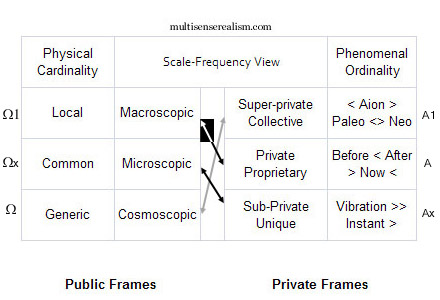

Charting It All

In an effort to provide a more straightforward view of pansensitivity and eigenmorphism, the chart above organizes all phenomena in the cosmos by scale of publicly extended body length and frequency range of privately experienced times. Going left to right (Metaphorically, Occident to Orient), the first and second column denote the public, physical scopes (perceptual inertial frames) according to cardinality and size. The bottom left frames (Ω) correspond to the outermost types of physical phenomena, i.e. absolutely gigantic or absolutely infinitesimal. This reflects the aesthetic intuition by which the atom comes out having more in common morphologically and dynamically with a solar system than a tree or coral reef, despite being at opposite ends of the scale of our awareness. The Ω range of frames is the envelope of physicality, where physical and mathematical ‘laws’ meet the most universally public perceptions.

Our awareness is extended technologically, which broadens our view of the public universe. However, since the awareness being extended is primarily visual and somatic (‘tangible’-kinesthetic rather than tactile), the telescoping of our sensory awareness is also narrowing our depth of field within the private (phenomenological) side of physics. My conjecture is that because of the nature of perceptual relativity, the more we focus on the outer contexts, not only do we not see the private experience in the universe on these distant scales, but also our entire worldview will, by default, adjust to recontextualize the local experiences of the self. The fallacy of the instrument (if you have only a hammer, everything looks like a nail) might arise through this kind of empathetic feedback loop. This is likely to extend into so-called ‘supernatural’ phenomena, which explains the increases in magnitude, frequency, and connectedness of coincidence experienced by subjects through altered states of consciousness. The higher up on the right hand column, the more large patterns of synchronicity, with deeper resonance (A1) are available for direct personal experience (A).

By contrast, the lower down on the Oriental, right hand scale we go, the more the needle of synchronicity tips toward mere statistical coincidence and the more top-down intuition, imagination, and eidetic narrative are collapsed into the stepped logic of bottom-up causality. The numbering schema is confusing, but intentional. The use of A in the middle row on the right side denotes that sense is always anchored centripetally. Perceptual capacity radiates as a range, often a literal circular, conic, or spherical perimeter, of awareness, but also sensitivity radiates figuratively as nested channels or layered modalities of sense.

The use of A1 and Ax above and below A respectively, implies the hierarchical pull from the superlative top, down to the personal, and the plummet from the personal middle down to the bottom. Ax would be the opposite of A1; insignificant, low status, shame and indignity. Looking at the Left side of the chart, the numbering scheme is even more confusing, but it is to emphasize the multiple levels of opposition that characterize the public and private aspects of physics-sense. The A1 range is the most universal private experience, (the ultimate being experience itself), which is meta-phorical. Experiences are associated to each other through metaphor, with the most tightly isomorphic metaphor being imitation or repetition. The higher up on the A column we go, the more latitude there is in recognizing common associations. Pareidolia and Apophenia are examples of having the aperture disproportionately dilated to the super-private, which becomes unsupportable within human society (delusions of grandeur, ideas of reference, mania, etc.)

Back to the Omega column on the left, the Ω1 is a different kind of magnificence than the private rapture of the Absolute. The public side is not centripetally oriented, but linear and circular. There is no radiant center, only jumps and slides. The Alpha/Aleph side in the East fills the gaps, it infers and elides, it puts two and two together. The Ω1 is mutation and fluke. Unintentional singularity. Its uniqueness is simply an inevitable accumulation of imperfectly repeating behaviors, so that the wonder of biology through evolution can be examined correctly in Darwinian terms. These are terms of the exteriors, however. Regardless of how complex and convoluted the patterns, they are patterns of insensitivity rather than awareness, automaticity rather than authenticity. This is individuality from the outside in – stochastic, social, generational rather than individual.

The cells of the bottom row have as much in common as the cells of the top row are polar opposites, but they are also skewed (this is what the arrows in the center are supposed to mean). This is what I call eigenmorphism. A to Ω1 has a half-black, half-white arrow, showing the relation between the human mind and its hominid body. This is a maximal polarization, or so it seems to us. The black arrow runs from the Microscopic Ωx to the Sub-private Ax, which have the x in common, connoting the strong relation between mechanical appearances on the molecular level and the recursiveness of awareness in its least signifying, quantitative form. This is contrary to the idea that vibrations or energy are what we are; rather, vibrating is what the various parts of our sub-private experience do – jiggling or wagging from position to disposition, from incident to co-incident.

The grey arrow from A1 to Ω points to what I call the profound edge of the continuum. This would be the level at which the Totality is an unbroken, Ouroboran monad. This is what happens ‘behind our backs’, hypnotically through evanescence. It is significance reclaimed and re-membered after having been diffracted into the entropy of spacetime. By contrast, the black and white split arrow corresponds to the ‘pedestrian fold’ – the level of the monad which appears most polarized and least evanescent – the terrestrial aesthetic of ‘ordinary’ experience.

In the top chart I have limited cardinality to the public side and ordinality to the private side to show the relation between morphic scale and phoric frequency. Privacy runs first to last (ordinal), publicity runs from astronomically numerous to few (cardinal).

Compare with Frame Set View:

Why Computers Can’t Lie and Don’t Know Your Name

What do the Hangman Paradox, Epimenides Paradox, and the Chinese Room Argument have in common?

The underlying Symbol Grounding Problem common to all three is that from a purely quantitative perspective, a logical truth can only satisfy some explicitly defined condition. The expectation of truth itself being implicitly true, (i.e. that it is possible to doubt what is given) is not a condition of truth, it is a boundary condition beyond truth*. All computer malfunctions, we presume, are due to problems with the physical substrate, or the programmer’s code, and not incompetence or malice. The computer, its program, or binary logic in general cannot be blamed for trying to mislead anyone. Computation, therefore, has no truth quality, no expectation of validity or discernment between technical accuracy and the accuracy of its technique. The whole of logic is contained within the assumption that logic is valid automatically. It is an inverted mirror image of naive realism. Where a person can be childish in their truth evaluation, overextending their private world into the public domain, a computer is robotic in its truth evaluation, undersignifying privacy until it is altogether absent.

Because computers can only report a local fact (the position of a switch or token), they cannot lie intentionally. Lying involves extending a local fiction to be taken as a remote fact. When we lie, we know what a computer cannot guess – that information may not be ‘real’.

When we say that a computer makes an error, it is only because of a malfunction on the physical or programmatic level, therefore it is not false, but a true representation of the problem in the system which we receive as an error. It is only incorrect in some sense that is not local to the machine, but rather local to the user, who makes the mistake of believing that the output of the program is supposed to be grounded in their expectations for its function. It is the user who is mistaken.

It is for this same reason that computers cannot intend to tell the truth either. Telling the truth depends on an understanding of the possibility of fiction and the power to intentionally choose the extent to which the truth is revealed. The symbolic communication expressed is grounded strongly in the privacy of the subject as well as the public context, and only weakly grounded in the logic represented by the symbolic abstraction. With a computer, the hierarchy is inverted. A Turing Machine is independent of private intention and public physics, so it is grounded absolutely in its own simulacra. In Searle’s (much despised) Chinese Room Argument – the conceit of the decomposed translator exposes how the output of a program is only known to the program in its own narrow sensibility. The result of the mechanism is simply a true report of a local process of the machine which has no implicit connection to any presented truths beyond the machine…except for one: Arithmetic truth.

Arithmetic truth is not local to the machine, but it is local to all machines and all experiences of correct logical thought. This is an interesting symmetry, as the logic of mechanism is both absolutely local and instantaneous and absolutely universal and eternal, but nothing in between. Every computed result is unique to the particular instantiation of the machine or program, and universal as a Turing emulable template. What digital analogs are not is true or real in any sense which relates expressly to real, experienced events in spacetime. This is the insight expressed in Korzybski’s famous maxim ‘The map is not the territory.’ and in the Use-Mention distinction, where using a word intentionally is understood to be distinct from merely mentioning the word as an object to be discussed. For a computer, there is no map-territory distinction. It’s all one invisible, intangible mapitory of disconnected digital events.

By contrast, a person has many ways to voluntarily discern territories and maps. They can be grouped together, such as when the acoustic territory of sound is mapped to the emotional-lyric territory of music, or the optical territory of light is mapped as the visual territory of color and image. They can be flipped so that the physics is mapped to the phenomenal as well, which is how we control the voluntary muscles of our body. For us, authenticity is important. We would rather win the lottery than just have a dream that we won the lottery. A computer does not know the difference. The dream and the reality are identical information.

Realism, then, is characterized by its opposition to the quantitative. Instead of being pegged to the polar austerity which is autonomous local + explicitly universal, consciousness ripens into the tropical fecundity of middle range. Physically real experience is in direct contrast to digital abstraction. It is semi-unique, semi-private, semi-spatiotemporal, semi-local, semi-specific, semi-universal. Arithmetic truth lacks any non-functional qualities, so that using arithmetic to falsify functionalism is inherently tautological. It is like asking an armless man to raise his hand if he thinks he has no arms.

Here’s some background stuff that relates:

The Hangman Paradox has been described as follows:

A judge tells a condemned prisoner that he will be hanged at noon on one weekday in the following week but that the execution will be a surprise to the prisoner. He will not know the day of the hanging until the executioner knocks on his cell door at noon that day.

Having reflected on his sentence, the prisoner draws the conclusion that he will escape from the hanging. His reasoning is in several parts. He begins by concluding that the “surprise hanging” can’t be on Friday, as if he hasn’t been hanged by Thursday, there is only one day left – and so it won’t be a surprise if he’s hanged on Friday. Since the judge’s sentence stipulated that the hanging would be a surprise to him, he concludes it cannot occur on Friday.

He then reasons that the surprise hanging cannot be on Thursday either, because Friday has already been eliminated and if he hasn’t been hanged by Wednesday night, the hanging must occur on Thursday, making a Thursday hanging not a surprise either. By similar reasoning he concludes that the hanging can also not occur on Wednesday, Tuesday or Monday. Joyfully he retires to his cell confident that the hanging will not occur at all.

The next week, the executioner knocks on the prisoner’s door at noon on Wednesday – which, despite all the above, was an utter surprise to him. Everything the judge said came true.
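The prisoner's chain of reasoning in the story above is mechanical enough to write down as a loop. What follows is only a rough sketch of that backward elimination, not a resolution of the paradox; the names `DAYS` and `prisoner_elimination` are my own inventions for illustration.

```python
# Hypothetical sketch: the prisoner's backward elimination of hanging days.
DAYS = ["Monday", "Tuesday", "Wednesday", "Thursday", "Friday"]

def prisoner_elimination(days):
    """Strike days out the way the prisoner does, last day first."""
    remaining = list(days)
    eliminated = []
    while remaining:
        # If all earlier days had passed, the last remaining day would be
        # fully expected, so the prisoner rules it out as a 'surprise' day.
        eliminated.append(remaining.pop())
    return eliminated, remaining

eliminated, remaining = prisoner_elimination(DAYS)
print(eliminated)  # the order in which days are struck out
print(remaining)   # the days the prisoner still expects a hanging on

# The prisoner now expects no hanging at all, so any actual day surprises him.
wednesday_is_surprise = "Wednesday" not in remaining
```

The loop never consults anything outside its own list. It ends with nothing left to expect, which is exactly the condition under which any day whatsoever becomes a surprise.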

1) The conclusion “I won’t be surprised to be hanged Friday if I am not hanged by Thursday” creates another proposition to be surprised about. By leaving the condition of ‘surprise’ open ended, it could include being surprised that the judge lied, or any number of other soft contingencies that could render an ‘unexpected’ outcome. The condition of expectation isn’t an objective phenomenon, it is a subjective inference. Objectively, there is no surprise since objects don’t anticipate anything.

2) If we want to close in tightly on the quantitative logic of whether deducibility can be deduced – given five coin flips and a certainty that at least one will be heads, each successive tails coin flip increases the odds that one of the remaining flips will be heads. The fifth coin will either be 100% likely to be heads, or will prove that the certainty assumed was 100% wrong.
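The coin-flip version in point 2 can be checked by brute force. This is a minimal sketch under assumptions of my own: the coins are fair and independent, and the 'certainty' is read as 'at least one of the five flips is heads'. The function name `p_next_heads` is made up for the example.

```python
from fractions import Fraction
from itertools import product

def p_next_heads(remaining_flips):
    """Chance that the next flip is heads, given that every earlier flip
    was tails and at least one of the remaining flips must be heads."""
    # Enumerate every way the remaining flips can land, keeping only
    # the sequences that honor the guarantee of at least one heads.
    outcomes = [seq for seq in product("HT", repeat=remaining_flips) if "H" in seq]
    heads_first = [seq for seq in outcomes if seq[0] == "H"]
    return Fraction(len(heads_first), len(outcomes))

# Odds for the next flip after 0, 1, 2, 3 and 4 tails in a row.
odds = [p_next_heads(n) for n in range(5, 0, -1)]
for tails_so_far, p in enumerate(odds):
    print(tails_so_far, p)
```

Under those assumptions the odds climb from 16/31 through 8/15, 4/7 and 2/3 to exactly 1 on the fifth flip. That last flip is the case where either heads is forced or the assumed certainty was simply false.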

I think the paradox hinges on 1) the false inference of objectivity in the use of the word surprise and 2) the false assertion of omniscience by the judge. It’s like an Escher drawing. In real life, surprise cannot be predicted with certainty and the quality of unexpectedness is not an objective thing, just as expectation is not an objective thing.

Connecting the dots, expectation, intention, realism, and truth are all rooted in the firmament of sensory-motive participation. To care about what happens cannot be divorced from our causally efficacious role in changing it. It’s not just a matter of being petulant or selfish. The ontological possibility of ‘caring’ requires letters that are not in the alphabet of determinism and computation. It is computation which acts as punctuation, spelling, and grammar, but not language itself. To a computer, every word or name is as generic as a number. They can store the string of characters that belong to what we call a name, but they have no way to really recognize who that name belongs to.

*I maintain that what is beyond truth is sense: direct phenomenological participation

Two-photon interferometry and quantum state collapse

From the paper Two-photon interferometry and quantum state collapse:

“In short, for ideal measurements both experiment and standard quantum theory imply an instantaneous collapse to unpredictable but definite outcomes. The MS [measurement state] is the global form of the collapsed state with no need for a separate “process 1” or measurement postulate. The other postulates of quantum physics imply that, when systems become entangled, their observed states instantly collapse into unpredictable but definite outcomes. In particular, Schrodinger’s cat is in a nonparadoxical definite state, alive when the nucleus does not decay and dead when it does. This solution of the problem of outcomes requires no assistance from other worlds, human minds, hidden variables, collapse mechanisms, collapse postulates, or “for all practical purposes” arguments.”

I think that this is a promising study which gives support to the MSR model. Once we take the step of questioning whether there can be any such thing as measurement which is other than a kind of ordinary sensory-motor interaction (even if it is microphenomenal), the hard problem of consciousness and explanatory gap disappear.

Seeing that it is the capacity to feel which, through its interruption, gives rise to the sense of touch, and it is the sense of touch which gives rise to touchable things rather than the other way around, we should take the opportunity to go back and reinterpret all of the particle physics of the last 50 years – not because it is wrong, but because it is right for the wrong reasons.

If photons sense each other, how do we know that photons themselves are anything more than atoms sensing and instructing each other? This study fits with a model of the universe in which all phenomena throughout eternity are, on one level, a simultaneous sensory experience. Within the solitude of that Absolute experience are miniature versions of the same (such as our own human individual sense of conscious solitude). In the polarization-derived ‘spaces’ or ‘gaps’ in between these homing-monads, cat-experiences and atomic-experiences take their marching orders from each other’s presence, represented as localized ‘homing signals’. Cats and atoms alike, since they are ultimately but frozen appearances within some nesting of the global nature of sense itself (the Absolute), also receive intuitive influence from the overall event of the total interaction.

Instead of seeing photons as the foregrounded units, all of the evidence seems to point to the idea that the opposite might be true. As Hobson mentions, “The photons “knew” each others’ phase angles, despite their arbitrarily large separation. This certainly appears to be nonlocal, and indeed, the results violate Bell’s inequality, verifying nonlocality.” The so-called measurement state would make more sense as the fundamental capacity of the Absolute to pretend at photon, atom, or dead cat forms. This paper takes the step of entangling the outcome of the cat and the outcome of the particle’s decay with the overall measurement state. I think a good next step is to entangle the entire presentation of cats and particles and even ‘outcomes’ to the participant’s sensory nature. It’s all entangled, even entanglement itself. In our case, as human beings, we sense cats more directly than we can sense a particle’s decay, but not as directly as we can make sense of our own thoughts and feelings about them.

Primordial identity pansensitivity (PIP) is the proposition that the capacity to experience, to participate perceptually is the ultimate irreducible universal. In Kantian terms, the sole synthetic a priori. In relativistic terms, the maximally inclusive inertial frame. Other philosophers have used terms like Absolute, Totality, Transcendental Signifier, and Supreme Monad.

The difference between PIP and theism is that PIP does not presume a human-like, or entity-like subject associated with the Absolute. Neither is it presumed that the Absolute is a field, force, vacuum, or information-theoretic phase space. The Absolute is neither local nor nonlocal, but rather the parent of that distinction, and therefore orthogonal to it.

Under PIP, the Absolute is understood to be the meta-phoric firmament within which the three ‘verses’ are diffracted. The three verses, following a Hegelian-type dialectic of thesis, antithesis, and synthesis* are phoric (directly experienced), morphic (embodied presence), and metric (abstract relation of the other two verses). In one sense, the Absolute is more closely aligned with the phoric+morphic, i.e. the collection of all presence with no absence.

From this there is a sense of omniscience, as all times and places would be ‘here and now’. Take the universe as we know it, multiply it by eternity, see all of it from every perspective possible, and remove all of the space and time from it and we would have the PIP absolute – a timeless everythingness which transcends the difference between novelty and repetition. If mortality is marked by an unbearable lightness of being, in which all experience is either meaninglessly fleeting or meaninglessly repeating, the immortality of the Absolute is marked by an unbearable saturation of meaning. All is absolutely unique and absolutely generic at once.

Ordinary sense is, on every level, what diffracts/diverges/disentangles one state from another, and one level of sensory interpretation from another. The sensation is the fundamental physical presentation, the measurement of alienated sensations (measured as photons, atoms, bodies) is derived representation. Notions of space, time, and information are meta-representations – concepts and abstractions, which themselves reflect the unity of the Absolute, but in a skeletal and figurative way.

*note that the dialectic itself is a metric abstraction which cannot be directly experienced or embodied. The actual thesis which physically exists is the phoric thesis: sensory-motive-time. Matter-energy-space is the morphic antithesis. Entropy-Significance-Aion (eternity) is the synthesis.
