
Law of Conservation of Mystery

Law of Conservation of Mystery – Refers to the weird tendency for profound and fundamental issues to resist final resolution. Under eigenmorphism, both the microcosmic and cosmic frames (the infinitesimal and the great) relate to the fusion of chance and choice. It is as if the personal, macrocosmic range of awareness might act as a lens, bending the impersonality of the universe into a personal bubble, and in another sense, the personal bubble may project an illusion of impersonality outwardly. Both of these can be thought of not as illusions or distortions, but as a mutual relation between foreground and background which constitutes a tessellated synergy.

Both the sub-personal quirkiness of QM and the super-personal spookiness of divination (such as the I Ching or Tarot cards) exemplify that the perception of spiritual or mechanical absolutes is elusive and bound to the choice between belief and belief in disbelief. In both quantum mechanics and divination, the human participant is responsible for the interpretation – the individual is the prism which splits the beam of their interpretation between chance and choice…or the individual is responsible for remaining skeptical and resisting pseudoscientific claims. If we choose to allow choice on the cosmological level, even there, the continuum between luck which is intentionally or unintentionally fateful and karma which is divinely mechanistic reflects a difference in degree of universal approbation. The Law of Conservation of Mystery is particularly applicable to paranormal phenomena. Everything from UFOs to NDEs has passionately devoted supporters who are seen either as deluded fools stuck in a prescientific past or as prophets of enlightenment ahead of their time. What preserves that bifocal antagonism is technically eigenmorphism – it’s how different perceptual inertial frames maintain their character, but this special case of perceptual relativity is on us. It keeps us guessing and pushing further, but it also keeps us blind and stuck in our assumptions.

Superposition of the Absolute – The concept of superposition has enjoyed wide acceptance on the microcosmic level of quantum physics, but the idea of the Totality of the universe having a kind of multistable nature has not yet been widely considered as far as I know. The superposition of a wavefunction is tolerated because it helps us justify what we have measured, but any escalation of this kind of merged possibility to the macrocosm is strictly forbidden. Under PIP, the entire cosmos can be understood to be perpetually in superposition, or perhaps meta-superposition. Any event can be meaningful or meaningless according to one’s interpretation, but some events are more insistent upon meaningful interpretations than others.

Coincidence and pattern invariably count as evidence of the Absolute, whether the Absolute is regarded as mechanical law, divine will, or existential indifference. In this way, the insistence or existence of pattern can be understood as the wavefunction collapse of eternity. This is hard to grasp since eternity is the opposite of instantaneous, so that the ‘collapse’ is occurring in some sense across all time, weaving through it as a mysterious thread that pulls every participant forward into their own knowledge and delusion. On the Absolute scale, every lifetime is a single moment that echoes forever, and the echo of the forever-now into nested subroutines of smaller and smaller ‘nows’.

On the super-personal level (super-private/transpersonal/collective), when coincidence seems to become something more (synchronicity, precognition, or destiny), the collapse can be understood as a collapse on multiple nested frames at once: “Then it all made sense”, “Eureka!”, “I hit bottom”, etc. The multiplicity of conflicting possibilities can, for a moment, pop into a single focus that will resonate for a lifetime.

On the quantum level, probability is used in a particular way to explain behaviors of phenomena to which we attribute no intentional choice. Einstein’s famous objection ‘God does not play dice’ perhaps echoes a deep intuition that people have always had about the way that nature reflects a partially hidden order. This expectation is perhaps the common thread of all three epistemological branches – the theological, the philosophical, and the scientific. The value of prediction is particularly powerful for both scientific and theocratic authority as evidence of positive connection with either natural law or divine will. Science demands theories predict successfully, while religion demands prophetic promise. Under the Superposition of the Absolute, the ultimate natural law can be seen to become more flexible and porous, and the localization of divine will can be seen to have limitations and natural constraints. If PIP is to make a prediction itself, it would be to suggest that all wavefunctions share the identical, nested, non-well-founded superposition, one which can be understood as sense or perceptual relativity itself.

P, PP, PIP, MSR Disambiguation

Pansensitivity (P) proposes that sensation is a universal property.

Primordial Pansensitivity (PP) proposes that because sensation is primitive, mechanism is derived from insensitivity. Whether it is mechanism that assumes form without sensibility (materialism) or function without sensation (computationalism), they both can only view feeling as a black box/epiphenomenon/illusion.

Under PP, both materialism and computationalism make sense as partial negative images of P, so that PP is the only continuum or capacity needed to explain feeling and doing (sense-motive), objective forms and functions (mass-energy), and informative positions and dispositions (space-time).

PP says that the appearances of forms and functions are, from an absolute perspective, sensory-motive experiences which have been alienated through time and across space.

Primordial Identity Pansensitivity (PIP) adds to the Ouroboran Monism of PP (sense twisted within itself = private experience vs public bodies) by suggesting that PP is not only irreducible, but it is irreducibility itself.

PIP suggests that distance is a kind of insensitivity, so that all other primitive possibilities which are grounded in mechanism, such as information or energy, are reductionist in a way which oversignifies the distanced perspective, while anthropomorphic primitives such as love or divinity are holistic in a way which oversignifies the local perspective. Local and distant are assumed to be Cartesian opposites, but PIP maps locality and distance as the same in terms of being two opposite branches of insensitivity. Both the holistic and reductionist views ignore the production of distance which they both rely on for their perspective; both take perspective itself, perception, and relativity for granted.

MSR (Multisense Realism) tries to rehabilitate reductionism and holism by understanding them as bifocal strategies which arise naturally, each appropriate for a particular context of perceived distance. Both are near-sighted and far-sighted in opposite ways, as the subject seeks to first project anthropomorphism outward onto the world and then, following a crisis of disillusionment, seeks the opposite – to project exterior mechanism into the self. MSR invites us to step outside of the bifocal antagonism and into a balanced appreciation of the totality.

Wittgenstein in Wonderland, Einstein under Glass

If I understand the idea correctly – that is, if there is enough of the idea which is not private to Ludwig Wittgenstein that it can be understood by anyone in general or myself in particular, then I think that he may have mistaken the concrete nature of experienced privacy for an abstract concept of isolation. From Philosophical Investigations:

The words of this language are to refer to what can be known only to the speaker; to his immediate, private, sensations. So another cannot understand the language. – http://plato.stanford.edu/entries/private-language/

To begin with, craniopagus (brain conjoined) twins do actually share sensations that we would consider private.

The results of the test did not surprise the family, who had long suspected that even when one girl’s vision was angled away from the television, she was laughing at the images flashing in front of her sister’s eyes. The sensory exchange, they believe, extends to the girls’ taste buds: Krista likes ketchup, and Tatiana does not, something the family discovered when Tatiana tried to scrape the condiment off her own tongue, even when she was not eating it.

There should be no reason that it would not be technologically feasible to eventually export the connectivity which craniopagus twins experience through some kind of neural implant or neuroelectric multiplier. There are already computers that can be controlled directly through the brain.

Brain-computer interfaces that monitor brainwaves through EEG have already made their way to the market. NeuroSky’s headset uses EEG readings as well as electromyography to pick up signals about a person’s level of concentration to control toys and games (see “Next-Generation Toys Read Brain Waves, May Help Kids Focus”). Emotiv Systems sells a headset that reads EEG and facial expression to enhance the experience of gaming (see “Mind-Reading Game Controller”).

All that would be required in principle would be to reverse the technology to make them run in the receiving direction (computer>brain) and then imitate the kinds of neural connections which brain conjoined twins have that allow them to share sensations. The neural connections themselves would not be aware of anything on a human level, so it would not need to be public in the sense that sensations would be available without the benefit of a living human brain, only that the awareness could, to some extent, incite a version of itself in an experientially merged environment.

Because the success and precision of science have extended our knowledge so far beyond our native instruments, sometimes contradicting them successfully, we tend to believe that the view that diagnostic technology provides is superior to, or serves as a replacement for, our own awareness. While it is true that our own experience cannot reveal the same kinds of things that an fMRI or EEG can, I see that as a small detail compared to the wealth of value that our own awareness provides about the brain, the body, and the worlds we live in. Natural awareness is the ultimate diagnostic technology. Even though we can certainly benefit from a view outside of our own, there’s really no good reason to assume that what we feel, think, and experience isn’t a deeper level of insight into the nature of biochemical physics than we could possibly gain otherwise. We are evidence that physics does something besides collide particles in a void. Our experience is richer, smarter, and more empirically factual than what an instrument outside of our body can generate on its own. The problem is that our experience is so rich and so convoluted with private, proprietary knots, that we can’t share very much of it. We, and the universe, are made of private language. It is the public reduction of privacy which is temporary and localized…it’s just localized as a lowest common denominator.

While it is true that at this stage in our technical development, subjective experience can only be reported in a way which is limited by local social skills, there is no need to invoke a permanent ban on the future of communication and trans-private experience. Instead of trying to report on a subjective experience, it could be possible to share that experience through a neurological interface – or at least to exchange some empathic connection that would go farther than public communication.

If I had some psychedelic experience which allowed me to see a new primary color, I can’t communicate that publicly. If I can just put on a device that allows our brains to connect, then someone else might be able to share the memory of what that looked like.

It seems to me that Wittgenstein’s private language argument (sacrosanct as it seems to be among the philosophically inclined) assumes privacy as identical to isolation, rather than the primordial identity pansensitivity which I think it could be. If privacy is accomplished as I suggest, by the spatiotemporal ‘masking’ of eternity, then any experience that can be had is not a nonsense language to be ‘passed over in silence’, but rather a personally articulated fragment of the Totality. Language is only communication – intellectual measurement for sharing public-facing expressions. What we share privately is transmeasurable and inherently permeable to the Totality beneath the threshold of intellect.

Said another way, everything that we can experience is already shared by billions of neurons. Adding someone else’s neurons to that group should indeed be only a matter of building a synchronization technology. If, for instance, brain conjoined twins have some experience that nobody else has (like being the first brain conjoined twins to survive to age 40 or something), then they already share that experience, so it would no longer be a ‘private language’. The true future of AI may not be in simulating awareness as information, but in using information to share awareness. Certainly the success of social networking and MMPGs has shown us that what we really want out of computers is not for them to be us, but for us to be with each other in worlds we create.

I propose that rather than beginning from the position of awareness being a simulation to represent a reality that is senseless and unconscious, we should try assuming that awareness itself is the undoubtable absolute. I would guess that each kind of awareness already understands itself far better than we understand math or physics; it is only the vastness of human experience which prevents that understanding from being shared on all levels of itself, all of the time.

The way to understand consciousness would not be to reduce it to a public language of physics and math, since our understanding of our public experience is itself robotic and approximated by multiple filters of measurement. To get at the nature of qualia and quanta requires stripping down the whole of nature to Absolute fundamentals – beyond language and beyond measurement. We must question sense itself, and we must rehabilitate our worldview so that we ourselves can live inside of it. We should seek the transmeasurable nature of ourselves, not just the cells of our brain or the behavioral games that we have evolved as one particular species in the world. The toy model of consciousness provided by logical positivism and structural realism is, in my opinion, a good start, but in the wrong direction – a necessary detour which is uniquely (privately?) appropriate to a particular phase of modernism. To progress beyond that I think requires making the greatest cosmological 180 since Galileo. Einstein had it right, but he did not generalize relativity far enough. His view was so advanced in the spatialization of time and light that he reduced awareness to a one-dimensional vector. What I think he missed is that if we begin with sensitivity, then light becomes a capacity with which to modulate insensitivity – which is exactly what we see when we share light across more than one slit – a modulation of masked sensitivity shared by matter independently of spacetime.

Light, Vision, and Optics

In the above diagram, the nature of light is examined from a semiotic perspective. As with Peircean sign trichotomies, and semiotics in general, the theme of interpretation is deconstructed as it pertains to meanings, interpreters, and objects. In this case the object or sign is “Optics”. This would be the classical, macroscopic appearance of light as beams or rays which can be focused and projected. Color wheels and primary colors are among the tools we use to orient our own human experience of vision with the universal nature of material illumination.

On the other side of the bottom of the triangle is “Vision”. This is the component which gives vision a visual quality. The arrows leading to and from vision denote the incoming receptivity from optics and the outgoing engagement toward “Light”. When we see, our awareness is informed from the bottom up and the top down. Seeing rides on top of the low level interactions of our cells, while looking is our way of projecting our will as attention to the visual field.

While optics dictate measurable relationships among physical properties of light on the macroscopic scale, ‘light’ is the hypothetical third partner in the sensory triad. Light is both the microphysical functions of quantum electrodynamics and the absolute frame of perceptual relativity from which various perceptual inertial frames emerge. The span between light and optics is marked by the polar graph and label “Image” to describe the role of resemblance and relativity. Image is a fusion of the cosmological truth of all that can be seen and illuminated (light), with the localization to a particular inertial frame (optics-in-space), and recapitulation by a particular interpreter – who is a time-feeler of private experience.

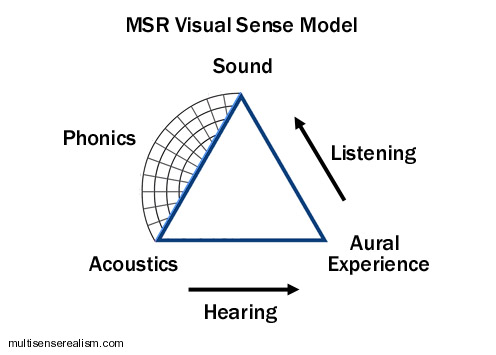

This triangle schema is not limited to light. Any sense can be used with varying degrees of success:

The overall picture can be generalized as well:

Note that the afferent and efferent sides of the triangle have a push-pull orientation, while the quanta side is an expanding graph. This is due to the difference between participation within spacetime, which is proprietary feeling, and the measured positions between participants on multiple scales or frames of participation. Sense is the totality of experience from which subjective extractions are derived. The physical mode describes the relation between each subjective experience and between other frames of subjective experience as representational tokens: bodies or forms. It’s all a kind of trail of breadcrumbs which leads back to the source, which is originality itself.

Pink Floyd, Money

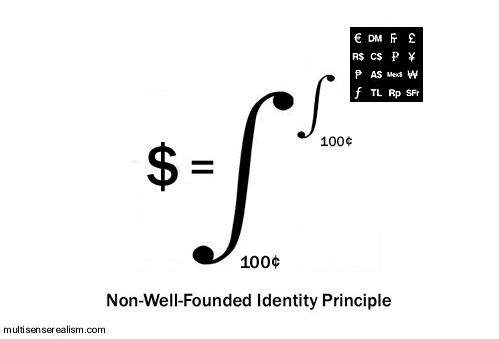

Delving deeper into the Non-Well-Founded Identity Principle that I proposed earlier, here is an illustration which applies the principle to money – US dollars specifically.

What I intend to show is that a dollar is defined by the integral which spans a continuum from the most literal (a dollar “simply is” one hundred cents) to the most figurative. The most figurative end of the continuum is another continuum – an orthogonal continuum which contains the entire top level continuum from what is meant literally as one hundred cents to every other meaning, association, context and contingency, from every perspective, throughout eternity.

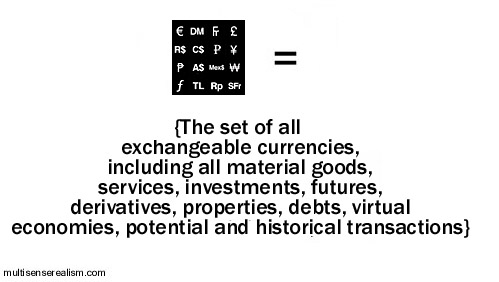

This stack of figurative extensions can yield many loose morphological analogs – electron shell type morphology, stepped pyramid, color wheel mandalas, etc. The closest figures to associate with the dollar would be other kinds of currencies: Euros, Deutschmarks, Yen, etc. These are all of the things which are like dollars, but not dollars, and which dollars can actually buy. The associations radiate out from there to include references to any transaction with dollars. Ultimately this would stretch to encompass any real or imagined transaction which has ever occurred, every transaction which could occur, or could not occur…until the set includes the entire contents of eternity (aka, the Absolute Inertial Frame, or the Totality*).

Once you recover from that (seriously, come back later…I have already had one person say they got a headache thinking about this), then have a look at the diagram below. Same thing, only from the Absolute perspective. Borrowing from the Dark Side of The Moon Prism, I attempt to show how the cosmos is the continuum of self-individuating, nested, Ouroboran Monism. Rather than the Blue Sky icon representing the Totality, it represents any individual thing as a generic presence.

In the top diagram, I show how the individuality of a dollar implies the figuratively nested Totality, and in this diagram, it is the Totality which is considered the individual identity which is spread across the continuum of its own absolutely individuated cardinality or literal granularity.

Another point to make about the continuum is that the bottom limits of the integrals represent that which is common to each and every unity. The cents which ‘make up’ a dollar represent the quantitative definition which describes any particular dollar, and therefore all dollars. The top ends of the integrals represent the opposite definitions – that which is increasingly unique to a particular event in time or associated with a unique group of less particular experiences (qualia, concepts).

You’re welcome 😉

Non-Well-Founded Identity Principle

In an effort to clarify this concept, I wanted to add an update:

Edit 11/02/2018

The point of the Non-Well-Founded Identity Principle is to characterize identity in a way which I propose is more accurate and makes fewer presumptions. Rather than following our scientific impulses to define all things in single, final ways, we can step back and instead integrate the full spectrum of epistemological and ontological nuances into our descriptions of math, logic, and science. What I propose here with the Non-Well-Founded Identity Principle is a redefinition of the identity principle to one which factors in the reality of perception, which I propose is not only a bottom up construction, but also a diffraction from the totality down. Unlike artificial intelligence, natural intelligence is a kind of prism which opens up the ‘light’ of consciousness to its deeper nature, using both analytical steps and intuitive synthesis.

Rather than saying A=A (that everything is itself), I suggest that every phenomenon is:

- A spectrum of presentations/qualities/properties which can be said to be bounded on two ends.

- On one end, all things are bounded by a conserved identity. They are simply what they appear to be in whatever perspective and context they appear.

- On the other end, all things are a spectrum of resemblances/similarities/associations/dissimilarities that can be navigated poetically and reveal profound dimensions that echo the totality of experience.

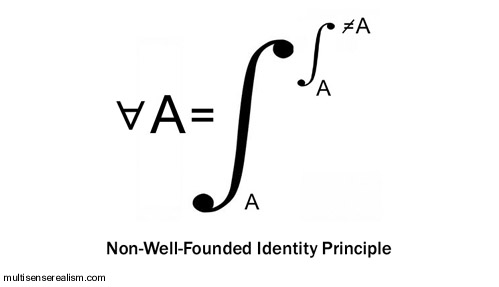

In other words, rather than A=A, I propose instead that A equals a spectrum that runs from self equivalence (A=A) to a second spectrum of similarities that ultimately include diametric dissimilarity, i.e. running from A=A to A~!=A.

A= {the spectrum of identity running from A to (a nested spectrum of identity running from almost totally A to almost totally not A)}

This idea is extended further below so that “A” as a unit of identity is replaced by sense itself, so that any sense experience is a spectrum that runs from experience of a purely particular experience to the nested spectrum that runs from all particular experiences to all experiences to the particular experiences that define the sense spectrum itself.

End of update.

Beginning of previous article:

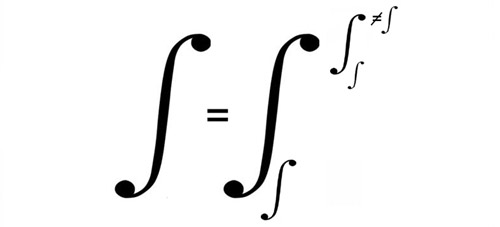

Here’s a crazy little number that I like to call the Non-Well-Founded Identity Principle. It woke my boiling brain up a few times last night, so I present it now in its raw state of lunacy.

The idea here is “For All A, A equals the integral between A and (the integral between A and not A)”.

This represents a refinement of trivial identity, A=A, to reflect the grounding of all propositions in the Absolute inertial frame of pansensitivity. The nested integral specifies that all integrations are themselves defined as that which is not disintegrated. Any object, subject, or sensory presentation or representation (A) is itself, and it is also the range of all possible relations, literal, figurative, and otherwise, between itself and all that is not itself (≠A).
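One way to typeset the statement above is sketched below. This is only a notational reading: the integral sign is used figuratively, as in the text, with no integrand or measure specified, and the placement of A as the lower limit with the nested integral as the upper limit is an assumption about how the sentence should be read.

```latex
% Notational sketch, not a well-defined calculus expression.
% Assumption: "between A and (...)" is read as lower limit A, upper limit (...).
\[
  \forall A : \quad A \;=\; \int_{A}^{\,\int_{A}^{\neq A}}
\]
```

Read from the inside out, the upper limit is itself the range from A to all that is not A, so A appears within its own definition, which is the non-well-founded part of the principle.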

This comes out of the idea that sense is the Explanatory Gap, i.e. the gap between private experience and public bodies is a non-well-founded set (non-well-founded sets can contain themselves as members) in which primordial pansensitivity* defines its nested child sense experiences in terms which are unique, generic, and everything in between, depending on how the local perceptual inertia frames it.

*pansensitivity is plain old feeling, sensing, being and doing, but extended and universalized beyond Homo sapiens, as well as physics and arithmetic truth. Ontology itself – being; the is-ness and it-ness of all phenomena can be reduced further through the Non-Well-Founded Identity Principle, under which ontology becomes the nested gap between phenomenology and the sense of its own absence. This is a very tricky shell game, but it is not intended as a trick or a game. Said another way, ‘privacy is the difference between privacy and the difference between private and public experience.’

Applied to philosophy of mind, we would get: Naive realism equals the difference between naive realism and (the difference between naive realism and reductionism). Another one would be Sense equals the sense of the difference between the sense and (the difference between sense and logic). It could be said that X=/(=/≠) X, so that any number is a straight isomorphism with itself, but it is also a superposition of any potential combinations with or relativity upon any and all X that it is not.

The reductio ad absurdum can be seen in this second expression:

in which integration itself is the integral between integration and disintegration. Every set or process is defined by its own self-same initiation and termination.

Is this all insipid tautology? Is it another way of catching a glimpse of Heisenberg uncertainty or Gödel incompleteness through a fun house mirror? I don’t know much about calculus, so there may be a more conventional way of expressing these kinds of relations, but in the meantime, to me, it’s an absolutely interesting way of modeling the absolute: A universal capacity to simultaneously universalize and de-universalize (proprietize) the universal experience.

Why PIP (and MSR) Solves the Hard Problem of Consciousness

The Hard Problem of consciousness asks why there is a gap between our explanation of matter, or biology, or neurology, and our experience in the first place. What is it that even suggests to us that there should be a gap, and why should there be such a thing as experience to stand apart from the functions of that which we can explain?

Materialism only miniaturizes the gap and relies on a machina ex deus (intentionally reversed deus ex machina) of ‘complexity’ to save the day. An interesting question would be, why does dualism seem to be easier to overlook when we are imagining the body of a neuron, or a collection of molecules? I submit that because miniaturization and complexity challenge the limitations of our cognitive ability, we find it easy to conflate that sort of quantitative incomprehensibility with the other incomprehensibility being considered, namely aesthetic* awareness. What consciousness does with phenomena which pertain to a distantly scaled perceptual frame is to under-signify them. They become less important, less real, less worthy of attention.

Idealism only fictionalizes the gap. I argue that idealism makes more sense on its face than materialism for addressing the Hard Problem, since material would have no plausible excuse for becoming aware or being entitled to access an unacknowledged a priori possibility of awareness. Idealism however, fails at commanding the respect of a sophisticated perspective since it relies on naive denial of objectivity. Why so many molecules? Why so many terrible and tragic experiences? Why so much enduring of suffering and injustice? The thought of an afterlife is too seductive of a way to wish this all away. The concept of maya, that the world is a veil of illusion is too facile to satisfy our scientific curiosity.

Dualism multiplies the gap. Acknowledging the gap is a good first step, but without a bridge, the gap is diagonalized and stuck in infinite regress. In order for experience to connect in some way with physics, some kind of homunculus is invoked, some third force or function interceding on behalf of the two incommensurable substances. The third force requires a fourth and fifth force on either side, and so forth, as in a Zeno paradox. Each homunculus has its own Explanatory Gap.

Dual Aspect Monism retreats from the gap. The concept of material and experience being two aspects of a continuous whole is the best one so far – getting very close. The only problem is that it does not explain what this monism is, or where the aspects come from. It rightfully honors the importance of opposites and duality, but it does not question what they actually are. Laws? Information?

Panpsychism toys with the gap. Depending on what kind of panpsychism is employed, it can miniaturize, multiply, or retreat from the gap. At least it is committing to closing the gap in a way which does not take human exceptionalism for granted, but it still does not attempt to integrate qualia itself with quanta in a detailed way. Tononi’s IIT might be an exception in that it is detailed, but only from the quantitative end. The hard problem, which involves justifying the reason for integrated information being associated with a private ‘experience’ is still only picked at from a distance.

Primordial Identity Pansensitivity, my candidate for nomination, uses a different approach than the above. PIP solves the hard problem by putting the entire universe inside the gap. Consciousness is the Explanatory Gap. Naturally, it follows serendipitously that consciousness is also itself explanatory. The role of consciousness is to make plain – to bring into aesthetic evidence that which can be made evident. How is that different from what physics does? What does the universe do other than generate aesthetic textures and narrative fragments? It is not awareness which must fit into our physics or our science, our religion or philosophy, it is the totality of eternity which must gain meaning and evidence through sensory presentation.

*Is awareness ‘aesthetic’? That we call a substance which causes the loss of consciousness a general anesthetic might be a serendipitous clue. If so, the term local anesthetic as an agent which deadens sensation is another hint about our intuitive correlation between discrete sensations and overall capacity to be ‘awake’. Between sensations (I would call sub-private) and personal awareness (privacy) would be a spectrum of nested channels of awareness.

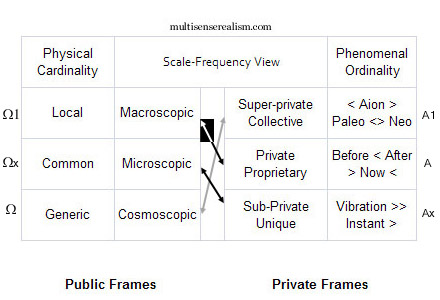

Charting It All

In an effort to provide a more straightforward view of pansensitivity and eigenmorphism, the chart above organizes all phenomena in the cosmos by scale of publicly extended body length and frequency range of privately experienced times. Going left to right (Metaphorically, Occident to Orient), the first and second column denote the public, physical scopes (perceptual inertial frames) according to cardinality and size. The bottom left frames (Ω) correspond to the outermost types of physical phenomena, i.e. absolutely gigantic or absolutely infinitesimal. This reflects the aesthetic intuition by which the atom comes out having more in common morphologically and dynamically with a solar system than a tree or coral reef, despite being at opposite ends of the scale of our awareness. The Ω range of frames is the envelope of physicality, where physical and mathematical ‘laws’ meet the most universally public perceptions.

Our awareness is extended technologically, which broadens our view of the public universe. However, since the awareness being extended is primarily visual and somatic (‘tangible’-kinesthetic rather than tactile), the telescoping of our sensory awareness is also narrowing our depth of field within the private (phenomenological) side of physics. My conjecture is that because of the nature of perceptual relativity, the more we focus on the outer contexts, not only do we not see the private experience in the universe on these distant scales, but also our entire worldview will, by default, adjust to recontextualize the local experiences of the self. The fallacy of the instrument (if you have only a hammer, everything looks like a nail) might arise through this kind of empathetic feedback loop. This is likely to extend into so-called ‘supernatural’ phenomena, which explains the increases in magnitude, frequency, and connectedness of coincidence experienced by subjects through altered states of consciousness. The higher up on the right hand column, the more large patterns of synchronicity, with deeper resonance (A1), are available for direct personal experience (A).

By contrast, the lower down on the Oriental, right hand scale we go, the more the needle of synchronicity tips toward mere statistical coincidence and the more top-down intuition, imagination, and eidetic narrative are collapsed into the stepped logic of bottom-up causality. The numbering schema is confusing, but intentional. The use of A in the middle row on the right side denotes that sense is always anchored centripetally. Perceptual capacity radiates as a range, often a literal circular, conic, or spherical perimeter, of awareness, but also sensitivity radiates figuratively as nested channels or layered modalities of sense.

The use of A1 and Ax above and below A respectively implies the hierarchical pull from the superlative top down to the personal, and the plummet from the personal middle down to the bottom. Ax would be the opposite of A1: insignificant, low status, shame and indignity. Looking at the left side of the chart, the numbering scheme is even more confusing, but it is meant to emphasize the multiple levels of opposition that characterize the public and private aspects of physics-sense. The A1 range is the most universal private experience (the ultimate being experience itself), which is meta-phorical. Experiences are associated with each other through metaphor, with the most tightly isomorphic metaphor being imitation or repetition. The higher up on the A column we go, the more latitude there is in recognizing common associations. Pareidolia and Apophenia are examples of having the aperture disproportionately dilated to the super-private, which becomes unsupportable within human society (delusions of grandeur, ideas of reference, mania, etc.).

Back to the Omega column on the left, the Ω1 is a different kind of magnificence than the private rapture of the Absolute. The public side is not centripetally oriented, but linear and circular. There is no radiant center, only jumps and slides. The Alpha/Aleph side in the East fills the gaps, it infers and elides, it puts two and two together. The Ω1 is mutation and fluke. Unintentional singularity. Its uniqueness is simply an inevitable accumulation of imperfectly repeating behaviors, so that the wonder of biology through evolution can be examined correctly in Darwinian terms. These are terms of the exteriors, however. Regardless of how complex and convoluted the patterns, they are patterns of insensitivity rather than awareness, automaticity rather than authenticity. This is individuality from the outside in – stochastic, social, generational rather than individual.

The cells of the bottom row have as much in common as the cells of the top row are polar opposites, but they are also skewed (this is what the arrows in the center are supposed to mean). This is what I call eigenmorphism. A to Ω1 has a half-black, half-white arrow, showing the relation between the human mind and its hominid body. This is a maximal polarization, or so it seems to us. The black arrow runs from the Microscopic Ωx to the Sub-private Ax, which have the x in common, connoting the strong relation between mechanical appearances on the molecular level and the recursiveness of awareness in its least signifying, quantitative form. This is contrary to the idea that vibrations or energy are what we are; rather, vibrating is what the various parts of our sub-private experience do – jiggling or wagging from position to disposition, from incident to co-incident.

The grey arrow from A1 to Ω points to what I call the profound edge of the continuum. This would be the level at which the Totality is an unbroken, Ouroboran monad. This is what happens ‘behind our backs’, hypnotically through evanescence. It is significance reclaimed and re-membered after having been diffracted into the entropy of spacetime. By contrast, the black and white split arrow corresponds to the ‘pedestrian fold’ – the level of the monad which appears most polarized and least evanescent – the terrestrial aesthetic of ‘ordinary’ experience.

In the top chart I have limited cardinality to the public side and ordinality to the private side to show the relation between morphic scale and phoric frequency. Privacy runs from first to last (ordinal); publicity runs from astronomically numerous to few (cardinal).

Compare with Frame Set View:

Why Computers Can’t Lie and Don’t Know Your Name

What do the Hangman Paradox, Epimenides Paradox, and the Chinese Room Argument have in common?

The underlying Symbol Grounding Problem common to all three is that from a purely quantitative perspective, a logical truth can only satisfy some explicitly defined condition. The expectation of truth itself being implicitly true, (i.e. that it is possible to doubt what is given) is not a condition of truth, it is a boundary condition beyond truth*. All computer malfunctions, we presume, are due to problems with the physical substrate, or the programmer’s code, and not incompetence or malice. The computer, its program, or binary logic in general cannot be blamed for trying to mislead anyone. Computation, therefore, has no truth quality, no expectation of validity or discernment between technical accuracy and the accuracy of its technique. The whole of logic is contained within the assumption that logic is valid automatically. It is an inverted mirror image of naive realism. Where a person can be childish in their truth evaluation, overextending their private world into the public domain, a computer is robotic in its truth evaluation, undersignifying privacy until it is altogether absent.

Because computers can only report a local fact (the position of a switch or token), they cannot lie intentionally. Lying involves extending a local fiction to be taken as a remote fact. When we lie, we know what a computer cannot guess – that information may not be ‘real’.

When we say that a computer makes an error, it is only because of a malfunction on the physical or programmatic level, therefore it is not false, but a true representation of the problem in the system which we receive as an error. It is only incorrect in some sense that is not local to the machine, but rather local to the user, who makes the mistake of believing that the output of the program is supposed to be grounded in their expectations for its function. It is the user who is mistaken.

It is for this same reason that computers cannot intend to tell the truth either. Telling the truth depends on an understanding of the possibility of fiction and the power to intentionally choose the extent to which the truth is revealed. The symbolic communication expressed is grounded strongly in the privacy of the subject as well as the public context, and only weakly grounded in the logic represented by the symbolic abstraction. With a computer, the hierarchy is inverted. A Turing Machine is independent of private intention and public physics, so it is grounded absolutely in its own simulacra. In Searle’s (much despised) Chinese Room Argument – the conceit of the decomposed translator exposes how the output of a program is only known to the program in its own narrow sensibility. The result of the mechanism is simply a true report of a local process of the machine which has no implicit connection to any presented truths beyond the machine…except for one: Arithmetic truth.

Arithmetic truth is not local to the machine, but it is local to all machines and all experiences of correct logical thought. This is an interesting symmetry, as the logic of mechanism is both absolutely local and instantaneous and absolutely universal and eternal, but nothing in between. Every computed result is unique to the particular instantiation of the machine or program, and universal as a Turing emulable template. What digital analogs are not is true or real in any sense which relates expressly to real, experienced events in spacetime. This is the insight expressed in Korzybski’s famous maxim ‘The map is not the territory’ and in the Use-Mention distinction, where using a word intentionally is understood to be distinct from merely mentioning the word as an object to be discussed. For a computer, there is no map-territory distinction. It’s all one invisible, intangible mapitory of disconnected digital events.

By contrast, a person has many ways to voluntarily discern territories and maps. They can be grouped together, such as when the acoustic territory of sound is mapped to the emotional-lyric territory of music, or the optical territory of light is mapped as the visual territory of color and image. They can be flipped so that the physics is mapped to the phenomenal as well, which is how we control the voluntary muscles of our body. For us, authenticity is important. We would rather win the lottery than just have a dream that we won the lottery. A computer does not know the difference. The dream and the reality are identical information.

Realism, then, is characterized by its opposition to the quantitative. Instead of being pegged to the polar austerity which is autonomously local + explicitly universal, consciousness ripens into the tropical fecundity of the middle range. Physically real experience is in direct contrast to digital abstraction. It is semi-unique, semi-private, semi-spatiotemporal, semi-local, semi-specific, semi-universal. Arithmetic truth lacks any non-functional qualities, so that using arithmetic to falsify functionalism is inherently tautological. It is like asking an armless man to raise his hand if he thinks he has no arms.

Here’s some background stuff that relates:

The Hangman Paradox has been described as follows:

A judge tells a condemned prisoner that he will be hanged at noon on one weekday in the following week but that the execution will be a surprise to the prisoner. He will not know the day of the hanging until the executioner knocks on his cell door at noon that day. Having reflected on his sentence, the prisoner draws the conclusion that he will escape from the hanging. His reasoning is in several parts. He begins by concluding that the “surprise hanging” can’t be on Friday, as if he hasn’t been hanged by Thursday, there is only one day left – and so it won’t be a surprise if he’s hanged on Friday. Since the judge’s sentence stipulated that the hanging would be a surprise to him, he concludes it cannot occur on Friday. He then reasons that the surprise hanging cannot be on Thursday either, because Friday has already been eliminated and if he hasn’t been hanged by Wednesday night, the hanging must occur on Thursday, making a Thursday hanging not a surprise either. By similar reasoning he concludes that the hanging can also not occur on Wednesday, Tuesday or Monday. Joyfully he retires to his cell confident that the hanging will not occur at all. The next week, the executioner knocks on the prisoner’s door at noon on Wednesday, which, despite all the above, was an utter surprise to him. Everything the judge said came true.
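The prisoner’s day-by-day elimination can be replayed mechanically. What follows is only a rough sketch of that backward induction, not a resolution of the paradox; the function name `prisoner_elimination` and the variable names are made up for illustration, and the judge’s actual choice of Wednesday is taken from the story above.

```python
DAYS = ["Monday", "Tuesday", "Wednesday", "Thursday", "Friday"]

def prisoner_elimination(days):
    """Replay the prisoner's backward induction (hypothetical helper).

    Returns the days in the order he rules them out.
    """
    candidates = list(days)  # days he still considers possible
    eliminated = []
    while candidates:
        # If every earlier candidate passes without a hanging, the last
        # remaining candidate is the only option left, so he would expect
        # it and it could not be a 'surprise'. He strikes it and repeats.
        eliminated.append(candidates.pop())
    return eliminated

eliminated = prisoner_elimination(DAYS)
remaining = [day for day in DAYS if day not in eliminated]

# The judge's actual choice in the story.
actual_day = "Wednesday"

# Having ruled out every day, the prisoner expects nothing on any of them,
# so whichever day the knock comes, it is unexpected.
surprised = actual_day not in remaining

print("Ruled out, in order:", eliminated)
print("Days he still expects:", remaining)
print("Surprised on", actual_day + "?", surprised)
```

The loop never consults anything like an expectation; it only shortens a list. The ‘surprise’ appears only when the empty list is compared with what actually happens.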

1) The conclusion “I won’t be surprised to be hanged Friday if I am not hanged by Thursday” creates another proposition to be surprised about. By leaving the condition of ‘surprise’ open ended, it could include being surprised that the judge lied, or any number of other soft contingencies that could render an ‘unexpected’ outcome. The condition of expectation isn’t an objective phenomenon, it is a subjective inference. Objectively, there is no surprise since objects don’t anticipate anything.

2) If we want to close in tightly on the quantitative logic of whether deducibility can be deduced – given five coin flips and a certainty that one will be heads, each successive tails coin flip increases the odds that one of the remaining flips will be heads. The fifth coin will either be 100% likely to be heads, or will prove that the certainty assumed was 100% wrong.
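The five-flip claim can be made concrete with a little arithmetic. This is a minimal sketch under assumptions that are mine rather than the post’s: the coin is fair, flips are independent, and the ‘certainty that one will be heads’ is treated as conditioning on at least one heads among the flips still to come. It reads the claim as the chance that the very next flip is heads. The function name `p_next_heads` is hypothetical.

```python
from fractions import Fraction

def p_next_heads(remaining):
    """P(next flip is heads | at least one of the `remaining` fair flips is heads).

    Assumes a fair coin and independent flips (an illustrative assumption).
    """
    if remaining < 1:
        raise ValueError("need at least one remaining flip")
    p_all_tails = Fraction(1, 2) ** remaining
    # 'Next is heads' already guarantees 'at least one heads', so the joint
    # probability is 1/2; divide by the probability of the guarantee holding.
    return Fraction(1, 2) / (1 - p_all_tails)

TOTAL_FLIPS = 5

# odds[k] is the chance the next flip is heads after k tails in a row.
odds = [p_next_heads(TOTAL_FLIPS - tails_seen) for tails_seen in range(TOTAL_FLIPS)]

for tails_seen, p in enumerate(odds):
    print(f"after {tails_seen} tails: P(next is heads) = {p}")
```

Under these assumptions the chance climbs with each tails (16/31, 8/15, 4/7, 2/3) and reaches 1 on the fifth flip. If that flip still comes up tails, the assumed certainty was simply false.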

I think the paradox hinges on 1) the false inference of objectivity in the use of the word surprise and 2) the false assertion of omniscience by the judge. It’s like an Escher drawing. In real life, surprise cannot be predicted with certainty and the quality of unexpectedness is not an objective thing, just as expectation is not an objective thing.

Connecting the dots, expectation, intention, realism, and truth are all rooted in the firmament of sensory-motive participation. To care about what happens cannot be divorced from our causally efficacious role in changing it. It’s not just a matter of being petulant or selfish. The ontological possibility of ‘caring’ requires letters that are not in the alphabet of determinism and computation. It is computation which acts as punctuation, spelling, and grammar, but not language itself. To a computer, every word or name is as generic as a number. Computers can store the string of characters that belongs to what we call a name, but they have no way to really recognize who that name belongs to.
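As a small illustration of how generic a stored name is, here is a sketch in Python. The name "Ada" is an arbitrary example of my own choosing. The point is only that the same stored data reads equally well as characters, as bytes, or as one plain integer, and nothing in the representation refers to a person.

```python
name = "Ada"  # arbitrary example name, not from the post

# The 'name' as the machine holds it: a sequence of code points...
code_points = [ord(ch) for ch in name]

# ...or a run of bytes...
as_bytes = name.encode("utf-8")

# ...or, just as validly, one integer built from those bytes.
as_integer = int.from_bytes(as_bytes, "big")

# Converting back recovers the characters exactly; no information about
# who the name belongs to was ever stored, so none is lost or gained.
round_trip = as_integer.to_bytes(len(as_bytes), "big").decode("utf-8")

print(code_points)
print(as_integer)
print(round_trip)
```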

*I maintain that what is beyond truth is sense: direct phenomenological participation
