
Philosophy of Mind Flowchart

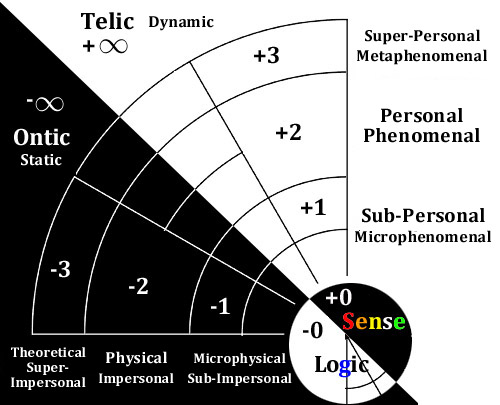

The idea here is that if we want to take the full spectrum of phenomena into account, we have to either begin with a reductionist realism and work upward, or a holistic idealism and work downward.

When we suppose that consciousness is a phenomenon that arises out of unconscious phenomena, we are saying that through some act of emergence (generally by complexity), the mechanism in question (generally a physical or computational mechanism) becomes enchanted with itself. In this case, as David Chalmers famously points out, there would have to be some threshold beyond which it would be impossible to tell the difference between a real person and a machine which acts just like a real person (a philosophical zombie). Finding this unacceptable, he suggests instead that some variety of panpsychism should be explored, including perhaps what I would call a promiscuous or ‘leaky’ panpsychism in which devices such as thermostats would have to be considered aware in some sense.

Finding both of these alternatives unacceptable, I suggest that we move over to the right side and begin with a downward facing ideal absolute. For the spiritually inclined, this could be called by any number of theistic names; however, it can also be conceived of equally well in completely non-spiritual, atheistic terms. When we suppose that awareness itself is inescapable and inevitable in all possible or theoretical universes, we are saying that through some divergence or illusion, awareness takes on a temporary solid appearance. In MSR, I suggest that this is a more plausible option than brute emergence from nothingness…modulated constraint within everythingness.*

Rather than positing an appeal to future scientific understanding to explain the emergence of aesthetic realism from mechanism, the divergence of mechanism from total awareness can be made palatable through a nested modulation of insensitivity. This amounts to intentionally partitioning intention itself so that it appears unintentional, given a certain amount of insensitivity. This could be viewed either in the religious sense of ‘God’s divine plan is not visible to us’, or in a more conservative sense of ‘Shit happens coincidentally, but coincidental shit also happens to be meaningful from some perspective’.

If anyone is interested in what the crazy pink cone and all that is, I can explain in more detail, but briefly, if we take the MSR road from disenchanted idealism (the conservative ‘Shit happens’ option), then instead of the Chalmers dilemma of zombies vs leaky panpsychism, we get a continuum in which local sense is selectively blinded to the sense of non-human experiences, through a combination of frame rate mismatch (time scale differences cause entropy and local sense approximates) and distance (literal spatial scale difference, as well as experiential unfamiliarity).**

The other ten dollar words there, ‘tessellated monism’ and ‘eigenmetric diffraction’, both refer to the juxtaposition of sensitivity and insensitivity, through which a kind of metabolism of accumulating significance (solitrophy) proceeds in the face of fading sense (entropy) and fading motive (gravity).

*I call this cosmology the Sole Entropy Well hypothesis and it has to do with reversing Boltzmann’s solution to Loschmidt’s paradox so that entropy is a bottomless absolute, like c, in which local ranges of entropy and extropy stretch and multiply in a fractal-like reproduction.

**I call this aspect of MSR Eigenmorphism, which has to do with things appearing to be more doll-like and less familiar from a distance. This makes, for example, the presence of atoms and solar systems in our experience more similar to each other than either of them seems like a tree or a cell. The limits of our perception coincide with the simplicity of ontology, and they are, in a sense, the same thing (given eigenmorphism). As a rule of thumb, distance = the significance of insignificance.

The Sound and Style of Consciousness

In music, timbre, also known as tone color or tone quality from psychoacoustics, is the quality of a musical note or sound or tone that distinguishes different types of sound production, such as voices and musical instruments, string instruments, wind instruments, and percussion instruments. The physical characteristics of sound that determine the perception of timbre include spectrum and envelope.

a signal with its envelope marked in red

What I remember from my 7th grade general music class about timbre is that it is what made modern pop and rock different from other forms of music. All of those sound effects that become possible with electric amplification allow us to transform the same kinds of melodies that have always been a part of music into new kinds of sonic textures. Any song can be made into a heavy metal or punk song by cranking up the amplitude and distortion and manipulating the tempo.

The Wiki gives a list of subjective experiences and objective acoustic properties, such as:

| Subjective | Objective |
| --- | --- |
| Vibrato | Frequency modulation |
| Tremolo | Amplitude modulation |
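For readers who want the ‘Objective’ column spelled out, here is a minimal sketch of the two modulations as operations on a plain sine tone. The sample rate, the 440 Hz carrier, and the function names are my own illustrative assumptions and do not come from the Wiki list.

```python
import math

SAMPLE_RATE = 8000  # samples per second (assumed, for illustration only)

def tremolo(carrier_hz, rate_hz, depth, seconds):
    """Amplitude modulation: the loudness wobbles while the pitch stays fixed."""
    samples = []
    for i in range(int(SAMPLE_RATE * seconds)):
        t = i / SAMPLE_RATE
        gain = 1.0 + depth * math.sin(2 * math.pi * rate_hz * t)
        samples.append(gain * math.sin(2 * math.pi * carrier_hz * t))
    return samples

def vibrato(carrier_hz, rate_hz, deviation_hz, seconds):
    """Frequency modulation: the pitch wobbles while the loudness stays fixed."""
    samples = []
    for i in range(int(SAMPLE_RATE * seconds)):
        t = i / SAMPLE_RATE
        # Phase is the integral of the instantaneous frequency
        # carrier_hz + deviation_hz * cos(2*pi*rate_hz*t).
        phase = (2 * math.pi * carrier_hz * t
                 + (deviation_hz / rate_hz) * math.sin(2 * math.pi * rate_hz * t))
        samples.append(math.sin(phase))
    return samples

am = tremolo(440, 5, 0.5, 1.0)
fm = vibrato(440, 5, 10, 1.0)
print("tremolo peak:", max(abs(x) for x in am))  # envelope swells above 1.0
print("vibrato peak:", max(abs(x) for x in fm))  # envelope never exceeds 1.0
```

The numbers only describe the envelope and the phase. Nothing in either list of samples says what a wavering voice sounds like.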

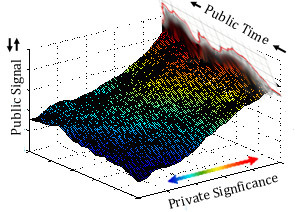

I think that this is a clue that timbre can be used meaningfully as a jumping off point for understanding subjectivity and consciousness. If we think of the red signal envelope as a cross section of a 3D prismatic glacier behind it, the axis of that figurative third dimension would be where private significance (aesthetic quality) intersects public spacetime.

I’m not saying that qualia can literally be reduced to a kind of perpendicular meta-spacetime, but that it can be figuratively thought to cast a shadow which is measurable in those terms. The public universe is flat in comparison to the private universe, even though any particular private experience of eternity is of course orders of magnitude narrower than the totality of all experience. The full extent of our own lives and the lives of others is hidden by the constraint of our insensitivity. Our attention is directed to public facing events, to places and times outside of our private here and now.

Timbre at least recognizes the dipole of subjective and objective dimensions of phenomena and the sensible link between them. Our Western approach of psychoacoustics still reduces the subjective psycho- qualities to a mechanical model which is presumed to be driven by the acoustics only, but what I suggest is that acoustic mechanics are themselves micro-aesthetic experiences which are shared on all phenomenal levels (sub-personal, personal, and super-personal).

Timbre is a word which has been called “the psychoacoustician’s multidimensional waste-basket category for everything that cannot be labeled pitch or loudness”. The difference between a recording of a flute and a recording of a guitar playing the same song could be described in terms of timbre. Each instrument has its own idiosyncratic mixture of tonal and extra-tonal noise-like qualities. When we talk about playing a song on the piano, we are treating the melody as the object. What is being played is an abstract concept of sequenced notes, but what is bringing that abstraction into a concretely realized experience is the acoustic and artistic qualities of the instrument and the performance.

A font or typeface can be seen in a similar way. The invention of customizable fonts was one of the favorite features of early word processors. Instead of being locked into a particular typewriter’s look, the consumer could now make their printed output look more like published text. Font designers had to painstakingly build the look of each letter in the character set (since a computer would not be much good at guessing what a Helvetica style might be). Soon the entire typographic palette of styles and sizes was available, bringing into sharp distinction the difference between the alphanumeric data (ASCII text), and the font (character set). We can understand that just as the melody of a song sounds different when it is played on a flute than when it is blasting out of a distorted electric guitar, a phrase written in Times New Roman carries a different meaning when it is written in Comic Sans. The computer, however, does not have any need to differentiate. It doesn’t care what font you write a program in, just as sheet music doesn’t carry instructions for a flute to sound like a flute.
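As a small illustration of that split between alphanumeric data and font, here is a sketch in Python. The `StyledText` class and `data_bits` helper are hypothetical names I made up for this example, not part of any real word processor.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class StyledText:
    text: str  # the alphanumeric data (ASCII)
    font: str  # presentation metadata, kept in a separate field

def data_bits(styled):
    """Return the text as a string of bits, ignoring the font entirely."""
    return "".join(f"{byte:08b}" for byte in styled.text.encode("ascii"))

formal = StyledText("Meet me at noon", "Times New Roman")
casual = StyledText("Meet me at noon", "Comic Sans")

print(data_bits(formal) == data_bits(casual))  # True: identical data
print(formal == casual)                        # False: only the font label differs
```

To the machine, the font is just one more label to store and compare. The difference in tone between the two phrases exists only for whoever reads the rendered page.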

If we use timbre and typeface as metaphors for qualia, we can see how it describes a difference in kind from the skeletal underpinnings of quanta. It’s true that we can use quantitative mechanisms to communicate qualia, but only if the receiver has the right kind of sensory range to match the sender’s intent. The mechanism has no way to appreciate the aesthetic content though, as all such qualities, whether they are fonts, timbre, image, or meaning, are reduced to the same binary digits. Compare this with what we hear, for example, when dragging a stick along the ground. There is the sound of the foot of the stick scraping the ground itself, and then there is the amplified, woody resonance which describes the length, mass, and density of the stick. Both can be felt as well as heard. The effect overall is a unified gestalt. Unlike a computation in which data must be explicitly separated and handled differently (some bits belong to the font, some to the text), natural sound is a feeling for our body and a knowing for our mind that is bound together metaphorically.

There is no mechanical process through which feelings and thoughts are bound. Instead, they are divided, like the spectrum of visible light is divided from white light, from the common sense of all experience. There is no way to simulate that coherence mechanically, but given enough artificial cues, our naturally poetic psyche will confabulate animation into it. We look at the words typed in a romantic script font and get a sense of a voice which might make the words seem pretentious or anachronistic. We hear the distorted guitar strings and feel that the song has become massively and explosively energetic. The ‘information’ which describes the underlying structure of the music or the typeface is non-poetic. It is only pixels and mathematical relations which relate to themselves and to mathematics in general, not to the experience of being alive. For being alive and aware, we need extra-mathematical qualities – the same qualities from which math itself emerges.

Seeing Visibility

Given that light has many strange properties, both on our natural scale as rays and on the elementary scale as photons, there is every reason to doubt that light qualifies as something which is unambiguously physical. On the other hand, since we cannot imagine a completely new color wheel, it would seem to say that the experience of seeing light is “real”, and not a label for certain kinds of information that is fabricated in the brain. People who become blind at an early age, for example, experience stimulation to their visual cortex as tactile stimulation rather than seeing lights or spots. The condition of blindsight shows that parts of our brain can receive optical information without our having experienced that information personally as visual sensation.

In a way, white light can be considered to be what it looks like when transparency is concentrated. White light is when the quality of visibility is so saturated that it exceeds the range of discernment. A bright light illuminates a room not with whiteness but with clarity. To shed light on something is to flood the visual field with an immediacy of aesthetic acquaintance that suggests veridical qualities of the environment being illuminated. This is why we have metaphors such as ‘seeing the light’: it is about being connected with the presence of what is true and sensible, rather than being passively bombarded with particles. It can be said that our experience of seeing is not a direct detection of what is true, since there are so many ways to reveal optical illusion.

By calling it an illusion, we are framing the phenomena in such a way as to implicate human fallibility rather than physics. Somehow we are wrong about what our eyes report, yet it is not clear that our assumption about what our eyes are reporting is scientifically valid. In fact, if it were not for these optical illusions, science would have very little to go on in determining the nature of vision as separate from physical truth, so it is actually the gaps between our expectations and the truth which reveal more truth about the nature of visual awareness, optics, electromagnetism, and quantum mechanics. Optical illusions are an encyclopedia of the nature of perceptual illusion, physical reality, and the relation between the two.

The folk science explanation for light perception generally begins with the idea that we can see light hitting our retina. That may be true, but not scientifically. The part that light plays in our visual system ends with the isomerization of rhodopsin in the retina. If what we see as “light” is in the visual cortex, then obviously what the visual cortex receives is not photons from the outside world, and it is not a direct analog that shows up as a small screen of images in an MRI. To even guess at some of the content of our visual field requires a blind statistical reconstruction. There is no plain-text transmission of images in our brain to simply hack into and view.

The effect that light has on the retina is merely to trigger the geometric extension of Vitamin A molecules and stop the flow of glutamate to the bipolar cells. That is well behind the first ganglion that would lead to the optic nerve and visual cortex. I propose instead that photons are not entities that are independent of their transmitters and receivers, and that light is therefore not physical but rather inter-physical, aka phenomenal, aka sensory. Photons are measures of the sensitivity of our mode of detection. Light is neither ray, beam, particle, nor wave, but rather a rhythmic phenomenalization of matter – a feeling that matter has about what is going on around it. By making inferences beyond our sensible grasp, I think that Quantum Theory has given noun and verb-like properties to what are ultimately adjectives. Bosons and fermions may not be like that at all, but rather they are opportunities for matter to re-acquaint itself. The elementary measurable features of the cosmos may not be particles or strings, but qualities which characterize the capacity of matter to measure and interact with itself. Physics is a mirror. For every action there is an equal and opposite reaction, because an equal and opposite reaction is a perfect reflection of our mode of scientific inquiry. We are investing our coins of empiricism in nature, extracting the empirical value, and recording the profit in our scientific ledger, like good, serious 18th century gentlemen.

It seems to me that only a medium which is intrinsically filled with the sense of color, form, and intensity across the many physical scales could reliably and veridically bridge the gap between public material realism and private experience. The notion of seeing light as a Rube Goldberg patchwork of conveyance into separate effects on every level*, all transported through a one dimensional collision detection schema, is not consistent with reality. There are too many examples of people who have seen things in dreams and visions, too many qualities of visual experience which cannot be decomposed sensibly to pixels or lines for the photon explanation to be satisfactory. Consider the qualia of color alone, whose idiopathic shifts and wheel-like symmetry have no place in the smooth continuum of the electromagnetic spectrum.

I suggest that light is only one specific form of a more universal medium, and that this medium is already known to us informally by the word ‘sense’. Sense as in sensation, sensitivity, sensor, but also as in making sense, sixth sense, and ‘in the sense of’. The unity of all sense can be more precisely expressed as ‘primordial identity pansensitivity’, ‘nested sensory-motive participation’, or even something like ‘self-tessellating aesthetic re-acquaintance’, depending on how technical and pretentious we want to get. From this Absolute firmament, and I think only from this firmament, can we get the full range of private experience, public physics, symbolic information, and the capacity to compare and contrast them. Only when physics is seen as identical with sense can physics be completed.

On the elementary level, with a nested sense primitive, we get relativistic locality (so eigenmetrics rather than eigenstates). Sense is modulating its own self-transparency and reflectivity to generate eigenmetric milieus – levels of scale that foster certain kinds of aesthetic themes and activities. The micro-world with its mathematical-molecular-insectoid clarity is different from the soft, lush features of zoological-arboreal-botanical existence. On some level perhaps, sense is nearly undiluted, and so the entire history of the cosmos is as a single now – a white whole singularity in which the now cannot even be completed and the here cannot hold even the hint of a ‘there’. On that level, there is non-locality.

*optical, molecular, cellular, ocular, neurological, psychological, sociological, zoological..

Computation as Shadow of Consciousness

I’m borrowing a (fantastic) image from Incredible Shadow Art Created From Junk by Tim Noble & Sue Webster to make a point about the Map-Territory distinction and how it pertains to simulated intelligence.

Although a computer simulation produces an output over time, that does not mean that it is four dimensional. (Time can be an additional dimension on any number of dimensions – a cartoon can be 2D but still last fifteen minutes.) By using the terms N and N-1 dimensional, I’m trying to make the point that no matter how extensive the measurements we make and how compelling their coherence seems to be, it can still be one dimension flatter than the original without our knowing it.

In the case of consciousness, I would say that it absolutely cannot be defined or described quantitatively, so that it truly is N dimensional, or trans-dimensional. Whatever number of dimensions we want to ascribe to some aspect of consciousness, that description will always be n-1 to the real thing. That is, in my understanding, the nature of representation – a destructive reduction of a presentation through a lower level (n-1 or n-x) medium which can be reconstructed at the higher level if, and only if, there is a higher level interpreter.
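The N versus N-1 point can be made concrete with a toy projection. This is only a sketch of the shadow metaphor: the two point sets are made-up stand-ins for a flat card and a pile of junk, and `cast_shadow` is a hypothetical helper name.

```python
def cast_shadow(points):
    """Project 3D points onto the z = 0 plane by discarding depth."""
    return {(x, y) for (x, y, z) in points}

# Two different hypothetical objects: same outline, different depth.
flat_card = {(0, 0, 0), (1, 0, 0), (0, 1, 0), (1, 1, 0)}
junk_pile = {(0, 0, 3), (1, 0, 7), (0, 1, 2), (1, 1, 9), (1, 1, 4)}

print(cast_shadow(flat_card) == cast_shadow(junk_pile))  # True: identical shadows
print(flat_card == junk_pile)                            # False: different objects
```

No amount of measuring the shadow tells us which object cast it. Recovering the depth takes something that already knows about depth, which is the role I am giving to the higher level interpreter.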

Some might object to the metaphor on the grounds that computation is not like a shadow, since changing a computation has predictable effects, while changing a shadow’s appearance does not affect the object. That’s true, but again, in this case, I am using N dimensional phenomena, so that interactiveness is part of the conservation of the temporal, before and after/cause and effect axis. In such a scenario, the flatland effect itself is modified somewhat and there are more dimensions shared. More shared dimensions = more conservation of sensory-motive agreement; however, there is also more that is not shared (feeling, sensations, colors, understanding, for example).

Some who are more familiar with the spectacular capabilities of cutting edge computation might object to the over-simplification, and say that I am criticizing an older generation of approaches to machine learning. There is some truth to that, but in the rarefied air of higher math, we get into what I call the super-impersonal level of intelligence. By comparison, the super-personal level of awareness could be described as mythic or poetic. Synchronicity can be concentrated through divination techniques like Ouija boards and Tarot cards. The Platonic ‘realm’ accessed through ultra-sophisticated computation is, in my view, a dual of that kind of divination, except rooted in the generic and repeatable rather than the instantaneous and volitional. The computer is an oracle to impersonal truths about truth itself, and can build locally applicable strategies from there; however, it cannot factor in any kind of personal feeling or intention.

The computational oracle is much more seductive than divination, since it does not leave the interpretation up to the audience. The computational oracle’s output is to be interpreted as objective fact…which is tremendously useful of course, when we are talking about objects. The danger is when we start believing what it has to say about subjects. As correct as the computer is about objective truth, it is equally incorrect about people. In the same/opposite way, the Ouija board is neither correct nor incorrect, but offers possibilities that tantalize the imagination.

The Sense of Acquaintance

In David Chalmers’ Precis of The Conscious Mind, in the summary of his argument for the Paradox of Phenomenal Judgment, he uses a particular wording that caught my attention:

“Knowledge of and reference to phenomenal states is based on something tighter than a causal relation; it is based on a relation of acquaintance.”

The term acquaintance sheds some new light on the idea of sense. While he uses it to pull away from functionalist assumptions, I would argue that he needs to go further still, so that there can be no confusion that acquaintance could be accomplished without an aesthetic appreciation. The question of whether an aesthetic quality can be appreciated mechanically, or if mechanism is inherently and eternally anesthetic is at the heart of all philosophical debate over consciousness and artificial intelligence.

In a related metaphysical question, can it be said that sense is truly an acquaintance, or is it always ultimately a re-acquaintance on a deeper level? Each of us, for example, had a moment when our eyes first showed us color. Of course, this would not have been possible had our eyes themselves not been expecting to fulfill a history of acquaintance with light discernment throughout the history of biology, and perhaps even biochemistry and electromagnetism itself. By contrast, our eyes do not acquaint us personally with X-Rays or radio waves. This suggests that on some level, acquaintance may be impossible if it is not ultimately a re-acquaintance.

Any understanding of sense should include this kind of holographic chicken-and-egg paradox of the expected and the unexpected. In order to support novelty in communication, there must be a common agreement on the fundamentals that comprise the channel of communication – its alphabet, protocols, the bandwidth specifications, etc. Each layer of varying information can be seen to arise from a deeper layer of invariant information, but ultimately, the capacity for sense to bootstrap itself must dissolve the difference between acquaintance and re-acquaintance. Sense is beneath time and novelty, beneath difference and similarity. It is (or ‘this is’) eternal by necessity, but it is also eternally masking itself into temporary dis-acquaintance.

Analogue, Brain Simulation Thread

Tell the difference between a set of algorithms in code that can mimic all the known processes for the input and output of a guitar into analogue equipment. The answer is no, because pros can’t tell the difference. The entire analogue process has been sufficiently well modeled and encapsulated in the algorithms. The inputs and outputs are physically realistic where the input and output are important. That is what substrate modelling of brain processes in computational neuroscience is about, i.e. brain simulations.

Just because our analysis of what is going on in the brain reminds us of information processing does not mean that the brain is only an information processor, or that consciousness is conjured into existence as a kind of information-theoretic exhaust from the manipulation of bits.

What you are not considering is that beneath any mechanical or theoretical process (which is all that computation is as far as we know) is an intrinsic sensible-physical context which allows switches to load, store, and compare – allows recursive enumeration, digital identities,…a whole slew of rules about how generic functions work. This is already a low level kind of consciousness. That could still support Strong AI in theory, because bits being the tips of an iceberg of arithmetic awareness would make it natural to presume that low level awareness scales up neatly to high level awareness.

In practice, however, this does not have to be the case, and in fact what we see thus far is the opposite. The universally impersonal and uncanny nature of all artificial systems suggests the complete lack of personal presence. Regardless of how sophisticated the simulation, all imitations have some level at which some detector cannot be fooled. Consciousness itself however, like the wetness of water, cannot be fooled. No doll, puppet, or machine which is constructed from the outside in has any claim on sentience at the level which we have projected onto it. This is not about a substitution level, it is about the specific nature of sense being grounded in the unprecedented, genuine, simple, proprietary, and absolute rather than the opposite (probabilistic, reproducible, complex, generic, and local). From the low level to a high is not a difference in degree, but a difference in kind, even though the difference between the high level and low level is a difference in degree.

What I mean by that is that anything can be counted, but numbers cannot be reconstructed into what has been counted. I count my fingers…1, 2, 3, 4, 5. We have now destructively compressed the “information” of my hand, each unique finger and the thumb, into a figure. Five. Five can apply generically to anything, so we cannot imagine that five contains the recipe for fingers. This is obviously a reductio ad absurdum, but I introduce it not as a straw man but as a clear, simple illustration of the difference between sensory-motive realism and information-theoretic abstractions. You can map a territory, but you can’t make a territory out of a map regardless of how much the map reminds you of the territory.
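The finger-counting example can be written out as a toy many-to-one mapping. The collections and the `count` helper below are hypothetical, chosen only to illustrate the point.

```python
def count(things):
    """Reduce any collection to a bare quantity."""
    return len(list(things))

hand = ["thumb", "index", "middle", "ring", "little"]
vowels = "aeiou"
weekdays = ("Mon", "Tue", "Wed", "Thu", "Fri")

print(count(hand), count(vowels), count(weekdays))  # 5 5 5

# Going backwards from the figure: every one of these is an equally
# valid answer to "what was counted?", so 5 alone cannot pick the hand.
candidates = [c for c in (hand, vowels, weekdays) if count(c) == 5]
print(len(candidates))  # 3
```

Counting maps many territories onto one figure, and the figure holds nothing from which to rebuild any of them.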

So yes, digital representations can seem exactly like analog representations to us, but they are both representations within a sensory context rather than a sensory-motive presentation of their own. All forms of representation exist to communicate across space and time, bridging or eliding the entropic gaps in direct experience. It’s not a bad thing that modeling a brain will not result in a human consciousness; it’s a great thing. If that were not so, it would be criminal to subject living beings to the horrors of being developed and enslaved in a lab. Fortunately, by modeling these beautiful 4-D dynamic sculptures of the recordings of our consciousness, we can tap into something very new and different from ourselves, but without being a threat to us (unless we take it for granted that they have true understanding, then we’re screwed).

Cookie Cutter Cosmologies

(With apologies to those who are more philosophically literate.)

Something In The Water: Logos and Tao

A superficial read of Pre-Socratic Greek philosophy introduces a dualism of Being and Becoming beginning with the water-based cosmology of Thales of Miletus in the 6th century BCE. Thales conceived of the universe as made of a single material substance: water**. Thales said:

“It is water that, in taking different forms, constitutes the earth, atmosphere, sky, mountains, gods and men, beasts and birds, grass and trees, and animals down to worms, flies and ants. All these are different forms of water. Meditate on water.”

On the other side of the known world, the development of Taoism at roughly the same time spoke of the primacy of water, or the metaphor of water as effortless constant change.

“Water never resists. It accepts all.

It never judges.

Therefore, to be one with Tao, be like water.”– Tao Te Ching

Heraclitus of Ephesus picks up on the theme of nature emerging from a single Logos, but expressed as fire rather than water. Many comparisons can be drawn between Heraclitus and Lao Tzu, as they both speak to a cosmos which is changeless only in its perpetual changing, and grounded in a united harmony of contrasts.*

“The one is made up of all things, and all things issue from the one.”

“It is an attunement of opposite tension (palintropos harmonie), like that of the bow and the lyre.”

(A Comparison between Heraclitus’ Logos and Lao-Tzu’s Tao pdf)

Being and Becoming

Parmenides of Elea and Heraclitus can be seen to comprise something of a philosophical dipole, with Heraclitus seeing (centuries before Process Philosophy [1]) a universe based on change and becoming rather than on fixed forms, and Parmenides asserting that the true nature of the universe is divided between the eternal divine truths and ephemeral local appearances [2]. Parmenides speaks in terms of what-is being what cannot not-be.

“what-is is ungenerable and imperishable,

a whole of a single kind, and unshaking and complete;

nor was it nor will it be, since it is now all together

one, cohesive.”

His emphasis on the significance of what-is (really, really real) as eternal and unchanging coincides with the views of Pythagoreans, such as Philolaus of Croton, who venerated the role of number in the cosmos and saw mathematics specifically as that which joins the unlimiteds and limiters which make up everything.

Plato sharpened the Pythagorean appreciation for ideal forms (including goodness, beauty, equality, bigness, likeness, unity, being, sameness, difference, change, and changelessness) which he saw as eternal. Platonic idealism lends a Parmenidean fixation to the insubstantial Heraclitean flux, but all remain analog and abstract. Democritus, who was in a sense the antithesis of Plato (Aristoxenus said that Plato wished to burn all the writings of Democritus that he could collect), managed to reconcile the flux and fixed in a different way which incorporated the concrete and empirical.

Philosophies of Science

Democritus’ notion of unchanging, irreducible atoms in a void which constantly change configurations is an idea which reconciles both Heraclitus and Parmenides [3]. This model has cast physics in a mold which, even today, remains almost inescapable. The combination of determinism and uncertainty has gone beyond atoms into the subatomic level. Quantum mechanical events are determined to be indeterminable, but governed by formulaic probabilities which are precise and unchanging.

Aristotle had a different perspective on what is essential which seems more grounded in language and relates to the proprietary versus the generic. A horse is an essential substance, whereas the category ‘horse’ is a secondary kind of substance. His hylomorphic compounds conceive of substance as the form of things, or their matter plus essence. It is a top-down kind of holism which contradicts the bottom-up reductionism of Democritus; however, Aristotle extends the binary logic of Parmenides rather than the divine flowing harmonies of Heraclitus and Plato.

Galileo applied the Classical dualism of unlimiteds and limiters in a scientific way, defining them in physical terms so that Primary qualities are conceived as being independent of any observer (shape, position, motion, contact, and number) and Secondary qualities are thought to be properties which produce sensations in observers. Galileo had a functionalist view of qualia, so that experiences like colors or feelings had a purpose in guiding our behavior toward God’s design. Locke updated Galileo’s primary and secondary qualities so that primary qualities had a dimension of realism which was not only objective but located in objects themselves; properties of solidity, extension, and figure. His secondary qualities are seen as powers of objects which mechanically produce sensation in us.

Kant’s take on unseen universals and their changing expressions was defined in terms of Noumena and Phenomena. His contrasts of ‘a priori’, ‘a posteriori’, ‘analytic’, and ‘synthetic’ clarify some of the themes of causality common to Locke, Aristotle, and Descartes. Even into the modern era, ultimate questions in philosophy and science lead back to ideal absolutes such as ‘information’ or ‘existence’ versus properties of a Phenomenal world of sensation (‘emergent’ or ‘illusory’). Jung and Bateson used the words Pleroma and Creatura to talk about the difference between the eternal totality which is beyond all experience and qualities, and the living world that is subject to perceptual difference and information.

There are, of course, many other philosophers and scientists that should be mentioned. Hobbes and Newton can be said to have continued the reductionist tradition of Democritus, while dialectic and monadic themes in Hegel, Leibniz, and Spinoza recapture the pre-Socratic sense of the Absolute. Aside from this bifurcation of Transcendental Idealism and Empirical Realism, there is also a progression from science as a discussion about ideals to a realization of empire. The chain of mentoring from Socrates to Plato to Aristotle to Alexander the Great begins with intellectual questioning and ends with the exercise of global political power, with a mastery of form, substance, causality and virtue in between.

Breaking the Mold

Thales’ hylozoism (he believed that matter was alive) and Aristotle’s essentialism fell into strong disfavor during the Early Modern period; however, there were a few who challenged the primacy of so-called primary qualities. For George Berkeley, all qualities were secondary, and matter was only a representation of the mind:

“The deducing therefore of causes or occasions from effects and appearances, which alone are perceived by sense, entirely relates to reason.”

Even though Berkeleyan idealism can arguably be seen to be supported by the Observer Principle interpretation of Quantum Theory, as well as Simulation Hypothesis, Holographic Universe, and Bohm’s Implicate Order, modern panpsychism has been dogged by the tendency for Idealism to be painted as Solipsism. The cliche “If a tree falls in a forest..” is often used to point out the absurdity of idealism, i.e. that the idea of linking existence to perception is beneath consideration on account of it being childish, superstitious, psychotic, and above all unfalsifiable.

In my view, this is a prejudiced characterization, in which one’s own individual perception is used to stand in for the principle of perception in general. This mistake is repeated again and again in Functionalism, Computationalism, Structural Realism, Logical Positivism, and Emergentism, among others, which demand a return to reductionist, Democritean models. A similar dismissal of Searle’s treatment of the Symbol Grounding Problem and Chalmers’ Hard Problem of Consciousness underscores the commitment to the primacy of fixed, object-like rules rather than rule-making subjects.

Cutting the Cookie

Taking this philosophical evolution into perspective, it is my intent to transcend all of the particulars of the past and see all of the prior philosophical approaches as blind men overlapping in their examination of the proverbial elephant. I side with Berkeley on the primacy of experience, as I see the publicly measurable aspects of the world as a reduced vocabulary which cannot add up to the rich aesthetics which we experience. I would compare qualia to an endless supply of cookie dough, in which cookie cutters could be made from the dough itself. The idea is that the edible can be made hard and inedible, but no amount of cookie-cutting utensils can render an edible cookie.

Going beyond cookies and cutters, however, requires a combination of Berkeley’s best arguments, Einstein’s Relativity, and Quantum Mechanical observations. The notion of perspective itself, of inertial frames and the importance of measurement in determining the nature of nature is repudiation of Berkeley. It is perception after all which serves as the master metaphor for all possible “relation”. It is from perception that all phenomena “emerge” and to perception that all phenomena become “evident”. I see no scientific reason not to extend this principle of perception beyond human experience and even beyond biology, so that physics itself is understood to be identical to “sense”. Sensing and sense-making provide a cookie and cutter autopoiesis, within which experience can both return ephemeral Creatura to the Pleroma, and draw eternal forms and fictions into the empirical realm. The cookie and cutter are themselves aesthetic contrasts within the same cookie batter/cook.

*The Tao translates as ‘the way’, and logos “Originally a word meaning “a ground”, “a plea”, “an opinion”, “an expectation”, “word”, “speech”, “account”, “reason”, it became a technical term in philosophy, beginning with Heraclitus (ca. 535–475 BC), who used the term for a principle of order and knowledge”.

In my own etymological forays, I have come across a theme which relates a lot of terms having to do with consciousness with the Proto-Indo-European roots “wag” and “wegh”. The combined sense is that of a way of weaving or wagging. Tending to wander or wave, and attending: to wake, watch, and weigh.

**In the 5th c., Xenophanes linked all physical phenomena with clouds and the sea.

“The sea is the source of water and of wind…

The stars come into being from burning clouds…

The sun consists of burning clouds…

The moon is compressed cloud…

All things of this sort [comets, shooting stars, meteors] are either groups or movements of clouds.”

- “Modern philosophers who appeal to process rather than substance include Nietzsche, Heidegger, Charles Peirce, Alfred North Whitehead, Robert M. Pirsig, Charles Hartshorne, Arran Gare and Nicholas Rescher. In physics Ilya Prigogine distinguishes between the “physics of being” and the “physics of becoming”. Process philosophy covers not just scientific intuitions and experiences, but can be used as a conceptual bridge to facilitate discussions among religion, philosophy, and science.” – Wiki

- Parmenides prefigures Plato, Galileo, Descartes, Locke, Kant, Hegel, Spinoza and many others who invoke a Heaven-and-Earth type dualism.

- “Democritus managed to reconcile the Heraclitean theory of flux with the theory of perfect immutability of Parmenides and demonstrate that both theories are truly complimentary for each one explains a different level of reality and do not contradict each other. It is true that, essentially, things do not change (as Parmenides claims), for they are composed of eternal and indivisible particles (atoms), but it is also true that the arrangement of this atoms could be altered resulting in apparent changes (as Heraclitus argued).” – Substantia Primordium: Parmenides and Heraclitus reconciled
